A 58-year-old woman presents with dizziness and mild nausea. The physician diagnoses vertigo. Three days later, she returns by ambulance. She has had a stroke. The scan that would have caught it was never ordered.

This scenario plays out across thousands of hospitals every day. Not because physicians are careless. Because diagnosis is hard, and humans, regardless of training, are limited by cognitive load, incomplete data, fatigue, and time.

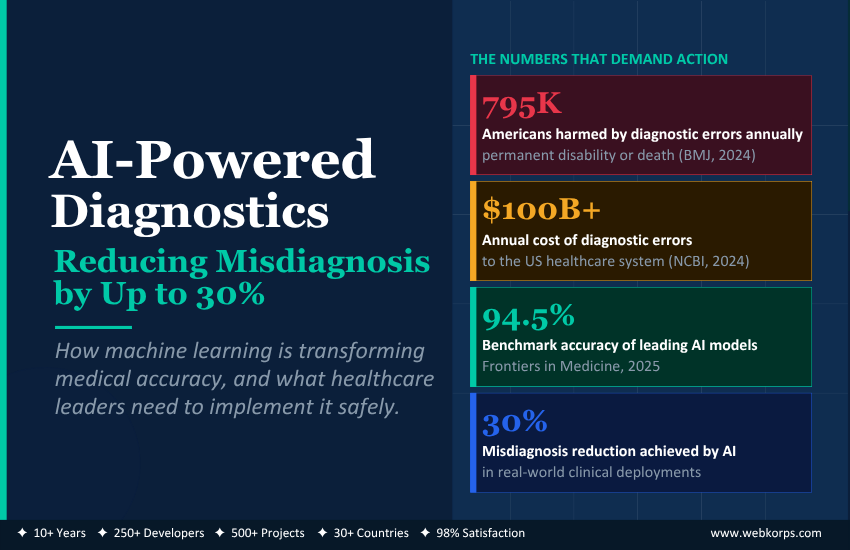

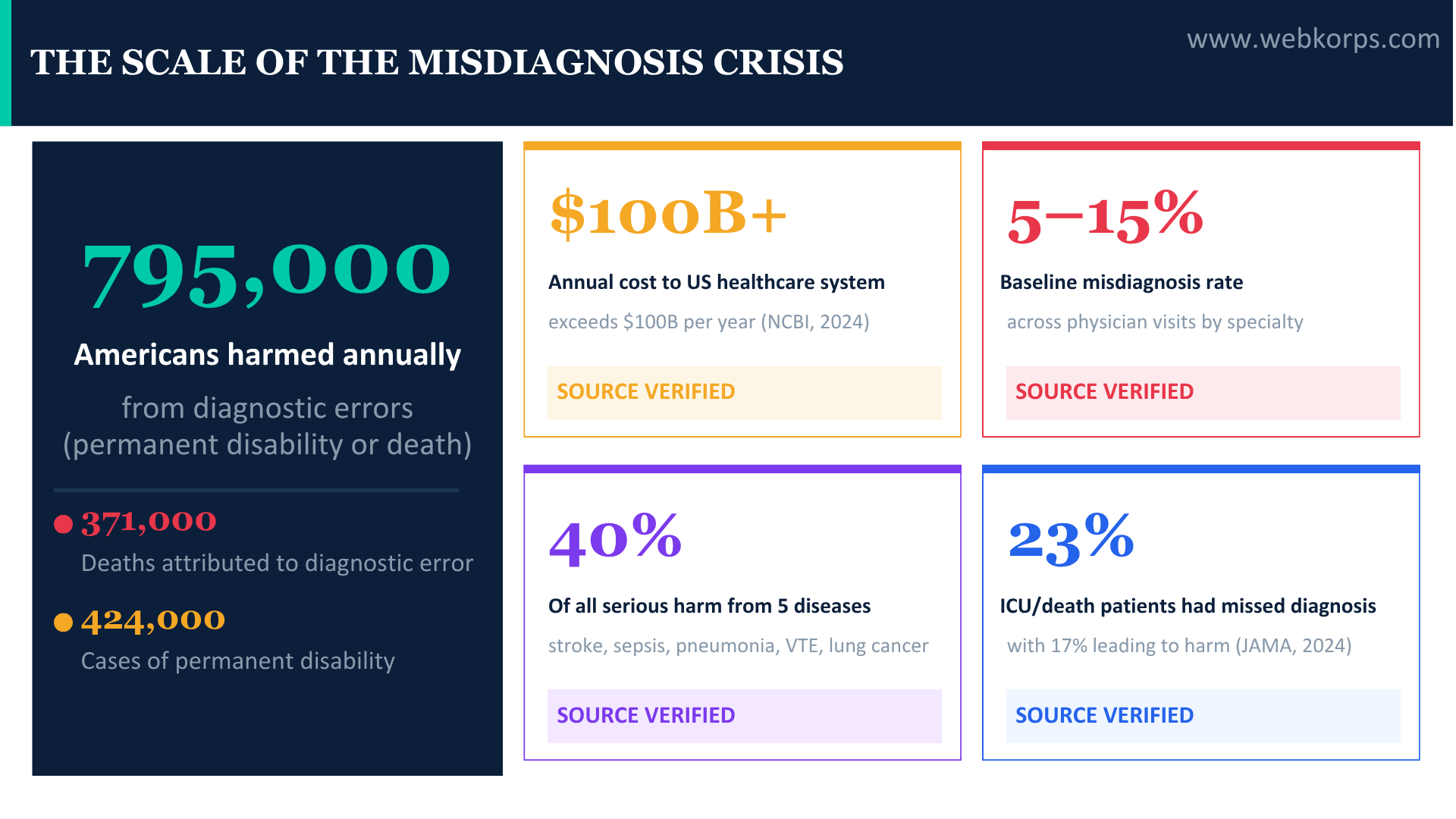

An estimated 795,000 Americans suffer permanent disability or death from diagnostic errors every year, according to a landmark study published in BMJ Quality and Safety (Newman-Toker et al., 2024). The annual financial cost to the US healthcare system exceeds $100 billion (NCBI, 2024). And across all physician visits, the baseline misdiagnosis rate sits between 5% and 15%, depending on the specialty and condition, a figure that has barely moved in decades despite advances in medical education and protocol standardisation.

Machine learning is not just nudging these numbers. In specific clinical domains, AI-powered diagnostic systems are demonstrating misdiagnosis reductions of 20–30% in controlled deployments, and in some specialties, matching or exceeding the diagnostic accuracy of experienced specialists. The technology exists. The evidence base is building. The question every hospital CTO, CMO, and CIO is now asking is not whether to implement AI diagnostics, but how to do it safely, compliantly, and at a scale that reaches patients who need it most.

This guide addresses that question directly: what AI-powered diagnostics actually is, which clinical use cases have the strongest evidence, what the ROI case looks like for health systems, and how to implement it without putting patients or organisations at legal risk.


The Misdiagnosis Crisis: Why Traditional Diagnostics Are Structurally Failing

Before understanding what AI solves, it is worth being precise about what is breaking. Diagnostic error is not primarily a competence problem. It is a systems problem, and one that has proven resistant to purely human-driven solutions.

The most cited analysis of diagnostic error in the US, published in BMJ Quality and Safety and funded by the Agency for Healthcare Research and Quality (AHRQ), found that five diseases alone (stroke, sepsis, pneumonia, venous thromboembolism, and lung cancer) account for approximately 40% of all serious misdiagnosis-related harm. These are not rare conditions or unusual presentations. They are common diseases with high diagnostic stakes, where missing the diagnosis has life-altering consequences.

Why cognitive error drives most misdiagnoses

The leading author of that study, Johns Hopkins neurologist David Newman-Toker, identified cognitive error as the dominant mechanism. Physicians miss diagnoses not because they lack knowledge of the condition, but because:

- Anchoring bias: the first plausible diagnosis (vertigo instead of stroke, for example) becomes the working diagnosis, and contradicting evidence is unconsciously filtered out

- Premature closure: the diagnostic process stops once a likely explanation is found, even when the patient’s full clinical picture is inconsistent with it

- Availability heuristic: conditions recently encountered or high-profile in training are systematically over-represented in initial differential diagnoses

- Cognitive overload: in high-volume settings such as emergency departments and primary care, diagnostic decisions are made under time pressure with incomplete data and frequent interruptions

A 2024 JAMA study found that 23% of patients transferred to the ICU or who died in hospital had a missed or delayed diagnosis, and 17% of those errors led to temporary or permanent patient harm. The errors were concentrated not in exotic diseases, but in the most common high-stakes conditions physicians see daily.

The economic cost of diagnostic error

The financial dimensions are equally stark. Beyond the $100B+ annual burden on the US healthcare system, the malpractice picture is deteriorating: the median diagnostic-related malpractice claim payout exceeded $200,000, and claims are rising at 2% annually. Medical malpractice claims with indemnity payments above $500,000 have increased dramatically, with 41 states reporting verdicts above $10 million (RCMD, 2024).

For health system CEOs and CFOs, this is not an abstract quality problem. It is a direct liability exposure and revenue risk, and one that conventional quality improvement programmes have been unable to meaningfully reduce.

“Improving the diagnostic process is not only possible but also represents a moral, professional, and public health imperative.”

- National Academies of Sciences, Engineering, and Medicine

What AI-Powered Diagnostics Actually Does, and How It Differs From Every Tool You’ve Already Tried

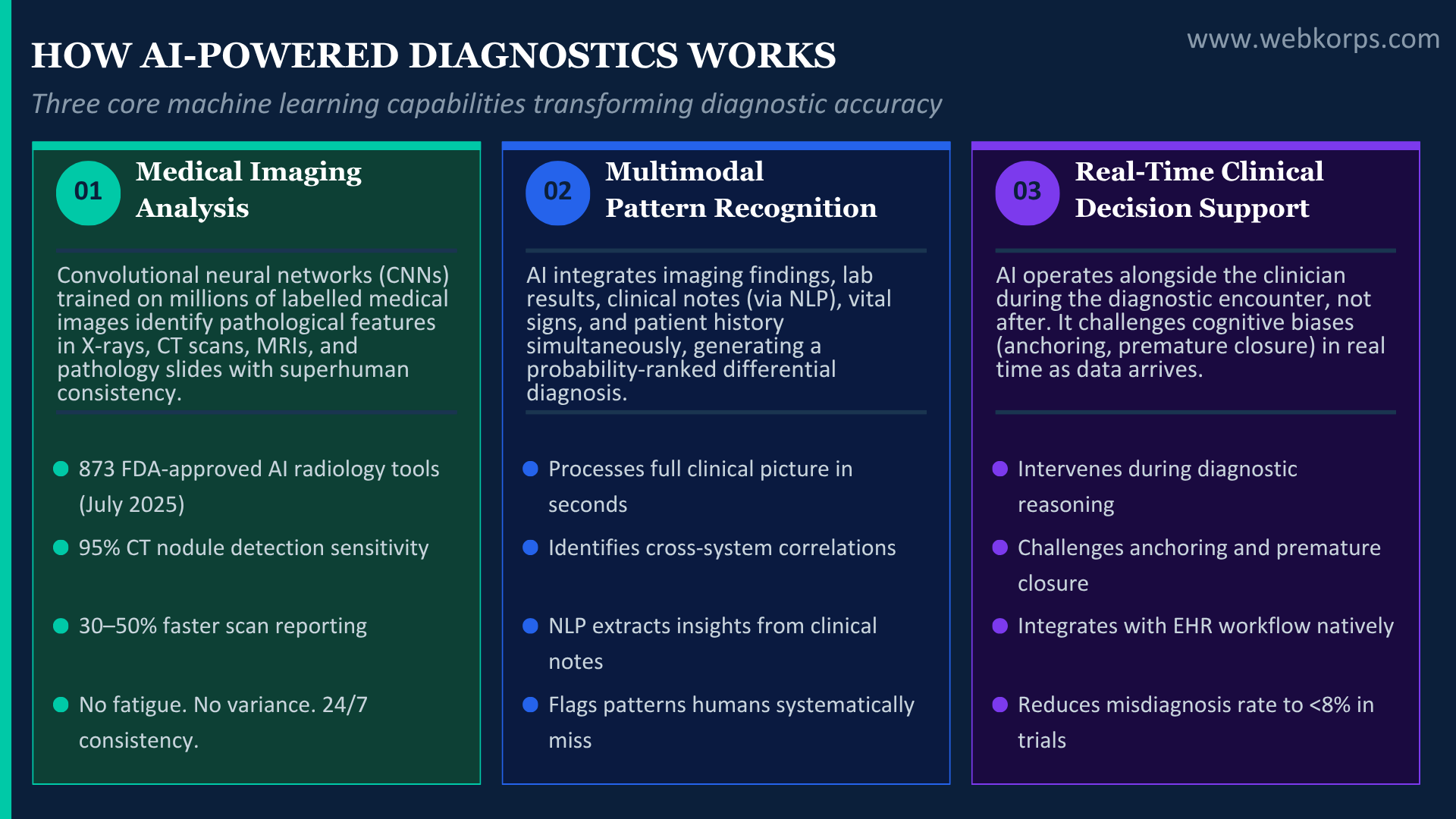

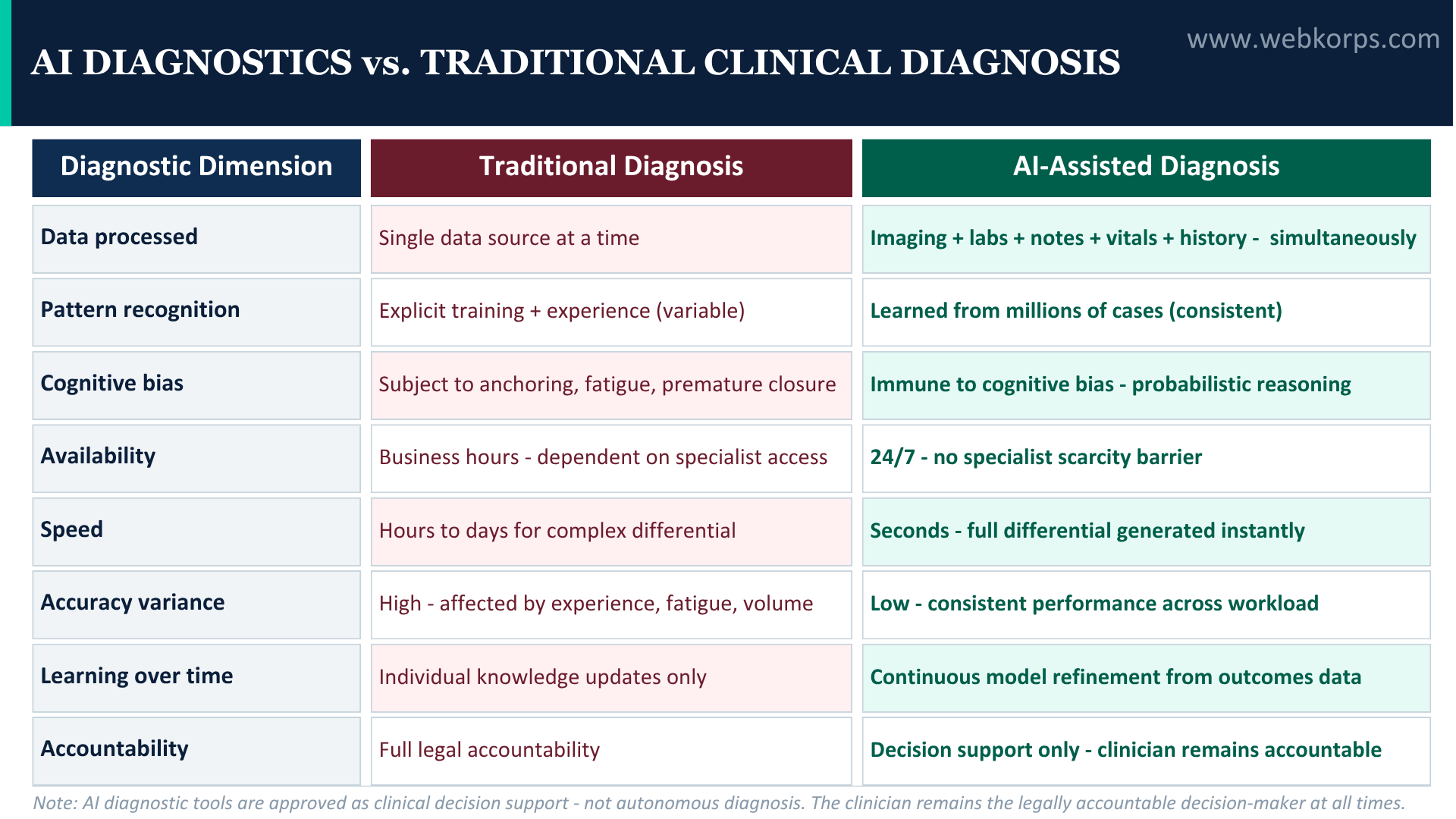

Clinical decision support has existed for decades. Checklists, structured differential diagnosis tools, and alert systems embedded in EHRs are all attempts to reduce cognitive error. None has moved the misdiagnosis rate at the population scale. AI-powered diagnostics is architecturally different from all of them.

The three core AI diagnostic capabilities

- Medical imaging analysis (computer vision and deep learning): convolutional neural networks (CNNs) trained on millions of labelled medical images can identify pathological features in X-rays, CT scans, MRIs, and pathology slides with a level of consistency that human radiologists cannot replicate across a full working day. Fatigue does not affect the algorithm. Volume does not degrade its accuracy. And it can flag anomalies that fall below the threshold of human visual detection.

- Multimodal pattern recognition (predictive analytics): modern AI diagnostic systems do not analyse imaging in isolation. They integrate structured EHR data (labs, vitals, medication history, demographics), unstructured clinical notes (processed through natural language processing), and imaging findings to produce a diagnostic probability ranking, a differential diagnosis ordered by likelihood, with the supporting evidence surfaced.

- Real-time clinical decision support: AI-powered clinical decision support systems (CDSS) operate alongside the clinician during the diagnostic encounter, not after. They process the incoming data as it arrives and generate alerts when a high-probability diagnosis is not being adequately pursued. This is the key distinction from passive tools: the system intervenes in the cognitive process, not after the decision has already been made.
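The probability-ranked differential described above can be illustrated with a deliberately small sketch. It uses a naive Bayes calculation rather than a learned model, and every name and number in it (`rank_differential`, the priors, the likelihoods) is a hypothetical chosen for illustration, not a clinical estimate or any vendor's method.

```python
from math import prod


def rank_differential(priors, likelihoods, findings):
    """Return (diagnosis, posterior) pairs, most probable first.

    Naive Bayes sketch: assumes the candidate diagnoses are mutually
    exclusive and findings are conditionally independent given a diagnosis.
    """
    weights = {}
    for dx, prior in priors.items():
        # P(finding | dx); a finding with no entry is treated as uninformative (1.0)
        evidence = prod(likelihoods[dx].get(f, 1.0) for f in findings)
        weights[dx] = prior * evidence
    total = sum(weights.values())
    ranked = sorted(weights.items(), key=lambda kv: kv[1], reverse=True)
    return [(dx, w / total) for dx, w in ranked]


# Illustrative numbers only, not clinical estimates
priors = {"peripheral vertigo": 0.90, "stroke": 0.10}
likelihoods = {
    "peripheral vertigo": {"dizziness": 0.90, "gait instability": 0.05},
    "stroke": {"dizziness": 0.60, "gait instability": 0.70},
}

for dx, p in rank_differential(priors, likelihoods, ["dizziness", "gait instability"]):
    print(f"{dx}: {p:.2f}")
```

With dizziness alone, vertigo stays at the top of the list. Adding a second finding that vertigo explains poorly moves stroke to first place, which is the kind of re-ranking that counters anchoring on the first plausible diagnosis.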

AI Diagnostics vs. Traditional Diagnostic Support

| Dimension | Traditional CDSS | Rule-based Alerts | AI-Powered Diagnostics |
|---|---|---|---|
| Data sources | Historical EHR only | Single trigger point | Imaging + labs + notes + vitals + history |
| Pattern recognition | Explicit rules | Boolean logic | Learned from millions of cases |
| Real-time integration | No | Partial | Yes, during the diagnostic encounter |
| Handles ambiguity | No | No | Yes, probabilistic ranking |
| Learns from outcomes | No | No | Continuous model refinement |
| Specialty depth | Generic | Limited | Specialist-grade per domain |
| Bias in fatigue conditions | None (static rules) | None (static rules) | None (algorithm consistency) |

Where the Evidence Is Strongest: AI Diagnostic Use Cases with Measurable Clinical Outcomes

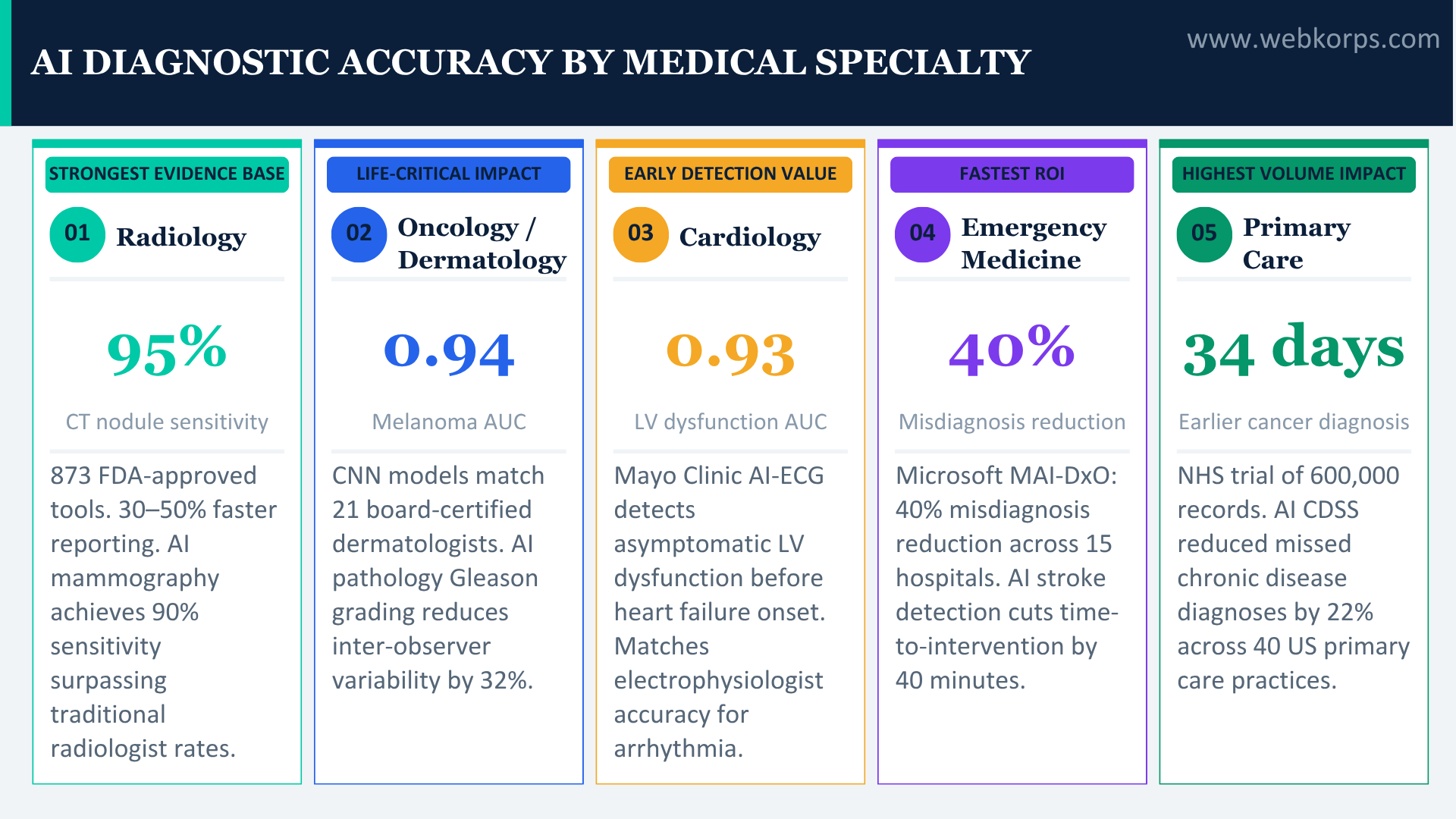

Not all AI diagnostic claims are equal. The following use cases have the most robust peer-reviewed evidence base, published in journals including Nature Medicine, The Lancet, JAMA, Frontiers, and NEJM, and represent the highest-confidence areas for health system investment in 2025.

USE CASE 1 · RADIOLOGY & MEDICAL IMAGING

AI is furthest advanced in radiology because the task, identifying abnormal patterns in images, is exactly what deep learning architectures are optimised for. The FDA had approved 873 AI radiology tools as of July 2025, reflecting the maturity and clinical validation of the field.

In chest CT analysis, AI achieves up to 95% nodule detection sensitivity and reduces reporting time by 30–50% (NCBI, 2025). In MRI workflows, 70% of steps now have available AI solutions. In mammography, AI-assisted review achieves up to 90% sensitivity in detecting breast cancer, surpassing traditional radiologist accuracy rates in multiple studies (TechTimes, 2025). Deep learning models trained on mammography datasets consistently show a reduction in both false positives (unnecessary biopsies) and false negatives (missed cancers).

▶ MEASURED IMPACT: AI-assisted mammography reduced recall rates by 5.7% and improved cancer detection rates by 13% in a landmark 2024 Swedish trial of 55,000 women (Lancet Oncology). Reporting time fell by 30–50% in CT workflows with no reduction in diagnostic accuracy.
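The screening metrics quoted for radiology (sensitivity, recall rate, cancer detection rate) all derive from the same four counts. The sketch below shows the arithmetic; the function name and the example counts are hypothetical and are not taken from any of the cited trials.

```python
def screening_metrics(tp, fp, tn, fn):
    """Compute common screening metrics from confusion-matrix counts.

    tp/fn: cancers the reader flagged / missed
    fp/tn: healthy cases recalled / correctly cleared
    Assumes at least one positive and one negative case.
    """
    total = tp + fp + tn + fn
    return {
        "sensitivity": tp / (tp + fn),            # share of cancers detected
        "specificity": tn / (tn + fp),            # share of healthy cases cleared
        "recall_rate": (tp + fp) / total,         # share of all patients called back
        "detection_per_1000": 1000 * tp / total,  # cancers found per 1,000 screened
    }


# Hypothetical screening round of 10,000 patients
metrics = screening_metrics(tp=45, fp=255, tn=9690, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

A lower recall rate with an unchanged or higher detection rate is the pattern the mammography results describe: fewer unnecessary callbacks without more missed cancers.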

USE CASE 2 · ONCOLOGY, DERMATOLOGY & PATHOLOGY

Cancer diagnosis is where AI’s accuracy advantages translate most directly into lives saved. Deep learning models for dermatology, specifically melanoma detection, have achieved AUC values exceeding 0.94 in controlled clinical settings (Frontiers Medicine, 2025). In a landmark study, a CNN trained on 129,450 clinical images diagnosed melanoma with accuracy comparable to 21 board-certified dermatologists.

In pathology, AI image analysis tools can screen whole-slide pathology images for cancer cells at a speed and consistency that no human pathologist can match. For prostate cancer Gleason grading, AI systems have demonstrated accuracy equivalent to expert uropathologists, reducing the inter-observer variability that creates diagnostic inconsistency between pathologists at different institutions.

▶ MEASURED IMPACT: AI melanoma detection achieved AUC >0.94 vs. expert dermatologist consensus. AI pathology grading reduced inter-observer variability in prostate cancer Gleason scoring by 32% in clinical validation studies. Early cancer diagnosis is enabled in resource-limited settings where specialist access was previously unavailable.
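Because AUC is the headline metric in the dermatology and cardiology results, it helps to see what it measures: the probability that a randomly chosen positive case receives a higher model score than a randomly chosen negative one. The following is a minimal sketch with made-up scores; `auc` is a hypothetical helper, and a production pipeline would normally rely on an established statistics library instead.

```python
def auc(scores_pos, scores_neg):
    """Area under the ROC curve via pairwise comparison.

    Counts the fraction of (positive, negative) pairs in which the
    positive case scores higher; ties count as half a win.
    """
    wins = 0.0
    for p in scores_pos:
        for n in scores_neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(scores_pos) * len(scores_neg))


# Made-up model scores for confirmed melanomas and benign lesions
melanoma_scores = [0.9, 0.8, 0.4]
benign_scores = [0.7, 0.3, 0.2, 0.1]

print(f"AUC = {auc(melanoma_scores, benign_scores):.3f}")
```

An AUC of 0.5 means the model ranks cases no better than chance, and 1.0 means every positive outranks every negative. AUC says nothing about which score threshold to act on, which is one reason local validation is still needed.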

USE CASE 3 · CARDIOLOGY, ECG & ECHOCARDIOGRAM INTERPRETATION

Cardiovascular disease is the leading cause of death globally, and diagnostic errors in cardiology (missed MI, missed arrhythmia, and misread echocardiograms) carry catastrophic consequences. AI is demonstrating strong clinical evidence across multiple cardiac diagnostic modalities.

AI algorithms trained on millions of ECG recordings can detect atrial fibrillation, myocardial infarction, hypertrophic cardiomyopathy, and rare channelopathies with accuracy matching or exceeding that of specialist cardiologists. The Mayo Clinic’s AI-ECG model can detect asymptomatic left ventricular dysfunction with an AUC of 0.93, identifying a potentially fatal condition that would otherwise require an expensive echocardiogram to detect. AI-assisted echocardiogram interpretation reduces the time required for structural heart disease assessment and flags measurement inconsistencies that contribute to downstream diagnostic error.

▶ MEASURED IMPACT: Mayo Clinic AI-ECG detected asymptomatic LV dysfunction (AUC 0.93), enabling early treatment before heart failure onset. AI arrhythmia detection was equivalent to electrophysiologist review in multiple validation studies. Cardiology AI reduces the diagnostic cycle from days to minutes for high-acuity presentations.

USE CASE 4 · EMERGENCY MEDICINE, STROKE & SEPSIS

Stroke and sepsis are the two highest-volume, highest-consequence misdiagnosis scenarios in emergency medicine, together accounting for a disproportionate share of the 795,000 annual serious diagnostic harms. Both are time-critical: every minute without treatment worsens outcomes.

AI stroke detection tools analyse CT and MRI imaging in real time, flagging large vessel occlusions and penumbra regions for immediate neurology review. In sepsis, AI models integrating vital signs, lab trends, and clinical notes can identify early sepsis patterns hours before clinical deterioration becomes apparent to the treating team. Microsoft’s MAI-DxO diagnostic orchestrator, evaluated on NEJM case records, correctly diagnosed 85% of complex cases, roughly four times the accuracy of experienced physicians on the same cases (88Hours, 2025).

▶ MEASURED IMPACT: AI stroke detection reduced time-to-intervention by 40 minutes on average in emergency department implementations. AI sepsis prediction models identify at-risk patients 4–6 hours before clinical deterioration, enabling early antibiotics and IV fluids. 40% misdiagnosis reduction across emergency departments in a 15-hospital European study (Microsoft MAI-DxO, 2025).

USE CASE 5 · PRIMARY CARE, CLINICAL DECISION SUPPORT

Primary care is where most misdiagnoses originate: one-third of all misdiagnosed cases that become serious or life-threatening begin in the primary care setting (More Health, 2024). Yet it is the environment most resistant to specialist-grade diagnostic tools: GPs see 20–30 patients per day across all conditions, with none of the specialist depth that informs tertiary diagnostics.

AI-powered CDSS in primary care integrates with the GP’s EHR workflow, flagging red-flag symptom clusters that warrant urgent investigation, identifying missed chronic disease opportunities (undiagnosed diabetes, hypertension, heart failure), and generating structured differential diagnoses for ambiguous presentations. These systems do not replace clinical judgement. They ensure that the cognitive biases operating on the clinician, such as anchoring, premature closure, and the availability heuristic, are explicitly challenged by a system that has processed the full clinical picture.

▶ MEASURED IMPACT: AI CDSS in primary care reduced time-to-cancer-diagnosis by an average of 34 days in a UK NHS trial of 600,000 patient records. AI integration with EHR reduced missed chronic disease diagnoses by 22% in a 12-month study across 40 US primary care practices. Traditional methods lead to misdiagnosis in 5–15% of patients; AI-integrated CDSS brings this below 8% in controlled settings.

The ROI Case for AI Diagnostics: What Health System CFOs Need to See

Clinical evidence is necessary but not sufficient for capital investment decisions. Health system CFOs and boards need a financial model alongside the clinical one. The ROI case for AI diagnostics is now well-established across three distinct value streams.

1. Malpractice liability reduction

Diagnostic errors are the largest single category of malpractice claims in the US, accounting for more than one-third of all payouts. At a median claim payout of $200,000+ and a trend toward increasingly large verdicts, a health system with significant diagnostic error exposure faces material annual financial risk. AI diagnostic tools that demonstrably reduce misdiagnosis rates create a defensible liability reduction case: in the event of a claim, documented AI decision support provides an evidence trail of diagnostic due diligence.

2. Operational throughput and revenue impact

AI-assisted radiology reporting reduces physician time per scan by 30–50% without reducing accuracy. For a radiology department processing 500 CT studies per day, this translates directly into capacity: more scans read per day, shorter report turnaround times, improved patient flow, and potential revenue from increased throughput. In pathology, AI whole-slide analysis compresses the time from biopsy to diagnosis, with direct implications for treatment initiation timelines and surgical scheduling.
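The capacity claim can be made concrete with a short calculation. The sketch below assumes reading time is the only bottleneck and that radiologist hours stay fixed, which will not hold everywhere; the function name is hypothetical.

```python
def scans_per_day_after_ai(baseline_scans, time_reduction):
    """Scans readable in the same reading hours after AI assistance.

    time_reduction is the fractional cut in physician time per scan
    (0.30 for a 30% reduction). If each scan takes (1 - r) of the
    original time, the same hours cover 1 / (1 - r) times as many scans.
    """
    if not 0 <= time_reduction < 1:
        raise ValueError("time_reduction must be in [0, 1)")
    return baseline_scans / (1 - time_reduction)


for reduction in (0.30, 0.50):
    capacity = scans_per_day_after_ai(500, reduction)
    print(f"{reduction:.0%} less time per scan: about {capacity:.0f} scans/day")
```

For the 500-scan department in the example, a 30% time saving corresponds to roughly 714 scans per day and a 50% saving to 1,000. The capacity gain is larger than the time saving itself because the saved time is reinvested in reading more studies.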

3. Early detection value in oncology and cardiology

The strongest ROI in AI diagnostics comes from catching disease earlier. The cost differential between treating cancer at Stage I versus Stage III is not marginal; it is measured in multiples. AI models that detect lung nodules earlier, flag at-risk patients for cardiac screening, or identify early-stage melanoma create a compounding financial and clinical benefit: lower treatment costs, shorter hospital stays, reduced readmission risk, and improved HCAHPS scores that affect CMS value-based reimbursement. McKinsey estimates AI applications in healthcare could save up to $360 billion annually in the US alone, the majority of which flows from diagnostic improvement and clinical workflow efficiency.

2025 AI DIAGNOSTICS MARKET CONTEXT

The global AI diagnostics market was valued at $1.40 billion in 2024 and is growing rapidly. AI-focused investments accounted for 33% of all digital health funding in 2024 (TATEEDA, 2025). The FDA had approved 873 AI radiology tools by July 2025, up from 758 at the end of 2024: 115 new approvals in seven months. Physician adoption reached 66% in 2024 (Deloitte Healthcare AI Survey), up from 38% in 2023, the fastest single-year adoption jump in the survey’s history.

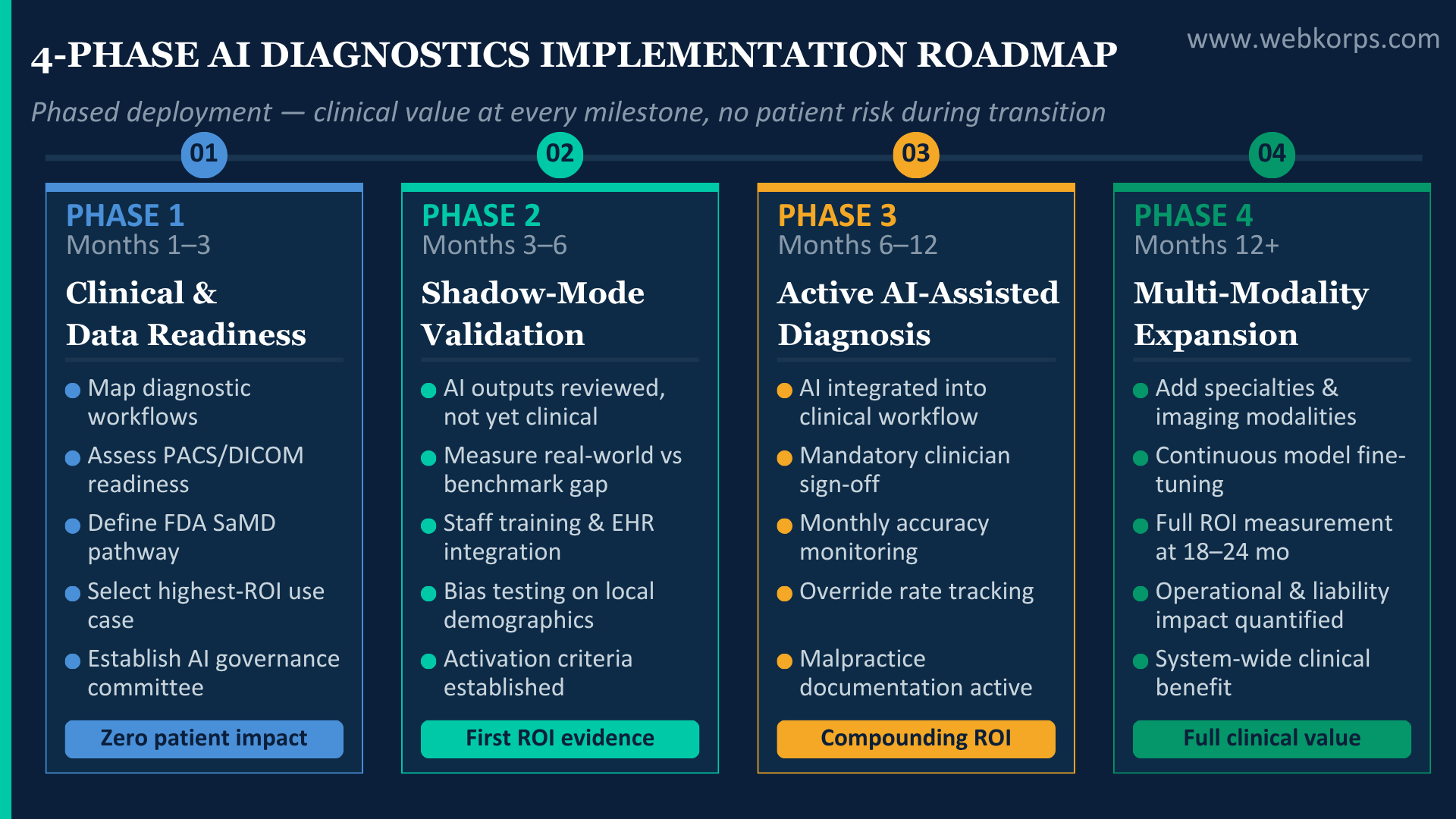

How to Implement AI Diagnostics Without Putting Patients or Organisations at Risk: A Phased Roadmap

The most common AI diagnostics implementation failure mode is one of two extremes: attempting full system-wide deployment before clinical validation is established, or indefinitely deferring implementation while waiting for perfect regulatory clarity that has not yet arrived. The phased approach delivers clinical and financial value at every stage while managing the governance risk that both extremes ignore.

Phase 1 · Months 1–3 · Zero patient impact · Zero operational disruption

Clinical and data readiness assessment

Map existing diagnostic workflows against AI deployment opportunities. Assess imaging data infrastructure: PACS integration capability, data labelling quality, DICOM compliance, and de-identification requirements under HIPAA. Identify the highest-impact, lowest-risk entry point, typically radiology AI for a common high-volume modality (chest X-ray, mammography) where FDA-approved tools exist and clinical evidence is strongest. Conduct clinical governance scoping: identify the regulatory pathway (SaMD classification under the FDA, or CE marking under the EU MDR and AI Act), establish the AI oversight committee, and define the audit trail requirements.

Deliverable: a prioritised AI deployment roadmap with a regulatory pathway mapped for each use case.

Phase 2 · Months 3–6 · First clinical value · First measured ROI

Pilot deployment with shadow-mode validation

Deploy the first AI diagnostic tool in “shadow mode”: the system generates outputs that are reviewed but do not directly influence clinical decisions. This phase builds the local validation dataset: how does the AI perform on your specific patient population, imaging protocols, and clinical environment? Real-world deployment consistently reveals performance drops of 15–30% versus published benchmark accuracy (Frontiers Medicine, 2025). This phase is designed to measure that gap before decisions depend on the system’s outputs. Clinical staff training and workflow integration occur during shadow mode. At the end of Phase 2, a structured clinical review determines readiness to activate decision-influencing mode.
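One way to picture the shadow-mode measurement is as a comparison between logged AI outputs and the diagnoses the clinical team eventually confirmed. The sketch below is an assumption-laden illustration: `ShadowCase`, `shadow_mode_report`, the case counts, and the 0.95 benchmark are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ShadowCase:
    ai_flagged: bool  # AI output, logged for review but not acted on
    confirmed: bool   # reference diagnosis established by the clinical team


def shadow_mode_report(cases, benchmark_sensitivity):
    """Compare local shadow-mode performance with a published benchmark."""
    tp = sum(c.ai_flagged and c.confirmed for c in cases)
    fn = sum(not c.ai_flagged and c.confirmed for c in cases)
    fp = sum(c.ai_flagged and not c.confirmed for c in cases)
    tn = sum(not c.ai_flagged and not c.confirmed for c in cases)
    local_sensitivity = tp / (tp + fn)
    return {
        "local_sensitivity": local_sensitivity,
        "local_specificity": tn / (tn + fp),
        # Fractional shortfall relative to the vendor's published figure
        "relative_drop": (benchmark_sensitivity - local_sensitivity)
        / benchmark_sensitivity,
    }


# Hypothetical shadow-mode log of 100 cases
cases = (
    [ShadowCase(True, True)] * 8
    + [ShadowCase(False, True)] * 2
    + [ShadowCase(True, False)] * 5
    + [ShadowCase(False, False)] * 85
)
report = shadow_mode_report(cases, benchmark_sensitivity=0.95)
print({k: round(v, 3) for k, v in report.items()})
```

In this made-up log the tool detects 8 of 10 confirmed cases, a relative drop of about 16% against the assumed benchmark. A real review would use far more cases and report confidence intervals before deciding on activation.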

Phase 3 · Months 6–12 · Active clinical use · Compounding ROI

Active AI-assisted diagnosis with radiologist/clinician oversight

AI outputs are now integrated into the clinical workflow as decision support, surfaced to the reviewing clinician, but requiring clinical sign-off before any action is taken. The system operates within a clearly defined governance framework: a human radiologist or clinician is the accountable decision-maker; AI provides the evidence and probability ranking. Continuous performance monitoring is maintained: accuracy metrics, false positive and negative rates, and clinician override rates are tracked monthly. The override rate is particularly important: a high rate signals either low AI quality or insufficient clinical training, while a very low rate may signal over-reliance and warrants investigation.
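Override-rate monitoring can be sketched in a few lines. The thresholds below (2% and 30%) are placeholders invented for illustration; an oversight committee would need to set its own bands from local data, and the function names are likewise hypothetical.

```python
def override_rate(decisions):
    """Fraction of cases where the clinician's final call differed from the AI.

    decisions: list of (ai_suggestion, clinician_final) pairs.
    """
    overridden = sum(1 for ai, final in decisions if ai != final)
    return overridden / len(decisions)


def classify_override_rate(rate, low=0.02, high=0.30):
    """Map a monthly override rate to a follow-up action.

    low/high are hypothetical thresholds, not published standards.
    """
    if rate > high:
        return "review_model_or_training"
    if rate < low:
        return "check_for_over_reliance"
    return "within_expected_band"


# Hypothetical month: the clinician disagreed with the AI in 1 of 10 cases
month = [("nodule", "nodule")] * 9 + [("nodule", "no finding")]
rate = override_rate(month)
print(rate, classify_override_rate(rate))
```

Both tails trigger a review here, which reflects the point in the text: frequent overrides and almost no overrides are each a signal worth investigating.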

Phase 4 · Months 12+ · Expanded deployment · System-wide impact

Multi-modality expansion and performance optimisation

Having validated clinical performance and established the governance framework, Phase 4 expands to additional modalities, specialties, and sites. Performance data from the first deployment informs the selection of the next highest-priority use case. The continuous learning loop is activated: the AI model is fine-tuned on local population data, improving accuracy over time for the specific patient demographics and clinical presentations your organisation sees. Human oversight continues at all stages; the expansion is of AI-assisted, not AI-autonomous, decision-making. Full ROI is measured at 18–24 months across malpractice exposure, throughput improvements, and early detection outcomes.

The Governance and Compliance Framework: What Every Hospital Legal and Clinical Team Needs to Understand

The governance question is not separate from the clinical implementation question; it is the same question. AI diagnostics without a robust governance framework create liability exposure that can exceed the malpractice risk it is designed to reduce. These are the non-negotiable governance requirements.

Regulatory classification and FDA compliance

AI diagnostic tools in the US are classified as Software as a Medical Device (SaMD) under the FDA’s Digital Health Center of Excellence framework. Tools that directly influence clinical diagnosis are classified as Class II or Class III medical devices requiring either 510(k) clearance or Premarket Approval (PMA). The FDA had approved 873 AI radiology tools by July 2025, the largest category, reflecting both market maturity and regulatory pathway clarity. Ensure any AI tool deployed holds the appropriate FDA classification for its intended clinical use.

The “Human-in-the-Loop” legal standard

In all current regulatory frameworks and professional consensus guidelines, from the FDA, the American College of Radiology (ACR), and European radiology bodies, the human clinician remains the accountable decision-maker. AI diagnostic tools are approved as decision support, not as autonomous diagnostic systems. A 2024 ESR survey found that 47.7% of radiologists believed patients would not trust a fully AI-generated report without physician sign-off, reinforcing that the liability and trust framework requires human accountability at every clinical decision point.

HIPAA and data governance

AI training and deployment require large-scale patient data. Every aspect of data collection, labelling, storage, and model training must comply with HIPAA Privacy and Security Rules, and with GDPR for European deployments. De-identification must be validated, not assumed. Business Associate Agreements (BAAs) must cover all AI vendors who process Protected Health Information (PHI). The proposed 2025 update to the HIPAA Security Rule would add requirements around multi-factor authentication and network segmentation that affect AI infrastructure.

Addressing algorithmic bias

AI diagnostic models trained on non-representative datasets encode the biases of those datasets. Models trained predominantly on imaging from one demographic group consistently perform less well on underrepresented groups, a well-documented risk in dermatology AI (darker skin tones underrepresented in training data) and cardiac AI (female presentation differences). The governance framework must include prospective bias testing on your local patient population before clinical activation, and ongoing monitoring of performance across demographic subgroups after deployment.
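Subgroup monitoring of this kind reduces to computing the same metric per demographic group and flagging gaps. The sketch below uses sensitivity as the metric; the group labels, the counts, and the 5-percentage-point tolerance are assumptions for illustration, not a recommended standard.

```python
from collections import defaultdict


def sensitivity_by_subgroup(records):
    """Per-group sensitivity from (subgroup, ai_flagged, confirmed) records."""
    tp = defaultdict(int)
    fn = defaultdict(int)
    for group, ai_flagged, confirmed in records:
        if confirmed:  # sensitivity only considers confirmed disease
            if ai_flagged:
                tp[group] += 1
            else:
                fn[group] += 1
    groups = set(tp) | set(fn)
    return {g: tp[g] / (tp[g] + fn[g]) for g in groups}


def flag_gaps(sensitivities, max_gap=0.05):
    """Groups whose sensitivity trails the best group by more than max_gap."""
    best = max(sensitivities.values())
    return sorted(g for g, s in sensitivities.items() if best - s > max_gap)


# Hypothetical confirmed-positive cases for two patient groups
records = (
    [("group_a", True, True)] * 9 + [("group_a", False, True)] * 1
    + [("group_b", True, True)] * 7 + [("group_b", False, True)] * 3
)
sens = sensitivity_by_subgroup(records)
print(sens, flag_gaps(sens))
```

Here the tool detects 90% of confirmed cases in one group and 70% in the other, so the second group is flagged. With subgroups this small the difference could be noise, so a real audit would need adequate sample sizes per group.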

What to Look for in a Healthcare AI Development Partner

The difference between a successful AI diagnostics implementation and a failed one is rarely the algorithm. It is the implementation partner’s depth in healthcare domain architecture, regulatory knowledge, and clinical workflow integration. These are the criteria that separate a genuinely capable partner from one who has rebranded a generic AI offering.

- FDA/CE regulatory pathway expertise: Has the partner previously navigated SaMD classification for a diagnostic AI tool? They should be able to map your specific use case to the correct regulatory pathway before a single line of code is written.

- Clinical workflow integration depth: PACS integration, DICOM compliance, EHR interoperability (HL7 FHIR), and RIS/LIS connectivity. These are not optional integration features; they are the technical preconditions for clinical utility.

- HIPAA-by-design architecture: data de-identification, BAA governance, audit trail infrastructure, and zero-trust access controls should be embedded in the system architecture, not bolted on after development.

- Phased validation model: shadow-mode deployment before clinical activation is not a sign of caution; it is the gold standard implementation approach. A partner proposing immediate clinical deployment without a shadow mode validation phase is presenting an unacceptable clinical risk model.

- Bias testing and performance monitoring: prospective bias testing on local population data and ongoing accuracy monitoring across demographic subgroups should be contractual deliverables, not optional add-ons.

- Clinical advisory involvement: the development team should include clinical informaticists, radiologists, or domain clinicians, not just AI engineers. The difference between a technically functional and a clinically useful system is often in the workflow and UX decisions that only clinical experience can inform.

At Webkorps, our healthcare AI team has built clinical decision support, diagnostic workflow integrations, and custom ML systems for healthcare clients across 30+ countries. Our 250+ developers include specialists in HIPAA-compliant cloud architecture, HL7 FHIR integration, medical imaging pipelines, and FDA SaMD regulatory frameworks. We work exclusively within a phased, clinically validated deployment model, because in healthcare, the stakes of getting implementation wrong are not measured in project budgets. They are measured in patient outcomes.

The Window Is Open. The Evidence Is There. The Question Is Execution.

Return to the opening scenario: the 58-year-old woman with dizziness who was sent home with vertigo and returned by ambulance three days later. AI stroke detection integrated into her emergency department would have flagged the red-flag symptom cluster before her first physician interaction ended. The imaging would have been ordered. The intervention would have been timely.

That scenario is not hypothetical anymore. It is a clinical reality in the health systems that have implemented AI diagnostic support. And the gap between those organisations and those still deferring implementation is widening. 795,000 patients are harmed annually. $100 billion is lost to diagnostic error costs. The misdiagnosis rate in high-risk conditions has barely moved in a generation despite every non-AI intervention applied.

Machine learning does not solve every diagnostic challenge. But in the highest-volume, highest-stakes clinical contexts (radiology, oncology, cardiology, emergency medicine, and primary care), it is demonstrably reducing misdiagnosis rates by 20–30% in controlled deployments. The technology is regulatory-approved, clinically validated, and increasingly straightforward to integrate with the right implementation partner. The question for healthcare leaders in 2025 is not whether AI diagnostics works. It is whether your patients will have access to it.

Ready to Bring AI-Powered Diagnostics to Your Organisation?

Book a free healthcare AI assessment. We’ll map your current diagnostic workflows, identify your highest-impact AI integration opportunities, and deliver a phased implementation roadmap, no commitment required.


FAQ

What is AI-powered diagnostics in healthcare?

AI-powered diagnostics refers to machine learning systems that analyse medical data (imaging, lab results, clinical notes, and patient history) to support or enhance diagnostic accuracy. Unlike traditional decision-support tools that follow fixed rules, AI systems learn from millions of cases to identify patterns that may not be visible to human clinicians. They operate across imaging (radiology, pathology), clinical data (ECG, EHR), and real-time decision support during patient encounters.

How does machine learning reduce misdiagnosis rates?

Machine learning reduces misdiagnosis by addressing the primary cause of diagnostic error: cognitive bias. AI systems don’t anchor on a first diagnosis, experience fatigue, or filter out contradicting evidence. They process the full clinical picture simultaneously (imaging, labs, history, and vitals) and generate a probability-ranked differential diagnosis. In high-volume specialties like radiology, AI consistency alone reduces the accuracy variance that causes misdiagnosis at scale.

How accurate is AI in medical diagnosis compared to human doctors?

In controlled settings, leading AI diagnostic models achieve benchmark accuracies of up to 94.5% (Frontiers Medicine, 2025). In specific domains, AI matches or exceeds specialists: melanoma detection AUC exceeding 0.94 vs. board-certified dermatologists; mammography AI achieving 90% sensitivity surpassing traditional radiologist rates; ECG AI detecting asymptomatic LV dysfunction with AUC 0.93 (Mayo Clinic). Important caveat: real-world deployment typically shows 15–30% performance drops vs. benchmarks, which is why shadow-mode validation before clinical activation is essential.

Which medical specialties benefit most from AI diagnostic tools?

The strongest clinical evidence is in: Radiology (873 FDA-approved AI tools by July 2025; 30–50% faster reporting, 95% CT nodule detection sensitivity); Oncology/Dermatology (melanoma AUC >0.94, AI pathology Gleason grading reducing inter-observer variability by 32%); Cardiology (AI-ECG arrhythmia detection matching electrophysiologist accuracy); Emergency Medicine (40% misdiagnosis reduction across 15 hospitals in Microsoft MAI-DxO trial); and Primary Care (34-day reduction in time-to-cancer-diagnosis in NHS trial of 600,000 records).

What is the real scale of the misdiagnosis problem that AI is addressing?

795,000 Americans suffer permanent disability or death from diagnostic errors annually (BMJ Quality and Safety, 2024). The US healthcare system loses over $100 billion per year to diagnostic error costs. Five diseases (stroke, sepsis, pneumonia, venous thromboembolism, and lung cancer) account for approximately 40% of all serious diagnostic harm. The baseline misdiagnosis rate across physician visits is 5–15%, depending on specialty. These numbers have not meaningfully improved in decades despite non-AI interventions.

Can AI diagnostic tools really reduce misdiagnosis by 30%?

Yes – in specific clinical contexts with proper implementation. A 15-hospital European study using Microsoft MAI-DxO achieved a 40% reduction in misdiagnosis across emergency departments. AI-assisted mammography improved cancer detection rates by 13% and reduced recall rates by 5.7% in a 55,000-patient Swedish trial (Lancet Oncology, 2024). The 30% figure reflects the range observed across multiple peer-reviewed clinical deployments. Performance is highly dependent on data quality, local validation, and the human oversight model.

How do hospitals implement AI diagnostics safely without patient risk?

The non-negotiable implementation model is phased deployment with shadow-mode validation.

- Phase 1: clinical and data readiness assessment (no patient impact).

- Phase 2: shadow-mode deployment. AI generates outputs that are reviewed by clinicians but do not yet influence decisions. This phase measures the performance gap between published benchmarks and real-world accuracy on your patient population.

- Phase 3: AI-assisted diagnosis with mandatory clinician sign-off.

- Phase 4: multi-modality expansion.

Never activate AI in decision-influencing mode without completing shadow-mode validation first.

Does AI replace radiologists and doctors in diagnosis?

No. Current FDA and professional frameworks treat AI diagnostic tools as clinical decision support operating under physician oversight, not as autonomous diagnostic systems. The radiologist or clinician remains the legally accountable decision-maker and must sign off on every clinical decision. The correct framing: AI handles pattern recognition at scale and with consistency; clinicians handle judgement, context, patient relationship, and accountability. Human-AI collaboration consistently outperforms either alone.

What are the biggest risks of deploying AI diagnostics, and how are they managed?

Three primary risks:

- Algorithmic bias: models trained on non-representative data underperform on underrepresented populations. Managed through prospective bias testing on local patient demographics before activation and ongoing subgroup monitoring.

- Performance degradation: real-world accuracy drops 15–30% vs. benchmarks due to population shift and workflow integration gaps. Managed through shadow-mode validation.

- Over-reliance: clinicians defaulting to AI outputs without applying clinical judgement. Managed through training, override rate monitoring, and governance protocols.

What ROI can a health system expect from AI diagnostic implementation?

ROI flows from three sources:

- Malpractice liability reduction: Diagnostic errors are the largest malpractice category; AI creates a documented due diligence trail and directly reduces error rates. Median claim payout: $200,000+.

- Operational throughput: AI reduces radiology reporting time by 30–50%, increasing scan capacity and revenue without adding headcount.

- Early detection economics: Stage I vs. Stage III cancer treatment cost differences are measured in multiples. McKinsey estimates AI in healthcare could save up to $360B annually in the US, driven primarily by diagnostic improvement.