An Indian healthtech startup is building an AI-powered telemedicine platform. Their core market is India, but two of their three largest hospital clients are processing data for patients in the United States through their telemedicine arms. Their AI diagnostic module is trained on a dataset that includes both Indian and American patient records.

Which compliance framework governs this company?

The answer: both. And the rules are not the same.

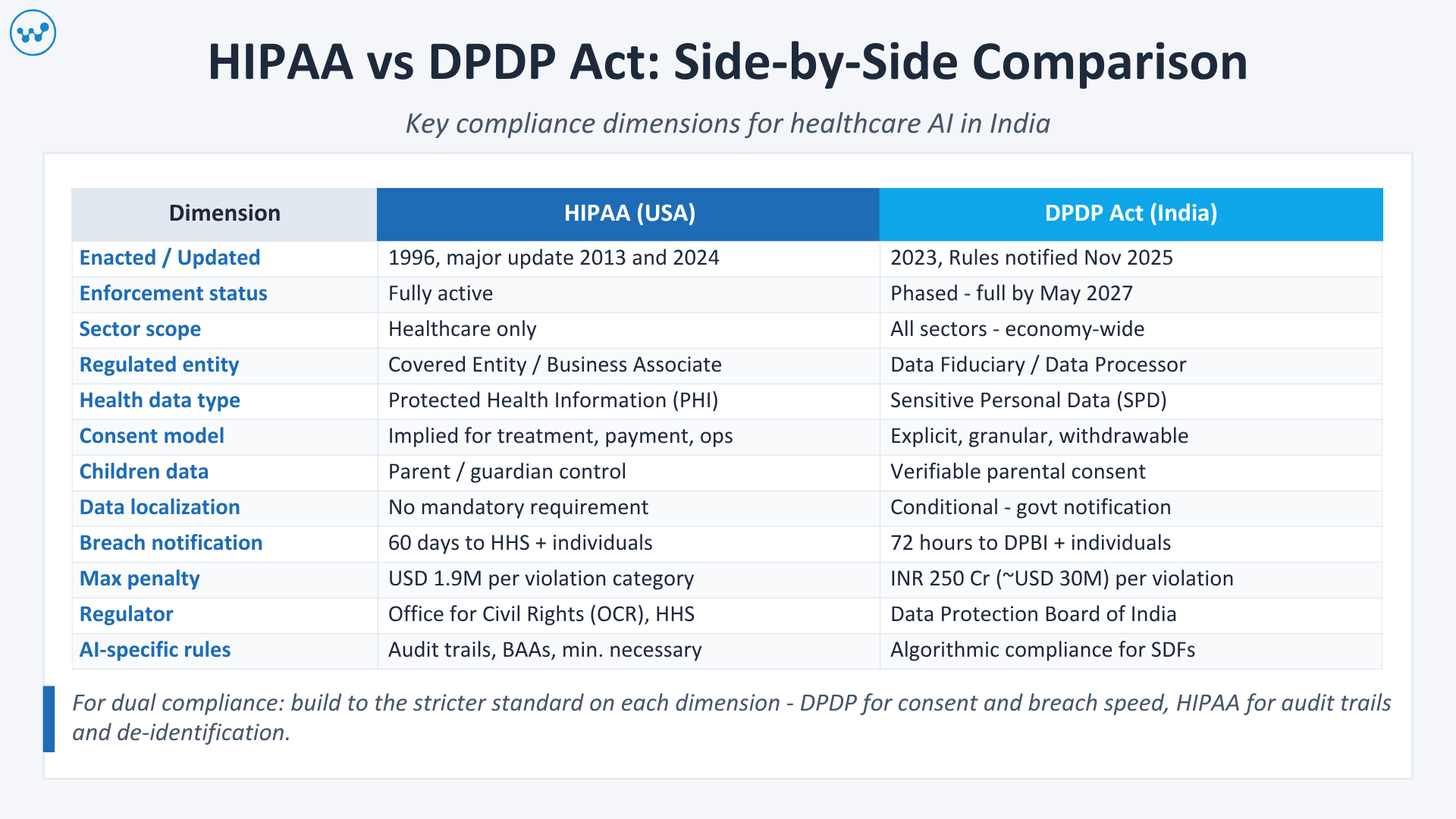

India’s Digital Personal Data Protection (DPDP) Act, 2023 – with its Rules notified in November 2025 and full enforcement expected by May 2027 – is now an active compliance regime. The United States’ Health Insurance Portability and Accountability Act (HIPAA), meanwhile, has been the global benchmark for healthcare data protection for over two decades and applies to any entity that handles Protected Health Information (PHI) for US patients, regardless of where in the world that entity is based.

For Indian healthcare AI companies, health IT developers, and global companies building for the Indian market, the need to understand both frameworks simultaneously has never been more urgent. The cost of getting it wrong is steep: HIPAA violations can cost up to USD 1.9 million per violation category per year, while DPDP Act non-compliance can attract fines of up to INR 250 crore (approximately USD 30 million).

This guide gives you a clear, side-by-side comparison of every dimension that matters: scope, definitions, consent requirements, data localization, breach notification, penalties, and AI-specific obligations. The aim is to help you build healthcare AI that is compliant in both jurisdictions without building two separate compliance architectures.

Quick Reference: HIPAA vs DPDP at a Glance

| Dimension | HIPAA (USA) | DPDP Act (India) |
| --- | --- | --- |
| Year enacted | 1996 (major updates 2013, 2024) | 2023 (Rules: November 2025) |
| Full enforcement | Active | Phased – full by May 2027 |
| Scope | Sector-specific (healthcare only) | Economy-wide (all sectors) |
| Regulated entity name | Covered Entity / Business Associate | Data Fiduciary / Data Processor |
| Health data classification | Protected Health Information (PHI) | Sensitive Personal Data (SPD) |
| Basis for processing | Specific permitted uses (treatment, payment, operations) | Consent or legitimate state use |
| Consent model | Implied for treatment purposes | Explicit, affirmative, granular |
| Data localization | No mandatory localization | Conditional – government notification-based |
| Breach notification deadline | 60 days to HHS; 60 days to individuals | 72 hours to the Data Protection Board |
| Maximum penalty | USD 1.9 million per violation category per year | INR 250 crore (~USD 30M) per violation |
| AI-specific rules | Audit trails, BAAs for AI vendors | Algorithmic compliance for Significant Data Fiduciaries |
| Regulatory body | Office for Civil Rights (OCR), HHS | Data Protection Board of India (DPBI) |
| Cross-border applicability | Extraterritorial – applies wherever US patient data is handled | Extraterritorial – applies to entities serving Indian users |

Who Must Comply With What And When

HIPAA: Who Is Covered

HIPAA applies to two categories of entities:

Covered Entities: Health plans, healthcare clearinghouses, and healthcare providers who electronically transmit health information. An Indian hospital, clinic, or health IT company that provides services to US-based covered entities usually falls under the next category rather than this one.

Business Associates: Any person or organization that creates, receives, maintains, or transmits Protected Health Information on behalf of a covered entity. This is the category that catches most Indian healthcare AI companies off guard. If your company:

- Provides telemedicine software used by US hospitals

- Develops AI diagnostic tools processing US patient data

- Handles medical billing or coding for US clients

- Provides cloud storage where US patient records are held

- Offers RPM (Remote Patient Monitoring) services that include US patients

Then your company is a Business Associate under HIPAA and must comply with its full requirements, including signing a Business Associate Agreement (BAA) with every covered entity you work with.

What counts as PHI in the context of AI? HIPAA’s Safe Harbor standard lists 18 types of identifier, and the resulting definition of PHI is broader than most AI developers assume. A combination of a ZIP code, date of birth, and a diagnosis code can be enough to constitute PHI even without a patient’s name. If your AI model was trained on or processes any such combination, you are handling PHI.

DPDP Act: Who Is Covered

The DPDP Act, 2023, with its Rules notified on November 14, 2025, applies to:

Data Fiduciaries: Any entity, individual, or company that determines the purpose and means of processing personal data. Most healthcare AI companies are Data Fiduciaries.

Data Processors: Any entity that processes personal data on behalf of a Data Fiduciary. Your cloud provider, your AI model vendor, and your data labeling company are typically Data Processors.

Extraterritorial Reach: Like HIPAA, the DPDP Act extends beyond India’s borders. Any company, regardless of its headquarters, that processes personal data in connection with offering goods or services to individuals in India must comply. This means a US healthtech company serving Indian patients through a telemedicine platform is subject to the DPDP Act requirements.

Significant Data Fiduciaries (SDFs): The government has the power to designate certain entities as Significant Data Fiduciaries based on the volume and sensitivity of data they process. SDFs face additional obligations, including appointing a Data Protection Officer based in India, conducting Data Protection Impact Assessments, and appointing an independent data auditor. Healthcare AI platforms processing large volumes of health data are prime candidates for SDF designation.

The Compliance Timeline You Cannot Ignore

As of March 2026, the DPDP Rules are active, and the 18-month compliance clock is running. Full enforcement is expected by May 13, 2027. This is not a soft deadline; the Data Protection Board of India is operational and building enforcement capacity. Healthcare companies must treat 2026 as the execution year.

Definitions – What Data Is Covered

The most consequential difference between HIPAA and the DPDP Act is how they define the health data they protect.

HIPAA: Protected Health Information (PHI)

PHI is any individually identifiable health information – in any format, including paper, electronic, and oral – held or transmitted by a covered entity or business associate.

HIPAA’s 18 PHI identifiers include names, geographic data smaller than state level, dates (birth, admission, discharge), phone numbers, email addresses, medical record numbers, health plan numbers, account numbers, certificate numbers, IP addresses, device identifiers, biometric identifiers (including voice prints), and photographs.

The critical AI implication: HIPAA’s “Safe Harbor” de-identification method requires the removal of all 18 identifiers before data can be used without PHI restrictions. The “Expert Determination” method allows a statistical expert to certify that re-identification risk is very small. Neither standard is easy to meet for AI model training datasets, and most teams discover compliance gaps only after training is complete.
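As a rough illustration of the Safe Harbor idea, the Python sketch below drops direct identifier fields from a record and coarsens dates and ZIP codes. The field names are hypothetical and cover only part of the 18-identifier list. Safe Harbor also attaches conditions (for example on small-population ZIP prefixes and ages over 89) that this sketch does not handle, so treat it as a starting point rather than a compliant pipeline.

```python
from datetime import date

# Hypothetical field names; map these to your own schema.
DIRECT_IDENTIFIER_FIELDS = {
    "name", "phone", "email", "mrn", "health_plan_id",
    "account_number", "ip_address", "device_id", "photo_url",
}


def strip_identifiers(record):
    """Drop direct identifiers, keep only the year of dates, truncate ZIP codes."""
    out = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIER_FIELDS}
    for key, value in list(out.items()):
        if isinstance(value, date):
            out[key] = value.year          # keep the year only
    if "zip" in out:
        out["zip"] = str(out["zip"])[:3]   # keep the first three digits only
    return out


record = {
    "name": "Example Patient",
    "zip": "46204",
    "dob": date(1980, 5, 17),
    "diagnosis_code": "E11.9",
}
cleaned = strip_identifiers(record)
```

A field-level filter like this cannot catch identifiers buried in free-text clinical notes, which is one reason teams often find gaps only after training.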

DPDP Act: Personal Data and Sensitive Personal Data

This guide refers to health data under the DPDP Act as Sensitive Personal Data (SPD). That label is shorthand: the term comes from the older SPDI Rules, 2011, and the DPDP Act, 2023 does not itself create a separate sensitive category. It applies one set of obligations to all digital personal data.

The Act defines personal data as any data about an individual who is identifiable by or in relation to such data. Unlike HIPAA’s 18-identifier Safe Harbor list, the DPDP Act uses a broader definitional approach: if data can directly or indirectly identify an individual, it is personal data.

Health information – including diagnosis records, prescription history, lab results, biometric measurements, and mental health data – therefore falls squarely within the Act, and its sensitivity raises the stakes of any breach or misuse.

Key difference for AI development: HIPAA has a defined de-identification pathway with legal safe harbors. The DPDP Act does not yet have equivalent de-identification standards for AI training. This creates a compliance gap: data that has been “HIPAA de-identified” may not meet DPDP Act standards, because the DPDP Act’s anonymization definition is stricter in some respects. For healthcare AI companies building training datasets, legal review of both standards is essential before training begins.

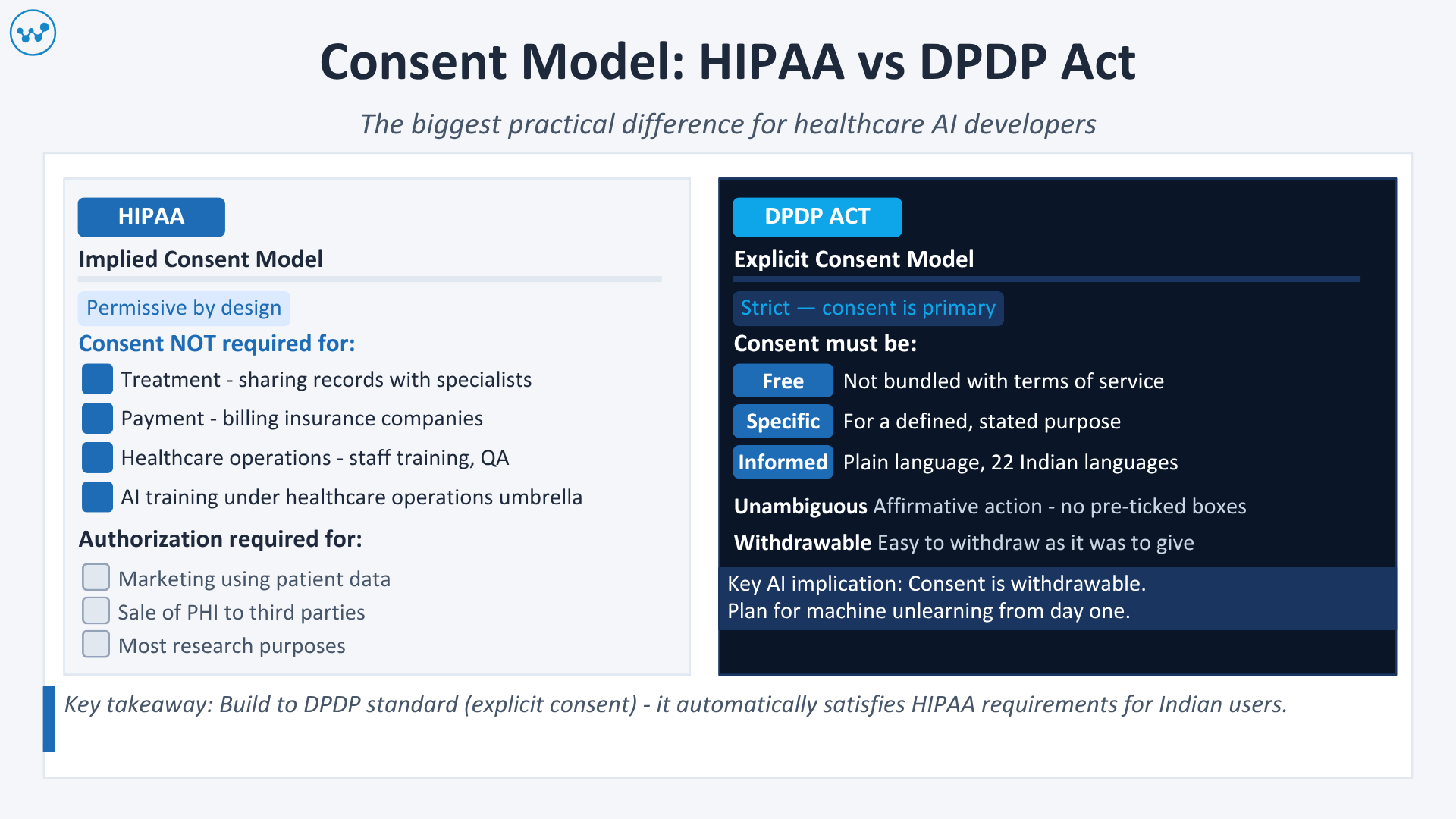

Consent – The Biggest Practical Difference

This is where the two frameworks diverge most sharply, and where most healthcare AI companies run into problems.

HIPAA: Implied Consent for Treatment Purposes

HIPAA’s consent model is permissive by design. It was built for a healthcare system where sharing patient data for treatment, payment, and healthcare operations is assumed to be in the patient’s interest.

Under HIPAA, covered entities can use and disclose PHI without explicit patient consent for:

- Treatment (sharing records with a specialist)

- Payment (billing an insurance company)

- Healthcare operations (quality improvement, staff training)

HIPAA requires patient authorization only for specific uses beyond these three – such as sharing data for marketing, selling PHI, or most research purposes.

AI implication: Under HIPAA, you can use a patient’s medical record to train a clinical quality improvement AI model without obtaining explicit patient consent, provided you meet the “minimum necessary” standard and the use qualifies as a healthcare operation. However, if you are licensing that AI model’s outputs to a third party, or if training data is shared with your AI vendor without a BAA, HIPAA is violated.

DPDP Act: Explicit, Granular, Withdrawable Consent

The DPDP Act operates on the opposite principle. Consent is the primary lawful basis for processing personal data, and that consent must be:

- Free – not bundled with terms of service or made a condition of service

- Specific – for a defined purpose

- Informed – clearly communicated in plain language in English or one of the 22 scheduled languages

- Unconditional – not coerced

- Unambiguous – an affirmative action, not a pre-checked box

Critically, consent under the DPDP Act is withdrawable at any time, and withdrawal must be as easy as the original consent.

AI implication: This creates a direct conflict with standard AI training workflows. If a patient withdraws consent, the DPDP Act requires deletion of their personal data. But if that data was already used to train a model, removing its influence from the trained model (sometimes called “machine unlearning”) is technically complex and computationally expensive. Healthcare AI companies operating under the DPDP Act must plan for this from the architecture stage – not as an afterthought.
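One way to plan for this at the architecture stage is to record consent per patient and per purpose, so that withdrawal is a single operation that downstream jobs can check before processing. The sketch below is a minimal in-memory version; `ConsentRecord`, `ConsentLedger`, and the purpose names are hypothetical, and a production system would also need persistence, audit logging, and a deletion workflow triggered by withdrawal.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRecord:
    patient_id: str
    purpose: str       # one record per specific purpose (granular consent)
    language: str      # language in which the notice was presented
    granted_at: datetime
    withdrawn_at: Optional[datetime] = None


class ConsentLedger:
    """In-memory ledger keyed by (patient_id, purpose)."""

    def __init__(self):
        self._records = {}

    def grant(self, patient_id, purpose, language):
        record = ConsentRecord(patient_id, purpose, language,
                               granted_at=datetime.now(timezone.utc))
        self._records[(patient_id, purpose)] = record
        return record

    def withdraw(self, patient_id, purpose):
        """Withdrawal is one call, mirroring the ease of granting."""
        record = self._records.get((patient_id, purpose))
        if record is None or record.withdrawn_at is not None:
            return False
        record.withdrawn_at = datetime.now(timezone.utc)
        return True

    def may_process(self, patient_id, purpose):
        record = self._records.get((patient_id, purpose))
        return record is not None and record.withdrawn_at is None


ledger = ConsentLedger()
ledger.grant("patient-1", "teleconsultation", "hi")
```

Keeping training as its own purpose (for example a separate `"model_training"` record) means a patient can consent to a consultation without consenting to their data being used in a training set.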

Consent for Children’s Health Data

Both frameworks have child data protections, but they work differently:

HIPAA: Minors’ health data is generally controlled by parents or guardians, with exceptions for certain sensitive conditions (mental health, reproductive health, substance abuse).

DPDP Act: Organizations must obtain verifiable parental consent before processing any personal data of a child (defined as anyone under 18). Processing that is likely to harm a child’s well-being is prohibited. Tracking, behavioral monitoring, and targeted advertising directed at children are also prohibited, subject only to the exemptions the government prescribes for specific classes of Data Fiduciaries and purposes.

For healthcare AI platforms that serve pediatric populations, the DPDP Act’s child data requirements are significantly more demanding than HIPAA’s.

Third-Party Vendors and AI APIs – The BAA vs Data Processing Agreement

HIPAA: Business Associate Agreements (BAA)

Every third-party vendor that touches PHI must sign a Business Associate Agreement with your organization. Under HIPAA, if your AI vendor processes patient data without a BAA, you are in violation – even if their platform is technically secure.

The AI vendor BAA crisis: Most generic AI APIs – including standard versions of OpenAI’s GPT API, Google’s Gemini API, and Anthropic’s Claude API – are not covered by default BAAs. Sending patient data to these APIs without a HIPAA-eligible enterprise agreement is a violation. Healthcare companies must either:

- Use HIPAA-eligible enterprise versions of these APIs (which require separate agreements and often higher pricing)

- De-identify all data before it touches any AI API not covered by a BAA

- Build or fine-tune their own models in environments where PHI does not leave their HIPAA-compliant infrastructure

Key BAA requirement for AI systems: Every entity in your AI supply chain – your model provider, your cloud inference provider, your data labeling company, your model monitoring tool – must have a signed BAA if it touches PHI. A single missing BAA is a compliance violation.

DPDP Act: Data Processing Agreements

The DPDP Act requires Data Fiduciaries to ensure that their Data Processors (vendors) process personal data only as instructed and implement appropriate security safeguards. This obligation must be formalized through a contractual agreement – analogous to a BAA under HIPAA.

The DPDP Act goes further in one important respect: Data Fiduciaries cannot contract out their liability. If your AI vendor mishandles patient data, you remain responsible to the Data Protection Board.

Practical implication for Indian healthcare AI companies: You need both. If you are handling US patient data through any US-facing service, you need BAAs. If you are handling Indian patient data, you need DPDP-compliant data processing agreements. When both apply simultaneously, the stricter obligation governs each specific element.

Data Localization – The Most Misunderstood Requirement

HIPAA: No Mandatory Localization

HIPAA imposes no geographic restrictions on where data is stored or processed, provided:

- The entity storing or processing the data has signed a BAA

- The data is encrypted at rest and in transit (AES-256 and TLS 1.2 or later are common choices; HIPAA itself does not name specific algorithms)

- The cloud infrastructure is HIPAA-eligible (AWS GovCloud, Azure Government, or equivalent)

This is why US healthcare companies can and do store patient data in data centers across multiple countries.

DPDP Act: Conditional Localization Framework

This is one of the most frequently misunderstood aspects of the DPDP Act.

The final DPDP Rules 2025 confirmed a blacklist-based approach – not a blanket data localization mandate. Under this framework:

- Cross-border transfers are allowed by default

- The government can issue notifications restricting transfers to specific countries that it deems inadequate for data protection

- No countries have been blacklisted as of March 2026

However, the Act grants the government broad power to mandate data localization for specific categories of data or for Significant Data Fiduciaries. The rules leave room for sector-specific localization requirements to be introduced, and healthcare data, given its sensitivity, is a likely target.

What this means for healthcare AI right now: There is no current data localization mandate for most healthcare data. But organizations should architect their systems with localization flexibility, meaning the ability to switch patient data storage to an India-based infrastructure without rebuilding their entire data pipeline. AWS, Azure, and Google Cloud all have India-based data centers; using them now is lower cost and lower risk than retrofitting later.

Special consideration for ABDM-integrated systems: If your platform integrates with the Ayushman Bharat Digital Mission (ABDM) or handles data from the Ayushman Bharat Health Account (ABHA), additional data governance requirements apply under the ABDM’s Health Data Management Policy. This policy requires health data to be stored within India and processed under consent obtained through the ABHA framework.

Breach Notification – Speed and Scope

HIPAA: 60-Day Notification Window

HIPAA’s Breach Notification Rule requires:

- Notification to affected individuals within 60 days of discovery

- Notification to the Department of Health and Human Services (HHS) within 60 days (or annual reporting for breaches affecting fewer than 500 individuals)

- Notification to prominent media outlets for breaches affecting more than 500 residents of a state or jurisdiction

- Documentation of all breaches, including those deemed not reportable

The 60-day window is the longest of any major global privacy regulation – and even so, many organizations struggle to meet it.

DPDP Act: 72-Hour Notification Requirement

The DPDP Act’s breach notification requirement is significantly more demanding. Data Fiduciaries must notify both the Data Protection Board of India and affected Data Principals (patients) within 72 hours of becoming aware of a personal data breach.

This 72-hour window matches GDPR’s requirement and is one of the strictest in the world. For healthcare AI organizations, this means breach detection and response infrastructure must be built to notify in under three days – not sixty.

What constitutes a breach under each framework:

| Scenario | HIPAA | DPDP Act |
| --- | --- | --- |
| AI model outputs PHI/SPD inadvertently | Breach – notify | Breach – notify within 72 hours |
| Unauthorized access by internal staff | Breach – investigate and likely notify | Breach – notify within 72 hours |
| Third-party vendor without BAA/DPA processes data | HIPAA violation (not a breach per se) | Non-compliance; notify if data was accessed |
| Encrypted device lost with no access | Not a breach if encryption meets standards | Assess risk; may still require notification |
| Prompt injection attack exposing PHI via an AI chatbot | Breach | Breach – notify within 72 hours |

Healthcare AI companies should assume that their 72-hour DPDP obligation is the binding constraint and build breach response workflows accordingly.
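The arithmetic behind that advice is simple enough to encode directly in an incident tool. The sketch below computes the notification deadline under each framework from the moment of discovery and returns the earliest one. The function names and the `"US"` / `"IN"` jurisdiction codes are assumptions made for illustration, and the windows are the 72-hour and 60-day figures quoted in this guide.

```python
from datetime import datetime, timedelta, timezone

DPDP_WINDOW = timedelta(hours=72)   # per the DPDP figure used in this guide
HIPAA_WINDOW = timedelta(days=60)   # outer limit under HIPAA


def notification_deadlines(discovered_at, jurisdictions):
    """Map each applicable framework to its notification deadline."""
    deadlines = {}
    if "IN" in jurisdictions:
        deadlines["DPDP"] = discovered_at + DPDP_WINDOW
    if "US" in jurisdictions:
        deadlines["HIPAA"] = discovered_at + HIPAA_WINDOW
    return deadlines


def binding_deadline(discovered_at, jurisdictions):
    """Earliest deadline across all frameworks that apply to the incident."""
    deadlines = notification_deadlines(discovered_at, jurisdictions)
    if not deadlines:
        raise ValueError("no known jurisdiction attached to this incident")
    return min(deadlines.values())


discovered = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
deadlines = notification_deadlines(discovered, {"US", "IN"})
earliest = binding_deadline(discovered, {"US", "IN"})
```

For an incident touching both jurisdictions, `earliest` is always the DPDP deadline, which is why the response workflow should be sized for three days.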

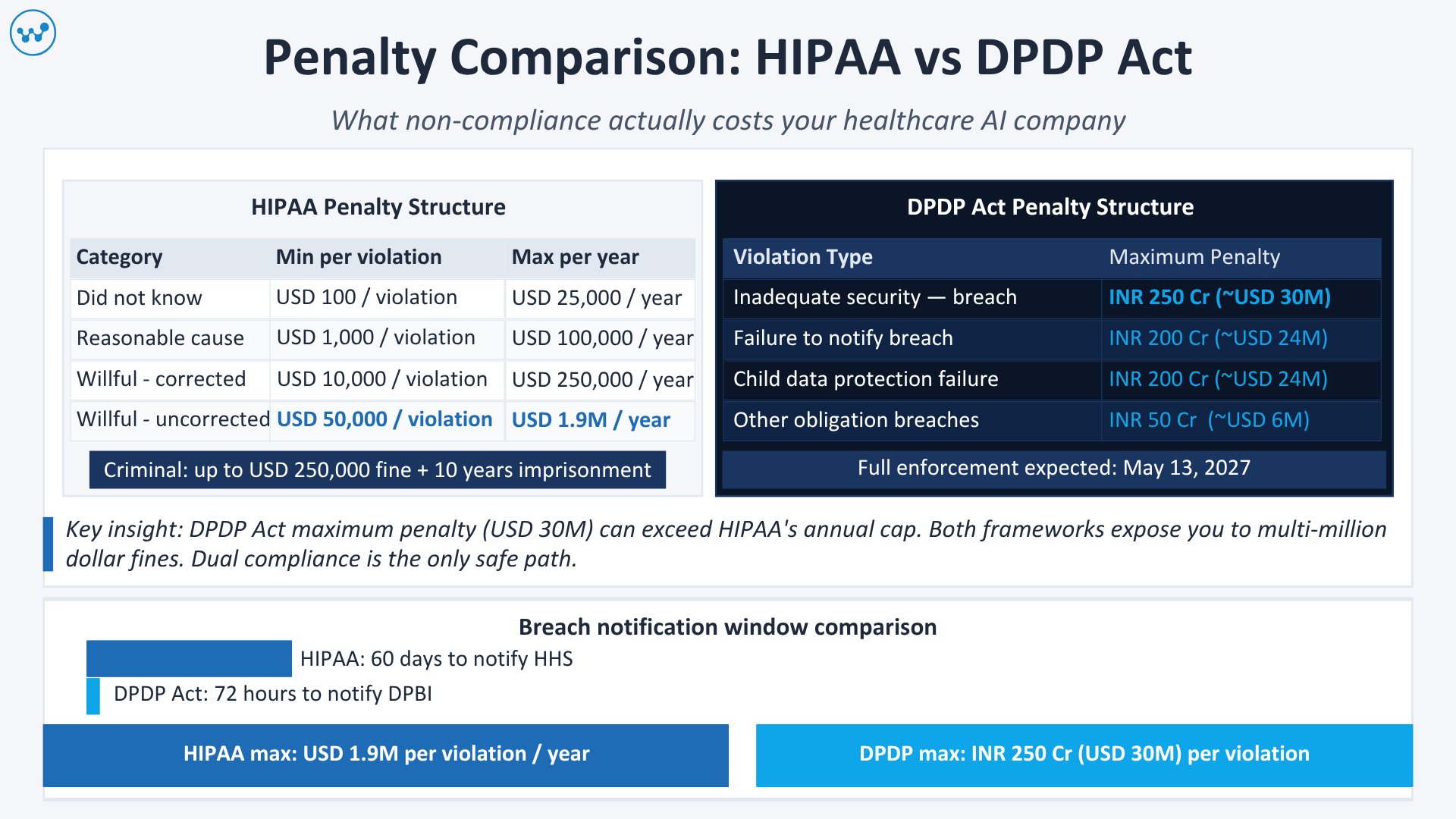

Penalties – What Non-Compliance Actually Costs

HIPAA Penalty Structure (2024 Tiered Framework)

| Violation Category | Minimum Penalty | Maximum Per Year |
| --- | --- | --- |
| Did not know about the violation | USD 100 per violation | USD 25,000 |
| Reasonable cause | USD 1,000 per violation | USD 100,000 |
| Willful neglect (corrected) | USD 10,000 per violation | USD 250,000 |
| Willful neglect (not corrected) | USD 50,000 per violation | USD 1.9 million |

Separately, the Department of Justice can pursue criminal prosecution for knowing violations, with fines up to USD 250,000 and imprisonment up to 10 years.

AI-specific enforcement trend (2024-2025): OCR has shown a clear pattern of prioritizing enforcement against organizations that use AI systems without proper BAAs, particularly those using third-party AI APIs to process PHI. The 2024 case of a mid-sized hospital network paying USD 4.75 million for using an AI vendor without a BAA is now cited in virtually every healthcare compliance training.

DPDP Act Penalty Structure

| Violation | Maximum Penalty |
| --- | --- |
| Inadequate security safeguards leading to a breach | INR 250 crore (~USD 30 million) |
| Failure to notify the breach to the Board or Data Principals | INR 200 crore (~USD 24 million) |
| Non-compliance with child data protection provisions | INR 200 crore (~USD 24 million) |
| Non-compliance with other obligations | INR 50 crore (~USD 6 million) per violation |

DPDP Act enforcement timeline: As of March 2026, the Data Protection Board is operationalizing its infrastructure. Enforcement has not yet commenced at scale, but the Board is building capacity. The compliance window until May 2027 is not a grace period – organizations should use this time to build compliant architecture, not to delay.

AI-Specific Obligations Under Each Framework

This is the section that most compliance guides miss entirely. Both HIPAA and the DPDP Act have specific implications for AI systems that go beyond general data protection requirements.

AI Under HIPAA: The Four Non-Negotiables

- Audit trails for every AI action: HIPAA’s Security Rule requires covered entities to maintain audit controls that record and examine activity in systems containing ePHI. For AI systems, this means logging every inference, every data access, every model update, and every API call that touches PHI. These logs must be tamper-evident and retained for at least six years.

- Minimum necessary standard applied to AI inputs: An AI model should only receive the minimum PHI necessary to perform its clinical function. If your symptom triage AI only needs age, presenting complaint, and vital signs, feeding it full medical records is a HIPAA violation.

- De-identification before AI training: Training data used for AI model development must either meet HIPAA’s Safe Harbor or Expert Determination de-identification standards, or be covered by explicit patient authorization.

- BAA for every AI vendor in the chain: Your AI model provider, inference API, vector database, monitoring tool, and any other system that touches PHI must have a signed BAA.
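To make "tamper-evident" concrete, one common technique is a hash chain: each log entry stores the hash of the previous entry, so editing or deleting an earlier entry breaks every later link. The sketch below is an assumption about how such a log could look, not a mechanism HIPAA prescribes. `AuditLog` and its field names are hypothetical, and a real deployment would also need durable, access-controlled storage and six-year retention.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


class AuditLog:
    """Append-only log in which each entry commits to the previous one."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the stored hash itself.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, actor, action, resource):
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,        # e.g. "inference", "model_update"
            "resource": resource,
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry was altered, removed, or reordered."""
        prev = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = AuditLog()
log.append("triage-model-v3", "inference", "encounter/123")
log.append("dr.rao", "record_access", "patient/456")
```

Calling `log.verify()` on a schedule, and anchoring the latest hash somewhere the application cannot write to, is what turns the chain from bookkeeping into evidence.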

AI Under DPDP Act: Emerging Obligations

The DPDP Act does not have AI-specific provisions equivalent to HIPAA’s detailed Security Rule. However, the Rules 2025 introduce meaningful AI governance obligations, particularly for Significant Data Fiduciaries:

Algorithmic compliance: SDFs must ensure that any algorithmic software used for data processing respects the rights of Data Principals. In practice, this means:

- AI systems must not make decisions that infringe on patient rights without human oversight

- AI-driven denial of health services or insurance requires explainability and appeal mechanisms

- Patients must be able to correct inaccurate data that feeds into AI decisions affecting them

Consent for automated decision-making: Unlike GDPR (which has explicit Article 22 protections against automated decisions), the DPDP Act does not directly prohibit automated decision-making. However, the consent framework means patients must have consented to automated processing, and must be able to withdraw that consent.

Right to erasure and AI unlearning: If a patient withdraws consent, their personal data must be erased. For AI systems trained on that data, organizations must have a mechanism to handle erasure requests – even for data already used in model training. This is technically challenging and must be planned for in advance.
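Planning in advance starts with knowing which models a given patient’s data went into. The sketch below keeps that lineage so an erasure request can return the list of affected model versions for retraining or unlearning review. It does not perform unlearning itself; `TrainingLineage` and its methods are hypothetical names, and the patient IDs are assumed to be pseudonymous keys.

```python
from collections import defaultdict


class TrainingLineage:
    """Tracks which model versions were trained on which patients' data."""

    def __init__(self):
        self._models_by_patient = defaultdict(set)

    def record_training(self, model_version, patient_ids):
        for patient_id in patient_ids:
            self._models_by_patient[patient_id].add(model_version)

    def handle_erasure(self, patient_id):
        """Forget the patient and return the model versions needing review."""
        affected = self._models_by_patient.pop(patient_id, set())
        return sorted(affected)


lineage = TrainingLineage()
lineage.record_training("triage-v1", ["p1", "p2"])
lineage.record_training("triage-v2", ["p2"])
affected = lineage.handle_erasure("p2")
```

What happens to each affected model (scheduled retraining without the record, an approximate unlearning method, or retirement) is a policy decision that should be agreed with legal counsel before the first request arrives.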

The Dual Compliance Architecture – How to Satisfy Both Simultaneously

Here is the practical framework for Indian healthcare AI companies that must comply with both HIPAA and the DPDP Act. The goal is a single architecture that satisfies both without building two separate systems.

Foundational Principle: Build to the Stricter Standard

For every compliance dimension, identify the stricter requirement and build to that standard:

| Dimension | Stricter Standard | Rationale |
| --- | --- | --- |
| Consent | DPDP Act (explicit, granular) | HIPAA’s implied consent model does not satisfy the DPDP Act requirements |
| Breach notification | DPDP Act (72 hours) | HIPAA’s 60-day window is more permissive |
| Audit logging | HIPAA (6-year retention, detailed) | DPDP Act does not specify retention periods for audit logs |
| Vendor agreements | Both required | BAA for US-related data; DPA for India-related data |
| Child data | DPDP Act (verifiable consent, broader restrictions) | DPDP Act is stricter for most pediatric health scenarios |
| AI transparency | DPDP Act (SDF obligations) | HIPAA has no equivalent algorithmic explainability requirement |

The Seven-Layer Dual Compliance Stack

Layer 1: Consent Infrastructure

Build a consent management platform that captures explicit, granular, withdrawable consent in English and the 22 scheduled Indian languages (DPDP) while also tracking patient authorizations required under HIPAA. These can be unified in a single system with jurisdiction flags.

Layer 2: Data Classification and Tagging

Every data element must be tagged with its jurisdiction (US-origin PHI, India-origin SPD, or both), sensitivity level, consent status, and retention period. This metadata layer is what makes dual compliance manageable at scale.
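A minimal sketch of such a metadata layer might look like the following. The `DataTag` fields mirror the four attributes named above; the class, enum, and function names are hypothetical, and the sensitivity labels are placeholders for whatever scheme your organization uses.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Jurisdiction(Enum):
    US = "US"
    IN = "IN"


@dataclass(frozen=True)
class DataTag:
    jurisdictions: frozenset   # one or both Jurisdiction values
    sensitivity: str           # placeholder label, e.g. "health"
    consent_active: bool
    retain_until: date


def frameworks_for(tag):
    """List the frameworks that apply to a tagged data element."""
    frameworks = []
    if Jurisdiction.US in tag.jurisdictions:
        frameworks.append("HIPAA")
    if Jurisdiction.IN in tag.jurisdictions:
        frameworks.append("DPDP")
    return frameworks


def is_past_retention(tag, today):
    """True once the retention period attached to the element has lapsed."""
    return today > tag.retain_until


tag = DataTag(
    jurisdictions=frozenset({Jurisdiction.US, Jurisdiction.IN}),
    sensitivity="health",
    consent_active=True,
    retain_until=date(2032, 1, 1),
)
```

Making the tag immutable (`frozen=True`) forces every change of consent status or jurisdiction to create a new tag, which is easier to audit than in-place edits.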

Layer 3: De-identification Pipeline

Build a dual-standard de-identification pipeline that satisfies both HIPAA’s 18-identifier Safe Harbor method and DPDP Act anonymization requirements. For AI training datasets, run data through both standards before model training begins.

Layer 4: Vendor Compliance Matrix

Maintain a compliance matrix for every vendor in your AI supply chain, tracking whether they have signed BAAs (for HIPAA) and DPAs (for DPDP). No vendor should touch patient data without both agreements in place if your platform handles both US and Indian patients.
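The matrix can start as something as small as the sketch below, which flags every vendor that lacks an agreement required by the data it would touch. The `Vendor` class and the vendor names are invented for illustration; in practice each entry would also carry the agreement’s date, scope, and renewal status.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    name: str
    has_baa: bool   # signed Business Associate Agreement (HIPAA)
    has_dpa: bool   # signed data processing agreement (DPDP)


def missing_agreements(vendor, handles_us_data, handles_india_data):
    """Return the agreements this vendor still needs for the given data."""
    missing = []
    if handles_us_data and not vendor.has_baa:
        missing.append("BAA")
    if handles_india_data and not vendor.has_dpa:
        missing.append("DPA")
    return missing


def blocked_vendors(vendors, handles_us_data=True, handles_india_data=True):
    """Map each non-compliant vendor name to its missing agreements."""
    report = {}
    for vendor in vendors:
        gaps = missing_agreements(vendor, handles_us_data, handles_india_data)
        if gaps:
            report[vendor.name] = gaps
    return report


# Hypothetical vendors, for illustration only.
vendors = [
    Vendor("inference-api", has_baa=True, has_dpa=True),
    Vendor("labeling-shop", has_baa=False, has_dpa=True),
    Vendor("monitoring-tool", has_baa=False, has_dpa=False),
]
report = blocked_vendors(vendors)
```

Running a check like this in the deployment pipeline turns the "no vendor without both agreements" rule into a gate rather than a reminder.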

Layer 5: Infrastructure

- For US patient data: Use HIPAA-eligible cloud infrastructure (AWS GovCloud, Azure Government, or equivalent)

- For Indian patient data: Use India-based data centers (AWS Mumbai, Azure India Central, GCP Mumbai) with DPDP-compliant configurations

- For combined workloads: Implement data residency controls that route data to the correct regional infrastructure based on jurisdiction tags
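A residency control of this kind can be reduced to a lookup from jurisdiction tag to region that fails closed when a tag is missing or unknown. The region labels below are hypothetical placeholders, not real cloud region identifiers, and how to store a record tagged with both jurisdictions is a policy decision the sketch leaves to the caller by returning every applicable region.

```python
# Hypothetical region labels; substitute the regions you actually deploy to.
REGION_BY_JURISDICTION = {
    "US": "us-hipaa-eligible",
    "IN": "in-mumbai",
}


def storage_regions(jurisdiction_tags):
    """Return the regions a record may live in, failing closed on bad tags."""
    tags = set(jurisdiction_tags)
    if not tags:
        raise ValueError("record has no jurisdiction tag")
    unknown = tags - set(REGION_BY_JURISDICTION)
    if unknown:
        raise ValueError(f"no region configured for: {sorted(unknown)}")
    return sorted(REGION_BY_JURISDICTION[tag] for tag in tags)


india_regions = storage_regions({"IN"})
dual_regions = storage_regions(["US", "IN"])
```

Raising an error for untagged records, rather than falling back to a default region, keeps a tagging bug from silently sending patient data to the wrong infrastructure.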

Layer 6: Breach Response

Build your breach response workflow to the 72-hour DPDP standard. A workflow that notifies within 72 hours also meets HIPAA’s 60-day deadline, although the notice content each regulator requires still has to be prepared separately. Your breach response plan must cover: detection, assessment, notification drafting, regulator notification, and patient/individual notification.

Layer 7: Audit and Documentation

Maintain separate documentation trails for each regulatory framework:

- HIPAA: Risk assessments, BAAs, training records, audit logs (6-year retention)

- DPDP Act: Consent records, processing logs, breach notifications, DPAs, DPIA reports (for SDFs)

India-Specific Context – What Global Guides Miss

Most HIPAA vs DPDP comparisons are written from a global compliance perspective and miss the nuances that matter specifically to Indian healthcare AI companies.

The ABDM Intersection

India’s Ayushman Bharat Digital Mission creates a third layer of compliance for healthcare AI platforms that integrate with India’s national digital health infrastructure. The ABDM’s Health Data Management Policy (HDMP) requires:

- Health data generated under ABDM must be stored within India

- Patient consent for ABDM data must be obtained through the ABHA consent framework

- Health data cannot be used for commercial purposes without explicit patient consent

If your platform connects to ABHA (Ayushman Bharat Health Account) IDs or exchanges data through the Health Information Exchange under ABDM, HDMP compliance is mandatory alongside DPDP Act compliance.

The IT Act Legacy Layer

The Information Technology (Reasonable Security Practices and Procedures and Sensitive Personal Data or Information) Rules, 2011 (the SPDI Rules) remain in force for the 18 months that follow the DPDP Rules’ notification, until the DPDP Act supersedes them. Healthcare companies must currently comply with both the SPDI Rules and the DPDP Act, and transition their compliance programs accordingly.

DISHA: The Healthcare-Specific Framework on the Horizon

India’s proposed Digital Information Security in Healthcare Act (DISHA), while still in draft form, is expected to become sector-specific healthcare data legislation analogous to HIPAA. DISHA will likely impose HIPAA-like requirements specifically for health data, which the DPDP Act, as a general data protection law, does not fully address.

Healthcare AI companies should architect their compliance programs with DISHA in mind. Building HIPAA-grade controls now – including PHI-specific audit trails, BAA-equivalent agreements for health data processors, and de-identification standards – positions you for DISHA compliance without a rebuild.

HIPAA vs DPDP Act: Compliance Checklist for Healthcare AI Companies in India

Before Development Starts

- Map all data flows – identify which data is US-origin PHI, which is India-origin SPD, and which is both

- Classify your company’s role: Covered Entity/Business Associate (HIPAA) and Data Fiduciary/Data Processor (DPDP)

- Assess whether you will be designated a Significant Data Fiduciary under DPDP

- Execute BAAs with all US-facing AI vendors

- Execute DPAs with all India-facing data processors

- Design your consent architecture to meet DPDP’s explicit, granular standard

During Development

- Implement dual-standard de-identification for all training datasets

- Build jurisdiction tagging into your data pipeline from day one

- Configure regional data routing – US patient data to HIPAA-eligible infrastructure, Indian patient data to India-based data centers

- Implement audit logging meeting HIPAA’s 6-year retention standard

- Build consent withdrawal and data erasure workflows before launch

- Document your minimum necessary analysis for every AI model input

Before Launch

- Conduct HIPAA Security Risk Assessment

- If applicable, conduct Data Protection Impact Assessment (DPIA) under DPDP

- Execute penetration testing by a qualified healthcare security firm

- Verify all BAAs and DPAs are current and cover your actual data flows

- Test your 72-hour breach notification workflow

- Train your team on both HIPAA and DPDP obligations

Post-Launch and Ongoing

- Monitor DPDP enforcement notifications – particularly any data localization mandates

- Monitor OCR enforcement actions – AI-specific enforcement is intensifying

- Update BAAs and DPAs when adding new AI vendors

- Conduct annual HIPAA risk assessments

- Conduct DPDP compliance audits as DPIA requirements come into force

- Track DISHA developments – prepare for sector-specific healthcare data legislation

Conclusion

The question is not which framework is harder. It is whether you are building healthcare AI that will still be running in 2028, when both HIPAA enforcement and DPDP Act enforcement are in full swing.

The good news: dual compliance is achievable without doubling your compliance costs, if you build the right architecture from the start. The dual compliance stack described in this guide is not two systems – it is one system with jurisdiction awareness built in.

The two frameworks have more in common than they differ. Both require strong data security. Both require vendor accountability through written agreements. Both require breach notification. Both require purpose limitation. Building to the stricter standard on each dimension – DPDP for consent and breach speed, HIPAA for audit trails and de-identification – gives you a platform that satisfies both without architectural compromise.

For Indian healthcare AI companies with global ambitions, dual HIPAA-DPDP compliance is not a compliance burden. It is a competitive advantage. Hospitals and health systems in the US, the UAE, and Southeast Asia increasingly require their AI vendors to demonstrate HIPAA compliance as a baseline. Adding DPDP compliance positions you as a company that takes data governance seriously – and in healthcare, that trust is the product.

If you are building a healthcare AI platform that must comply with both HIPAA and the DPDP Act, Webkorps can help. Our team has deep experience in healthcare compliance architecture – we build compliance in from day one, not as a retrofit. Get in touch for a free compliance scoping consultation.

Frequently Asked Questions

If I am only serving Indian patients and have no US clients, do I need to worry about HIPAA?

If your platform has no US patient data and no contractual relationships with US covered entities, HIPAA does not apply. However, as soon as you take on a client that serves US patients – even partially – you become a Business Associate under HIPAA for that relationship and must comply with HIPAA for the data you handle on their behalf.

Does de-identifying data under HIPAA’s Safe Harbor method automatically satisfy DPDP Act anonymization requirements?

Not necessarily. HIPAA’s Safe Harbor de-identification removes 18 specific identifiers. The DPDP Act uses a broader definition of identifiability – if data can directly or indirectly identify an individual, it is personal data. In practice, data that passes HIPAA Safe Harbor may still be considered personal data under the DPDP Act if contextual re-identification is possible. Always have both standards reviewed by legal counsel familiar with both frameworks.

The DPDP Act requires explicit consent, but HIPAA allows implied consent for treatment purposes. How do I reconcile this for a telemedicine platform serving both US and Indian patients?

Operate at the DPDP Act’s standard for all users. Explicit, granular consent satisfies both frameworks. It is more demanding operationally, but building to HIPAA’s implied consent standard would leave you non-compliant with DPDP for Indian users.

Is there currently a data localization requirement for health data under the DPDP Act?

No blanket localization requirement exists as of March 2026. However, ABDM-integrated health data must be stored in India under the Health Data Management Policy. For platforms not integrated with ABDM, cross-border transfers are currently permitted by default. Monitor government notifications closely – localization requirements can be introduced at any time through notification.

Can I use the same AI model for both US and Indian patients, or do I need separate models?

You can use the same AI model, provided:

- The training data was properly de-identified under both frameworks.

- Your inference pipeline routes each request through the appropriate regional infrastructure.

- Audit logging captures jurisdiction-specific metadata.

- Your consent architecture distinguishes between US-applicable authorization and DPDP-compliant consent.

What happens if the DPDP Act introduces mandatory data localization for health data after I have built my infrastructure on a US-based cloud?

This is exactly the risk that makes proactive architecture planning essential. Organizations that use India-based data centers for Indian patient data now – even without a localization mandate – face zero migration risk if localization is introduced. Organizations using US-based infrastructure for Indian data face a potentially expensive and disruptive migration.