The key to AI success lies not in the technology itself, but in the systematic approach to implementation that addresses business needs, organizational readiness, and human factors equally.

AI implementation reshapes today’s enterprises, yet most organizations struggle to succeed with it. Business data shows that while 80% of enterprises pursue AI initiatives, only 30% manage to deploy AI at scale and see measurable business results.

The push toward AI keeps gaining momentum despite these hurdles. McKinsey’s 2024 survey reveals that 65% of organizations now use generative AI regularly. This rapid adoption creates an urgent need for a well-structured AI strategy roadmap. Gartner’s research indicates that companies achieve much better results when they use structured AI implementation methods instead of treating AI like regular software development.

Business success with AI needs more than just the right technology. Though 80% of companies now use generative AI, 90% of IT experts need better visibility into their organization’s AI usage. The real problem shows in the numbers – just 14% have the oversight they need. This lack of control brings major risks, especially since 75% of IT security teams report their companies face serious threats from AI-powered attacks.

This piece will help you navigate AI implementation with confidence. Our 12 steps offer a practical framework, from defining clear objectives to measuring ROI, that leads to enterprise AI success.

Define Clear Business Objectives

Clear business objectives are the foundation of any successful AI implementation. A shocking 95% of AI efforts fail because organizations don’t have well-defined goals. Companies that succeed with AI-powered LMS follow a simple rule: they set precise, measurable targets before picking any technology.

Business objectives for AI implementation

Your organization needs to spot specific problems or opportunities that AI can solve. Ask yourself:

- What inefficiencies need solving?

- How can AI improve customer experiences?

- Which decision-making processes could benefit from automation?

These challenges should become concrete objectives, like boosting operational efficiency by a set percentage or cutting customer service response times. Gartner suggests using “the AI adoption curve to identify and achieve your goals for activities that increase AI value creation by solving business problems better, faster, at lower cost and with greater convenience”.

Aligning AI with enterprise goals

Your AI initiatives must match your high-level business priorities to succeed. This alignment gives AI solutions a clear path to advance your strategy, rather than adding technology without purpose.

Good KPIs help track progress and show value clearly. The best KPIs for AI should be specific, measurable, tied to business goals, and time-bound. Companies that set careful AI performance metrics are 1.7 times more likely to reach their AI goals.

Avoiding tech-first pitfalls

Many companies make a crucial mistake: they let technology drive decisions instead of business needs. Leaders who put business first tend to succeed, while those focused on tech often fail. The successful 5% of organizations focus on outcomes, experiences, and the human systems that technology must serve.

So, pick your first project with care, since most AI projects don’t launch as planned. Start small with three to six use cases that show measurable benefits for specific business areas. Companies that skip this step “automate dysfunction instead of improving it”.

Your AI strategy roadmap should balance quick wins with bigger bets. Move beyond scattered projects to create an all-encompassing approach that tackles critical business problems.

Assess Organizational Readiness

Image Source: Ali Arsanjani – Medium

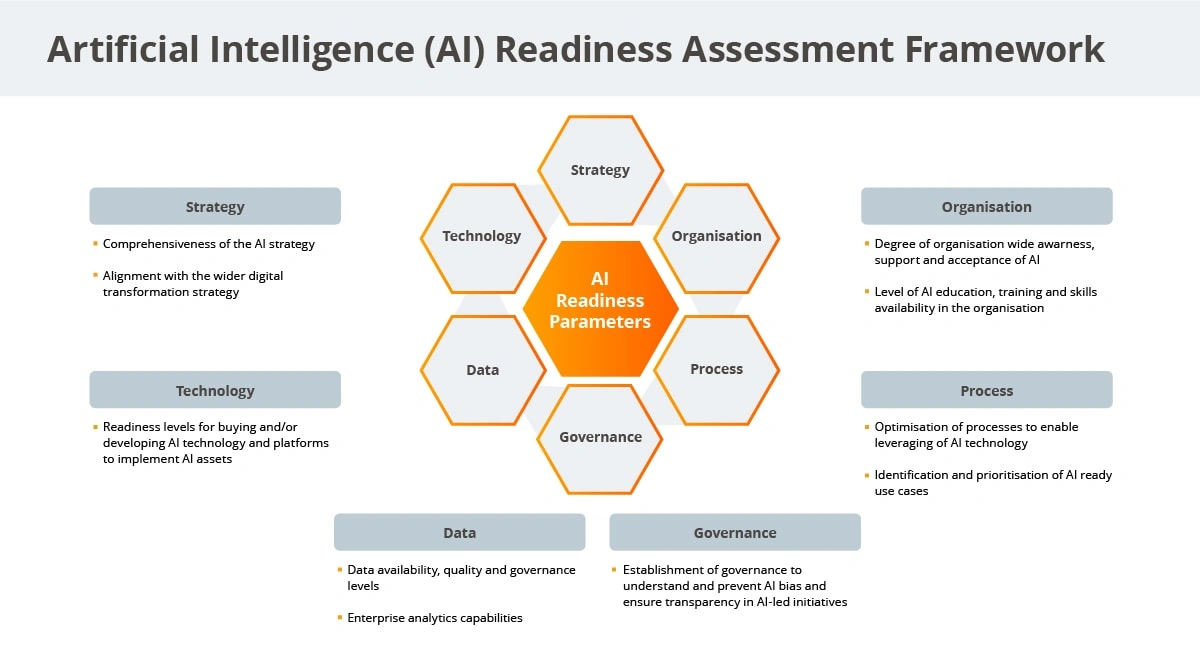

Your organization needs clear business goals before you can assess whether it can deliver on AI ambitions. A full picture creates common ground about your current position and what you need to close any gaps.

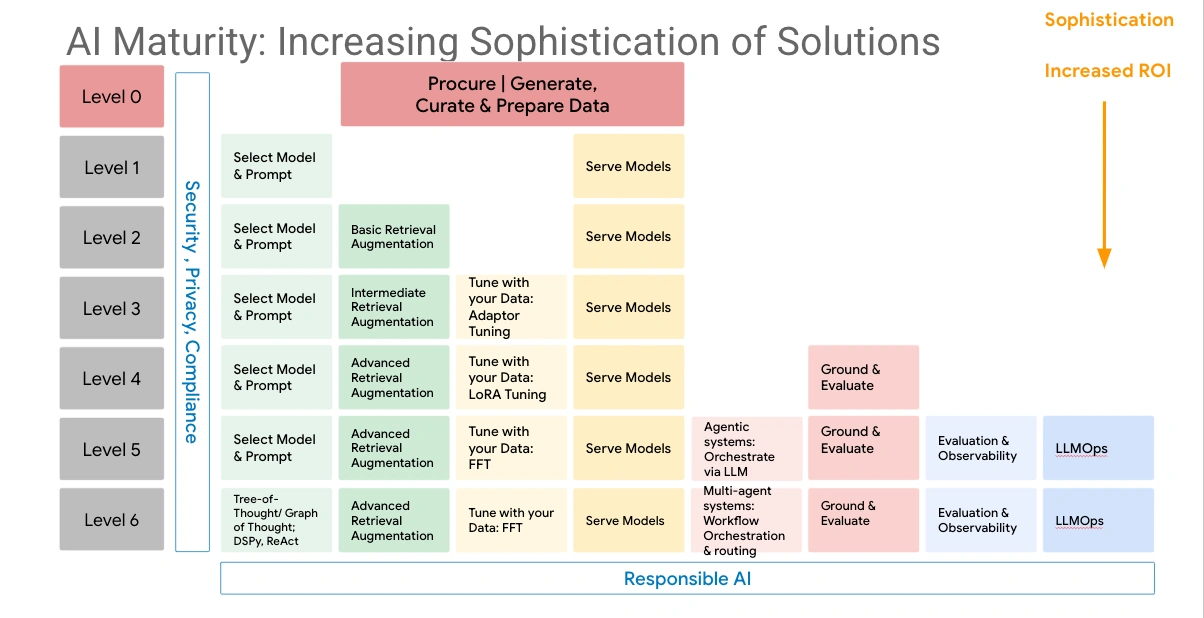

Evaluating current AI maturity

You need to know your current AI maturity level. MIT research shows organizations with advanced AI maturity perform better financially than their industry peers. AI leaders see 3.8 times better performance improvements compared to those in the bottom half. Most companies fit into one of four maturity stages:

- Experiment and Prepare (28% of enterprises) – Building AI literacy and identifying value opportunities

- Build Pilots and Capabilities (34%) – Running controlled pilots and developing core competencies

- Industrialize AI (31%) – Scaling solutions with measurable results

- AI Future-Ready (7%) – Embedding AI in all decision-making processes

A detailed AI readiness assessment should measure readiness in seven key areas: Business Strategy, AI Governance & Security, Data Foundations, AI Strategy & Experience, Organization & Culture, Infrastructure for AI, and Model Management. This review reveals strengths and areas to improve by looking at leadership vision, investment priorities, and how well systems work together.

Identifying capability gaps

Half of all professionals report that skills gaps in their teams block successful AI implementation. These gaps exist in both the technical abilities and the soft skills needed for AI adoption.

Johnson & Johnson uses “skills inference” to find these gaps. They use AI to analyze employee data to calculate skills proficiency. Their approach has three steps:

- Creating a skills taxonomy for future-ready capabilities

- Selecting appropriate sources of employee data

- Using AI to measure proficiency levels against required skills

Companies should make it clear that skills insights won’t affect performance reviews. These insights help with strategic workforce planning and development. Johnson & Johnson saw 20% more people join professional development programs after using skills inference.

Building executive sponsorship

Executive support makes the difference between AI leaders and followers. Leading AI companies have CEO or board-level support 44% of the time – twice the rate of lower performers. AI leadership has moved up from middle management to the C-suite and board levels.

Getting and keeping executive support needs visibility, reinforcement, and clear value demonstration. A technology director put it well: “Trust can be hard to win, but is easy to lose”. Successful AI projects need many people working together – business executives who care about results, analysts who understand data patterns, and IT managers who keep systems running.

The best results come from a well-laid-out governance approach. Regular steering committees keep key stakeholders informed and aligned. This shared, results-focused strategy builds trust and ensures active support for current and future AI projects.

Identify and Prioritize AI Use Cases

With clear goals and readiness checks in place, it’s time to find where AI can add the most value. Research suggests that most organizations haven’t started scaling AI across their enterprise, which makes finding the right use cases a significant competitive advantage.

Use case discovery workshops

AI Use Case Discovery Workshops help teams uncover high-value opportunities. Field engineering teams lead these collaborative sessions to guide participants through several key steps:

- Mapping business context and defining success criteria

- Finding pain points where AI can make meaningful changes

- Looking at current processes to spot areas for improvement

- Understanding data needs for potential AI solutions

Teams walk away with a priority list of use cases and a detailed report. The report outlines the best opportunities, checks if they’re doable, lists needed integrations, and gives next steps. This well-laid-out process helps teams see ROI faster by targeting the perfect scenarios for AI.

Evaluating business impact and feasibility

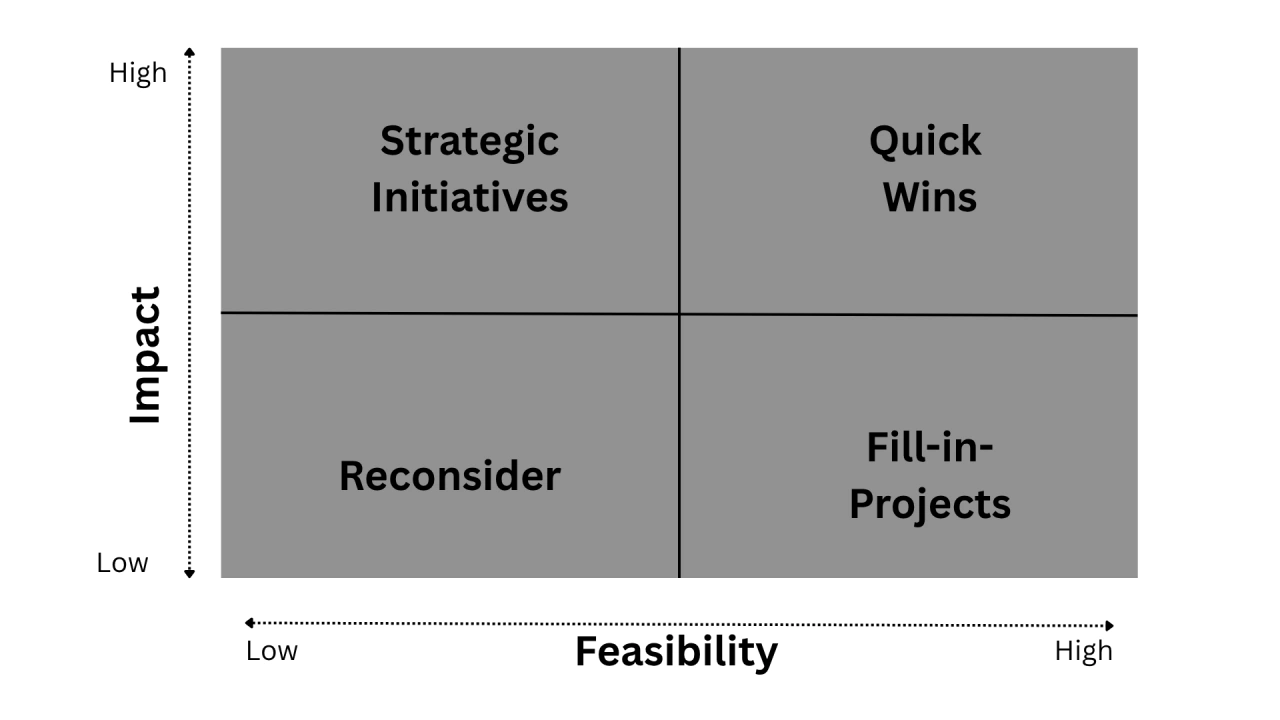

Teams need a clear framework to evaluate potential use cases. An Impact/Effort assessment helps rank initiatives:

- High ROI Focus (High Impact/Low Effort): Quick wins build momentum – these make great starting points

- High-Value/High-Effort: Game-changing projects that need more planning and resources

- Self-Service (Low Impact/Low Effort): Solutions that teams can scale across departments

- High-Effort/Low-Impact: Projects to save for later or rethink
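The four quadrants above can be sketched in a few lines of Python. This is only an illustration: the 1-to-5 scoring scale, the threshold of 3, the `UseCase` class, and the sample use cases are all assumptions, not part of any standard framework.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    impact: int  # assumed scale: 1 (low) to 5 (high)
    effort: int  # assumed scale: 1 (low) to 5 (high)


def quadrant(case: UseCase, threshold: int = 3) -> str:
    """Place a use case in one of the four Impact/Effort quadrants."""
    high_impact = case.impact >= threshold
    high_effort = case.effort >= threshold
    if high_impact and not high_effort:
        return "High ROI Focus"
    if high_impact and high_effort:
        return "High-Value/High-Effort"
    if not high_impact and not high_effort:
        return "Self-Service"
    return "High-Effort/Low-Impact"


def prioritize(cases: list[UseCase]) -> list[UseCase]:
    """Order use cases by highest impact first, then lowest effort."""
    return sorted(cases, key=lambda c: (-c.impact, c.effort))


# Hypothetical use cases scored during a discovery workshop.
cases = [
    UseCase("invoice matching", impact=4, effort=2),
    UseCase("demand forecasting", impact=5, effort=5),
    UseCase("meeting summaries", impact=2, effort=1),
    UseCase("custom chatbot rewrite", impact=2, effort=4),
]

for case in prioritize(cases):
    print(f"{case.name}: {quadrant(case)}")
```

In practice the scores would come from your workshop participants, and the threshold is something to agree on up front so that teams rank initiatives consistently.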

On top of that, a full evaluation should look at strategic fit, business value, technical feasibility, and timeline. Top performers put over 80% of their AI investments into a few high-value projects. This focus helps teams use resources where they matter most.

Creating a balanced AI portfolio

Smart AI implementation needs a portfolio approach rather than scattered, one-off projects. Teams should look at their connected portfolio in two ways:

The first view shows a progress pipeline with clear checkpoints. The second shows a mix of projects across different impact levels and timelines. This approach stops the common problem of “too many pilots with too little coordinated oversight”.

A smart AI portfolio mixes quick wins for fast results with strategic bets that could reshape operations. This balanced approach keeps momentum going while building toward bigger goals as teams get better.

Evaluate Data Availability and Quality

Image Source: Future Processing

Data is the lifeblood of every AI initiative. U.S. businesses lose over USD 3 trillion annually due to poor data quality, and individual organizations face losses of around USD 15 million a year from flawed data. Poor data quality remains one of the main reasons AI initiatives fail.

Assessing data sources

Let’s take a closer look at your data ecosystem. You need to review internal sources (CRM systems, transaction logs), external sources (third-party databases, market research), and public sources (government databases, open datasets). You should think about whether your current data represents all use cases your AI system will encounter.

Note that 91% of IT decision-makers believe their organizations need better data quality. This concern makes sense since even a small amount of low-quality data can significantly impact AI performance.

Data cleaning and labeling

Data cleaning lays the foundation for successful AI implementation. This process finds and fixes errors, inconsistencies, and inaccuracies in datasets. Key cleaning operations include:

- Handling missing values based on AI-specific contexts

- Detecting and removing duplicates through fuzzy matching

- Standardizing data types and formats to ensure consistent processing
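As a rough illustration of the three cleaning operations above, here is a minimal sketch using only the Python standard library. The sample records, the field names, the 0.9 similarity threshold, the assumption that slash-separated dates are day-first, and the choice of median imputation are all hypothetical; the right choices depend on your data and use case.

```python
from datetime import datetime
from difflib import SequenceMatcher
from statistics import median

# Hypothetical raw records with inconsistent formats and a missing value.
records = [
    {"customer": "Acme Corp", "signup": "2024-01-05", "spend": "1200"},
    {"customer": "ACME Corp.", "signup": "05/01/2024", "spend": "1200.0"},
    {"customer": "Globex", "signup": "2024-02-10", "spend": None},
    {"customer": "Initech", "signup": "2024-03-01", "spend": "800"},
]


def standardize(record):
    """Coerce spend to float (or None) and signup to an ISO 8601 date."""
    signup = record["signup"]
    for fmt in ("%Y-%m-%d", "%d/%m/%Y"):  # assumed input formats
        try:
            signup = datetime.strptime(record["signup"], fmt).date().isoformat()
            break
        except ValueError:
            continue
    spend = record["spend"]
    return {
        "customer": record["customer"].strip(),
        "signup": signup,
        "spend": float(spend) if spend is not None else None,
    }


def normalize_name(name):
    """Lowercase and drop punctuation so near-identical names compare equal."""
    return "".join(ch for ch in name.lower() if ch.isalnum() or ch.isspace()).strip()


def deduplicate(rows, threshold=0.9):
    """Keep the first of any rows whose customer names fuzzy-match."""
    kept = []
    for row in rows:
        name = normalize_name(row["customer"])
        is_dup = any(
            SequenceMatcher(None, name, normalize_name(k["customer"])).ratio() >= threshold
            for k in kept
        )
        if not is_dup:
            kept.append(row)
    return kept


def impute_spend(rows):
    """Fill missing spend with the median of the known values."""
    fill = median(r["spend"] for r in rows if r["spend"] is not None)
    return [{**r, "spend": fill if r["spend"] is None else r["spend"]} for r in rows]


cleaned = impute_spend(deduplicate([standardize(r) for r in records]))
for row in cleaned:
    print(row)
```

Deduplicating before imputing matters here: otherwise the duplicated Acme row would be counted twice when computing the fill value.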

AI systems need data labeling to provide the “answers” machines need to learn effectively. Quality labeling helps systems recognize patterns and make accurate predictions, especially for supervised learning models.

Ensuring unbiased and representative data

Machine learning models often fail to make accurate predictions for groups that are underrepresented in training datasets. If data misrepresents reality through errors, gaps, or systematic bias, models inherit these weaknesses and can amplify them at scale.

To curb this issue, use diverse training data that represents your entire user population. You can try dataset balancing techniques, but remember that removing large amounts of data might hurt overall model performance.

Advanced methods help identify and remove specific datapoints that contribute most to bias while preserving overall accuracy. This targeted approach works better than traditional data balancing methods because it keeps more training samples.

Your governance frameworks should define who can access specific datasets and under what conditions. This ensures your AI systems stay compliant with evolving regulations.

Build Scalable AI Infrastructure

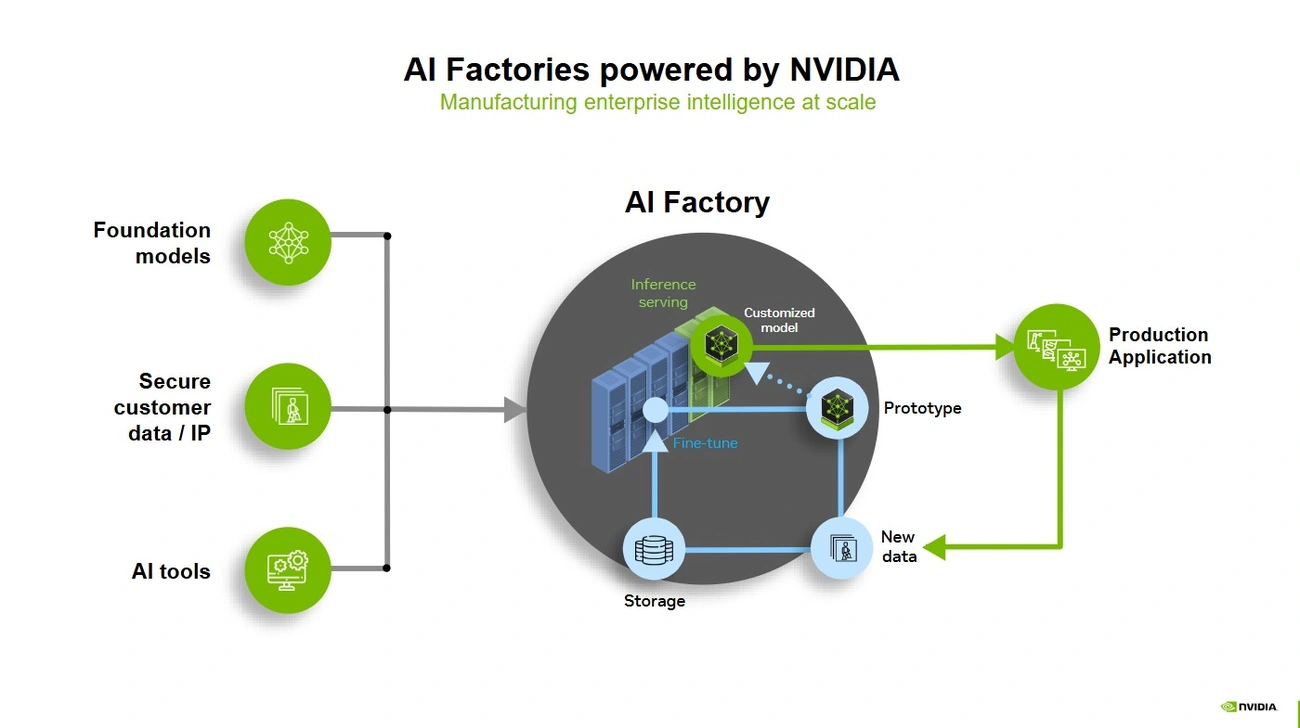

The success of AI deployment depends heavily on infrastructure choices. These decisions shape how well you can scale AI capabilities and achieve business goals that matter.

Cloud vs on-premise AI platforms

The location of AI systems is a fundamental choice organizations must make. Cloud-based AI comes with several benefits:

- Elasticity: spin up GPU clusters when you need them and shut them down when you don’t

- Access to state-of-the-art hardware without large investments

- Quick start without delays from facilities or data center upgrades

- Pay-as-you-use pricing eliminates upfront hardware costs

On-premise AI makes more sense in these scenarios:

- Your business faces strict data residency or compliance requirements

- You need consistent low latency for real-time applications

- Ownership becomes more economical with predictable long-term usage

- Your team needs complete control over the AI environment

Many organizations find success with a hybrid approach. This strategy combines both strengths—cloud handles variable training workloads while on-premises manages production inference with predictable costs.

Compute and storage requirements

AI workloads demand intensive resources and specialized hardware. GPUs remain essential for AI training, especially with deep learning models. CPUs handle the orchestration, data preprocessing, and lightweight inference tasks.

Storage becomes the first bottleneck in many cases. AI systems need massive capacity for datasets and high throughput to keep GPUs running efficiently.

Security and compliance considerations

Security must be built into AI infrastructure from day one. Studies show only 28% of companies embed security from the start. Traditional security approaches fail because AI data constantly moves between environments.

End-to-end encryption and strict access controls protect valuable AI assets. Regular security audits and compliance with evolving data protection regulations are equally necessary.

Infrastructure needs change as AI implementations mature. A “secure by design” approach helps organizations maintain integrated defenses throughout the AI lifecycle.

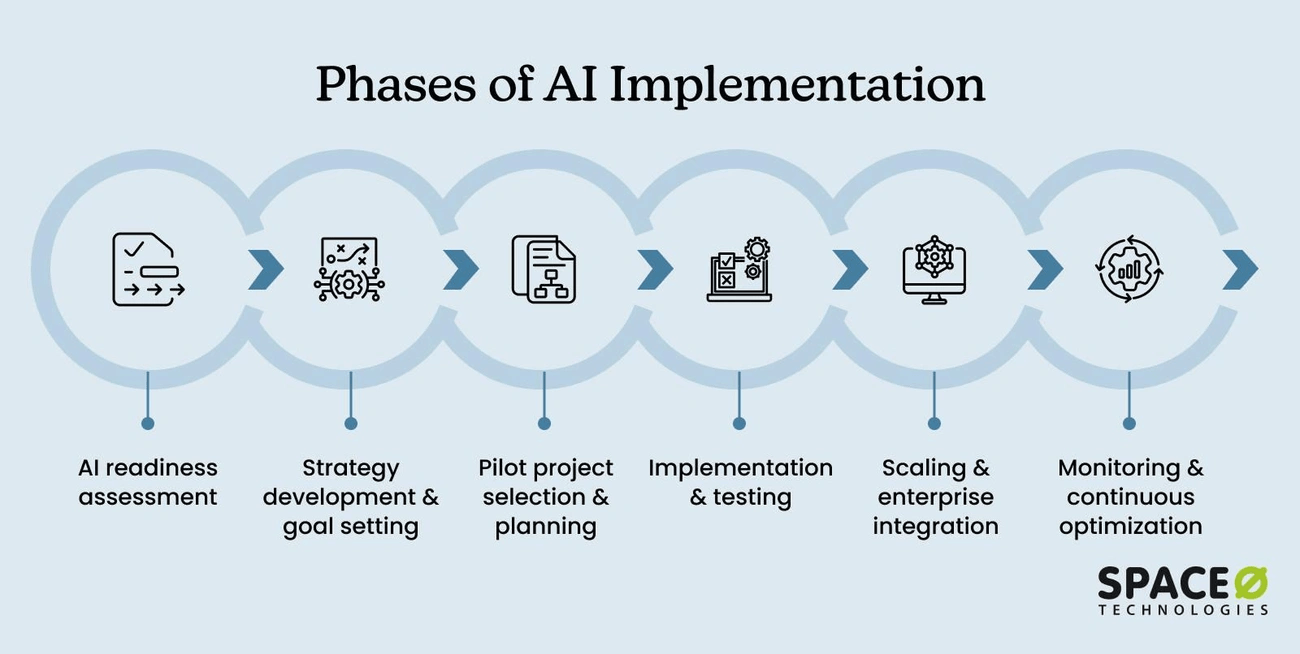

Develop a Phased AI Strategy Roadmap

A well-laid-out AI roadmap turns abstract goals into practical plans. Companies that use a phased approach get better results than those that treat AI like regular software development.

Discovery, pilot, scale, optimize

Successful enterprise AI deployments follow a proven four-phase pattern:

- Discovery Phase (3-6 months): Assess AI readiness, analyze processes, and teach executives about AI capabilities. This foundation includes strategy approval, team building, and budget planning.

- Pilot Implementation (8-16 weeks): Test AI projects in one or two promising areas to measure results and adjust systems. Teams gather stakeholder input during this key testing period before expanding.

- Complete Rollout (6-18 months): Scale AI across operations with human oversight and team collaboration. Teams focus on gradual deployment, user training, and process integration.

- Continuous Optimization: Track performance, collect feedback, update models, and explore new use cases.

Note that while executives often want ROI within six months, AI implementation usually takes 18-36 months.

Setting milestones and KPIs

Clear metrics are crucial to track progress. Your AI roadmap needs both technical and business KPIs to stay on course during implementation.

Technical metrics cover model accuracy and system uptime, but business outcomes matter most. Only 34% of managers use AI to create new KPIs, yet 90% of those who do see better results.

Companies that carefully track AI metrics perform better than those without structured measurement. An executive group should oversee KPI development that includes both improved data and smarter algorithms.

Aligning roadmap with business strategy

Your AI roadmap must connect with broader business goals. This alignment helps AI initiatives drive strategy forward instead of just adding technology.

A step-by-step approach gives you better risk management, smarter resource use, and more stakeholder support. It also lets you learn and refine based on real-world feedback.

After completing your roadmap, you need stakeholder approval. Show decision-makers the benefits, costs, and expected outcomes to get the budget you need.

Run Pilot Projects for Validation

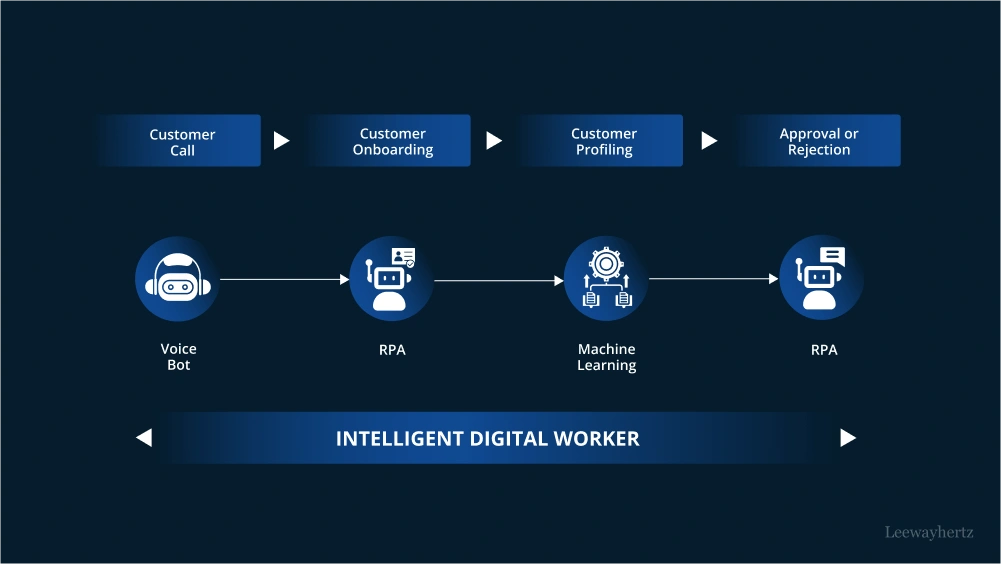

Image Source: LeewayHertz

Your AI strategy roadmap needs pilot projects as testing grounds. These controlled experiments let you test small-scale AI applications before full deployment. You’ll get a low-risk environment to assess capabilities and refine your approaches.

Selecting pilot use cases

The right use cases form the foundation of successful AI implementation. Your focus should be on finding opportunities that deliver “needle-moving” results to capture executive attention and support. Here’s what works best:

- Target narrow, well-defined workflows that use existing data

- Prioritize projects you can test with small, defined user groups

- Pick areas with clear training and onboarding paths

Startups see better ROI from AI because they have fewer established business processes to navigate. Small-team and individual implementations work better than enterprise-wide initiatives when measuring early success.

Defining success metrics

You need clear validation frameworks that build transparency and stakeholder trust before launching your pilot. The best measurement combines technical and business metrics:

Technical metrics track model performance through indicators like accuracy, recall, and throughput. You should also monitor security metrics, including model robustness and explainability levels.
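To make these technical metrics concrete, here is a small sketch that computes accuracy, recall, and throughput for a binary classifier. The labels, predictions, and request counts are made-up pilot data, and the function names are illustrative only.

```python
def classification_metrics(y_true, y_pred):
    """Return accuracy and recall for binary labels (1 = positive, 0 = negative)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    accuracy = (tp + tn) / len(pairs)
    # Recall: share of actual positives the model caught.
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return {"accuracy": accuracy, "recall": recall}


def throughput(num_requests, elapsed_seconds):
    """Requests handled per second over a measurement window."""
    return num_requests / elapsed_seconds


# Hypothetical pilot results.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

print(classification_metrics(y_true, y_pred))
print(throughput(num_requests=1200, elapsed_seconds=60))
```

Accuracy alone can mislead when positives are rare, which is one reason to track recall alongside it.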

Business metrics are what matter most. These include:

- Time savings and productivity gains (marketing teams can cut content creation time from hours to minutes)

- Cost reductions through process optimization

- Better quality outputs (improved analysis, more complete research)

Companies often struggle with AI ROI measurement because they use industrial-era metrics for cognitive-era transformation. Time savings and productivity improvements give better insights than just focusing on revenue increases.

Documenting lessons learned

Your pilot needs careful records of the data used, since changes in inputs can significantly affect outcomes. Document all experiments, including the failures, since only 20% of developed AI models reach production.

This documentation helps inform future iterations and lets your organization learn from each experience while avoiding past mistakes.

Establish AI Governance Framework

Image Source: VerityAI

A strong governance framework serves as the backbone of reliable AI implementation. Statistics show that 60% of businesses aren’t ready for AI-enhanced cyber attacks, so your business needs proper oversight mechanisms.

Risk-based governance models

Risk levels should determine how you handle AI governance. The EU AI Act shows this by making high-risk AI systems meet strict standards for transparency, accountability, and human oversight. Your organization should match its oversight intensity to potential risks. You need to:

- Set up a designated governance body (technical board, council, or AI ethics office)

- Put systematic review processes in place for each AI use case

- Create clear accountability and escalation protocols

A Harvard expert puts it well: “When it comes to building an ethical AI strategy, it has to have teeth. There has to be some consequence”.

Ethical AI policies

Ethical principles should guide your AI implementation. UNESCO’s recommendation highlights the protection of human rights and dignity as the cornerstone of responsible AI, with a focus on transparency, fairness, and human oversight. Your policies must cover:

- Data privacy and security safeguards

- Fairness and bias mitigation protocols

- Transparency requirements for AI systems

- Human alternatives and fallback options

Regulatory compliance and documentation

The regulatory landscape for AI keeps changing rapidly. The EU, the United States, China, and many other countries are developing AI regulations. This complex situation makes detailed documentation crucial.

Your documents should show decision-making criteria, algorithmic logic, and risk assessments. Regular audits help you spot and mitigate potential compliance risks. These governance practices protect you from legal issues and build stakeholder trust. They also create a foundation for sustainable innovation as your AI implementation grows.

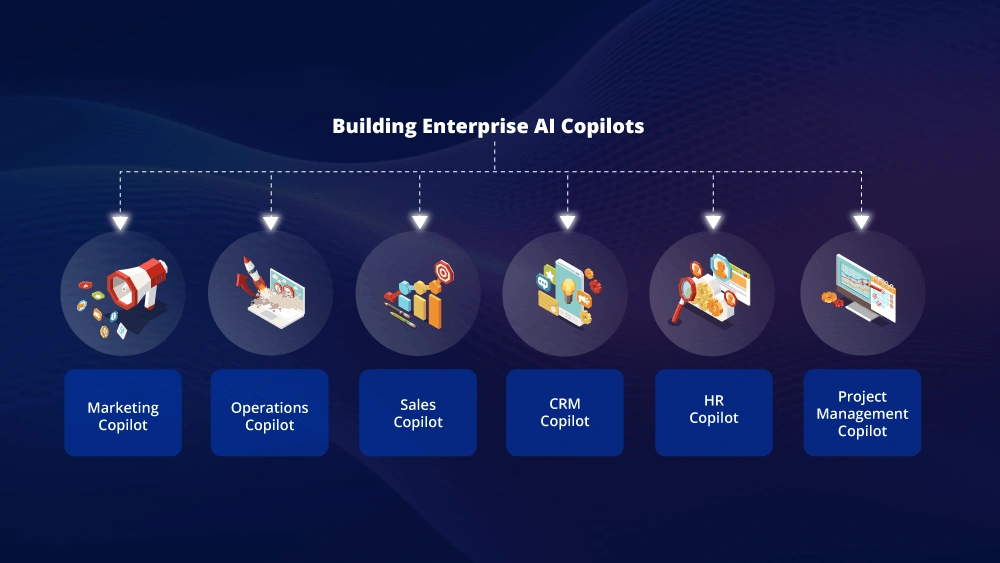

Integrate AI into Enterprise Systems

Your organization needs careful planning to make AI work with existing enterprise systems. A successful pilot should lead to careful integration of AI technology into your systems and processes.

API and data pipeline design

Good AI implementation needs reliable APIs that work for both machines and humans. By 2026, AI will become a default client for your systems, so your design principles should adapt. Your endpoints should express single intents with clear inputs and predictable outputs. Data pipelines should stay stable and scalable as storage environments evolve rapidly from on-premises to cloud and hybrid systems.

Ensuring system interoperability

System interoperability is essential when technical components need to talk to each other. AI can’t work alone; it needs data, workflows, systems, and people to work together. Open standards and frameworks that handle technical compatibility, security, and governance let AI agents access databases and applications without vendor lock-in. Favor open standards and protocols that support seamless integration.

Minimizing disruption during integration

A smooth integration depends on keeping existing workflows intact. You shouldn’t create new processes unless absolutely necessary. Here’s what you should do:

- Get a full picture of current systems before adding AI

- Roll out AI solutions step by step

- Work together with technology providers to ensure compatibility

This step-by-step approach keeps productivity high during changes and maximizes business results.

Implement Change Management and Training

The human element remains central to successful AI implementation. Research shows 98% of employees believe they need reskilling due to generative AI, while 57% say their employer’s AI training falls short.

Building AI literacy

Successful AI adoption needs strong literacy as its foundation. Companies should design role-specific training programs that let employees experiment with AI tools hands-on. Organizations that let their staff practice with AI in risk-free environments see substantially faster adoption rates. The best training programs combine technical knowledge with real-world applications to help employees understand where human judgment matters most.

Addressing employee concerns

Organizations must tackle AI-related employee concerns head-on. About 73% of workers worry they won’t get enough training. Companies can overcome this resistance by:

- Acknowledging fears about job security

- Providing clear information about AI usage

- Showing how AI will augment rather than replace human capabilities

Creating AI champions

Natural influencers who become AI champions speed up adoption across organizations. These peer supporters demonstrate practical uses through micro-demos and office hours. McKinsey’s success came from an adoption team that profiled user types and customized training accordingly. This created a “snowball effect” of employee participation. These champions help translate AI strategy into daily work and transform experimenters into accelerators.

Monitor, Maintain, and Optimize AI Systems

The success of AI implementation depends on robust monitoring and optimization practices. AI systems need constant oversight to maintain peak performance in real-world conditions.

Performance monitoring tools

Detailed AI monitoring gives you visibility into your entire AI stack. The core team needs tools that track metrics like response time, quality, token usage, and infrastructure performance. These dashboards help teams spot problems quickly by showing the complete AI ecosystem on a single screen, from model responses to database connections and API calls. This visibility becomes crucial when AI systems move from isolated experiments to production scale because manual oversight becomes impossible.

Model retraining and drift detection

Model accuracy can degrade quickly as production data shifts away from training data, sometimes within days of deployment. Teams should set up automated drift detection that flags when performance drops below set thresholds. Effective approaches include statistical metrics that compare data samples and model-based methods that measure similarity against reference baselines. Organizations need trigger-based retraining protocols that balance scheduled updates with faster retraining when major drift occurs.
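One statistical metric that compares data samples is the Population Stability Index (PSI). The sketch below is a minimal pure-Python version for a single numeric feature; PSI is just one option among several, and the bin count, the 0.2 retraining threshold, and the sample data are illustrative assumptions to tune for your own system.

```python
import math


def psi(reference, current, bins=10):
    """Population Stability Index between a reference and a current sample."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clipped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # A small floor avoids taking log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


def needs_retraining(psi_value, threshold=0.2):
    """Trigger retraining when drift exceeds the (assumed) threshold."""
    return psi_value > threshold


# Hypothetical feature values: training-time sample vs. shifted production sample.
training_sample = [i / 100 for i in range(100)]
production_sample = [v + 0.5 for v in training_sample]

score = psi(training_sample, production_sample)
print(f"PSI = {score:.2f}, retrain: {needs_retraining(score)}")
```

A check like this can run on a schedule against each important input feature, with the retraining trigger feeding whatever pipeline your team already uses.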

Continuous improvement loops

AI optimization needs structured feedback cycles. A four-stage pattern works best: Plan, Do, Check, Adjust. This method delivers measurable improvements and emphasizes progress over perfection. Teams should also consider AI-powered feedback mechanisms in which models analyze their own performance patterns, identify root causes of issues, and suggest specific optimizations. By monitoring, maintaining, and optimizing AI systems, organizations create a cycle in which improvements compound over time.

Measure Business Impact and ROI

Measuring tangible outcomes is a vital final step for successful AI implementation. Companies that measure AI effects are five times more likely to effectively align incentives with objectives than those using traditional metrics.

Tracking technical and business KPIs

AI measurement success depends on tracking both operational and business value metrics. Common operational KPIs across industries include:

- Process effectiveness metrics – These metrics show how AI affects business processes and workflows

- Productivity value metrics – Direct improvements like reduced document processing times fall under this category

- Cost savings metrics – Efficiency gains from containment rates and reduced hiring needs demonstrate value

Business value KPIs convert operational improvements into financial results. Customer service teams should track metrics such as containment rates, average handle time, and customer satisfaction scores. Each AI implementation requires careful consideration since improving one KPI might affect another.

Reporting to stakeholders

Stakeholder communication requires translating technical achievements into business value. Research shows that 60% of survey respondents believe improving KPIs, not just performance against them, leads to better decision-making. Key reporting areas include:

- Risk reduction and governance improvements

- Innovation metrics showing new product development

- Customer experience improvements

Scaling based on success

Your AI strategy roadmap should change based on measured results. Teams need to establish clear KPI baselines before implementing AI. ROI calculations follow this formula: (AI Benefits - AI Costs) / AI Costs. A detailed evaluation should document both direct impacts and downstream effects that magnify results. This measurement approach creates the foundation for sustainable AI implementation in business.
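The ROI formula above is simple enough to sketch directly. The function name and the dollar figures below are hypothetical; what counts as a benefit or a cost is something your finance team needs to define.

```python
def roi(benefits, costs):
    """ROI as defined above: (AI Benefits - AI Costs) / AI Costs."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) / costs


# Hypothetical first-year figures for a single AI initiative.
annual_benefits = 450_000  # e.g., time savings plus cost reductions
annual_costs = 300_000     # e.g., licenses, infrastructure, training

print(f"ROI: {roi(annual_benefits, annual_costs):.0%}")
```

With these numbers the initiative returns 50 cents of net benefit for every dollar spent, which is the kind of figure stakeholders can compare across projects.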

Comparison Table

| Implementation Step | Main Goal | Core Activities | Success Metrics | Common Challenges |
| --- | --- | --- | --- | --- |
| Define Clear Business Objectives | Set precise targets for AI initiatives | Find specific problems, align with company goals, set KPIs | Measurable targets, defined KPIs | 95% of AI efforts fail without well-defined goals |
| Assess Organizational Readiness | Review readiness to deliver AI ambitions | Check AI maturity, find gaps, build executive support | AI maturity level (1-4), skills proficiency | 50% report skills gaps in teams |
| Identify and Prioritize Use Cases | Find high-value AI opportunities | Run discovery workshops, review impact/effort, create balanced portfolio | ROI potential, feasibility scores | No coordinated oversight of pilots |
| Evaluate Data Availability and Quality | Build strong data foundation for AI success | Review data sources, clean/label data, check for bias | Data quality scores, representation metrics | Poor data quality costs $3T annually |
| Build Scalable AI Infrastructure | Create resilient technical foundation | Choose platforms, plan compute/storage, implement security | System performance, security compliance | Only 28% build in security from start |
| Develop Phased AI Strategy Roadmap | Create clear implementation plan | Plan phases, set milestones, align with strategy | Progress against timeline, KPI achievement | 18-36 month typical timeline vs 6 month executive expectations |
| Run Pilot Projects for Validation | Test AI applications in controlled setting | Pick use cases, set metrics, document lessons learned | Technical and business metrics achievement | Only 20% of AI models reach production |
| Establish AI Governance Framework | Build oversight systems | Create policies, ensure compliance, manage risks | Compliance rates, risk assessments | 60% not ready for AI security risks |
| Integrate AI into Enterprise Systems | Link AI with existing infrastructure | Design APIs, ensure systems work together, reduce disruption | System integration success, workflow continuity | Complex integration with older systems |
| Implement Change Management | Drive organizational adoption | Improve AI knowledge, address concerns, create champions | Employee adoption rates, training completion | 57% report poor AI training |
| Monitor, Maintain, Optimize | Keep AI performing well | Watch metrics, spot drift, make improvements | System uptime, model accuracy | Model drift can happen within days |
| Measure Business Impact and ROI | Show AI implementation value | Track KPIs, update stakeholders, scale on success | ROI calculations, business value metrics | Hard to link technical and business metrics |

Key Takeaways

Successful AI implementation requires a structured 12-step approach that transforms abstract potential into measurable business value through careful planning and execution.

- Start with clear business objectives, not technology – 95% of AI efforts fail due to undefined goals; focus on specific problems AI can solve rather than adopting technology for its own sake.

- Assess organizational readiness before implementation – Only 7% of enterprises reach AI maturity; evaluate current capabilities, identify skill gaps, and secure executive sponsorship early.

- Prioritize data quality as your foundation – Poor data costs businesses $3 trillion annually; clean, unbiased, representative datasets are essential before any model training begins.

- Follow a phased approach: Discovery, Pilot, Scale, Optimize – Realistic AI timelines span 18-36 months; start with controlled pilots before enterprise-wide deployment.

- Implement robust governance frameworks from day one – Only 28% of companies embed security from the start; establish ethical policies and compliance protocols to protect against risks.

- Focus on change management and human adoption – 98% of employees need AI reskilling, yet 57% report inadequate training; build AI literacy and address concerns to ensure successful adoption.

Conclusion

This piece outlines a detailed 12-step framework that helps enterprises successfully implement AI. The journey starts with defining clear business goals and ends with measuring real business impact. Between those two points lies a structured path that turns abstract AI potential into real-world value.

Companies that use this step-by-step approach perform better than those that treat AI like regular software development. Each step builds on the previous one and creates a foundation for lasting adoption instead of scattered experiments. This approach takes time, but rushing implementation without proper planning can prove costly.

AI implementation is a human-centered effort at its core. Teams need proper training, clear communication, and strong executive support. Even technically perfect AI systems will struggle to deliver value without addressing how people adapt to change.

Quality data forms the foundation of working AI. Bad data costs businesses trillions each year and remains one of the main reasons AI projects fail. Strong data foundations must be a priority before any model training starts.

Governance frameworks protect companies while enabling new ideas. As regulations change worldwide, companies with structured oversight gain advantages through faster, more responsible deployment.

Enterprise AI success might look overwhelming, but this roadmap shows a tested way forward. Starting small, measuring progress, and scaling based on proven results helps your company avoid common issues that stop most AI projects.

You now have a practical blueprint to turn AI goals into business reality. Your company needs to implement AI – the question is how carefully you’ll plan the journey. Will you use this structured method or risk joining others whose AI projects fail to show real results?

The choice is yours, and the path to success is clear. Your AI transformation awaits.

FAQs

What is the projected state of AI in business by 2026?

By 2026, worldwide spending on AI is forecast to reach USD 2.52 trillion, a 44% year-over-year increase. Gartner predicts that by 2030, AI will account for nearly all of IT spending, indicating a significant growth in AI adoption across businesses.

How many stages are typically involved in AI development?

AI development typically involves several key stages, including data preparation, model selection, training, validation, testing, and deployment. Each stage is crucial for creating effective AI systems that can be successfully implemented in real-world scenarios.

What advancements in AI are expected by 2026?

By 2026, AI is expected to evolve towards true collaboration between technology and people. The focus will shift from AI simply answering questions and reasoning through problems to more advanced forms of human-AI interaction and partnership.

What are the essential steps in AI implementation for businesses?

Key steps in AI implementation include defining clear business objectives, assessing organizational readiness, identifying use cases, evaluating data quality, building scalable infrastructure, running pilot projects, establishing governance frameworks, and measuring business impact.

How can businesses measure the success of their AI implementations?

Businesses can measure AI success by tracking both technical and business KPIs. This includes monitoring operational metrics like process effectiveness and productivity improvements, as well as business value metrics such as cost savings, revenue growth, and customer satisfaction scores. Calculating ROI and reporting tangible outcomes to stakeholders are crucial for demonstrating AI’s impact.