AI implementation in enterprises faces a harsh reality check. Gartner predicts that companies will discard over 40% of AI projects by 2027. Business leaders rank AI as their top strategic priority, yet only 25% see substantial value from their investments.

Our analysis of 500+ companies reveals why AI implementation strategies often fail at the pilot stage. The numbers paint a grim picture: companies successfully deploy only 4 out of every 33 AI prototypes into production, an 88% failure rate when scaling AI initiatives. Data quality issues compound the problem, with 81% of AI professionals reporting such issues in their organizations. Leadership seems unaware or unconcerned, as 85% of professionals feel these problems go unaddressed.

Many companies share your struggle to move beyond proofs of concept. About two-thirds of businesses remain stuck at this stage and can’t transition to full operation. Deloitte’s 2025 CFO Survey shows that less than 40% of automation initiatives create measurable value. McKinsey’s research adds that only 30% of AI pilots achieve scaled impact.

This piece explores why, according to BCG research, only 11% of companies tap into AI’s full potential. We’ll share practical insights, grounded in real-world data, to help you scale your AI initiatives from pilot to production.

Why Most AI Pilots Fail to Scale

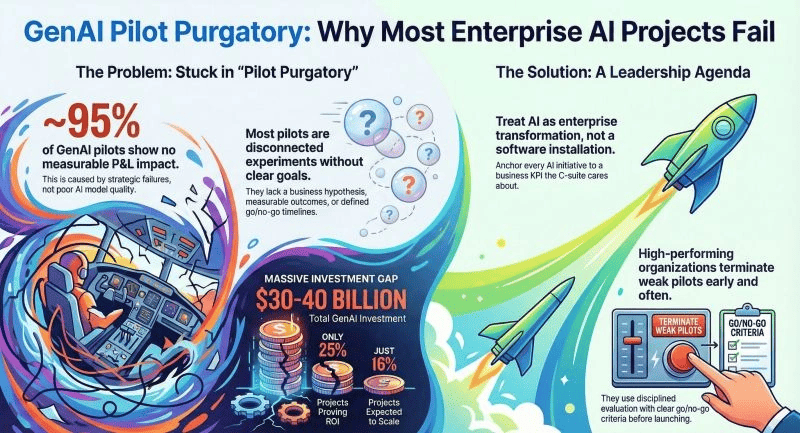

Companies face many roadblocks when trying to turn promising AI pilots into full-scale enterprise solutions. Research from MIT shows that only 5% of generative AI pilots achieve rapid revenue growth, and most stall before they can show measurable results. This high failure rate doesn’t come from poor models. The real culprit is a set of systemic problems that companies handle poorly when they try to scale AI.

Business goals and pilot objectives don’t match

The biggest problem in enterprise AI implementation comes from misaligned business goals. Companies often start AI projects without linking them to specific business outcomes. They spend nearly half their generative AI budgets on sales and marketing tools. Yet MIT research shows the best ROI actually comes from back-office automation. This mismatch between spending and potential value makes projects stall.

Things get worse when executives and implementation teams have different priorities. About 20% of infrastructure leaders say they don’t clearly understand what their CEO or CIO wants. Plus, 37% describe their work as reactive rather than strategic. This communication gap creates situations where pilots work well in controlled settings but fail to meet real business needs at scale.

BCG research backs this up. Their findings show that 70% of AI implementation problems come from people and processes, not technology limits. A successful enterprise AI strategy must start with business KPIs and measurable outcomes, not just model accuracy or technical metrics.

Data quality issues and department silos

Data quality might be the most important yet overlooked part of enterprise AI implementation. About 81% of AI professionals admit their companies still have big data quality problems. Even more worrying, 85% think leadership isn’t fixing these issues. Poor quality data makes even the best models unreliable.

Data silos make this problem worse by preventing companies from seeing their complete information picture. These isolated data stores usually mirror how organizations are structured, with information split between business units or product groups. Teams face several challenges:

- They can’t resolve duplicate data easily

- Missing information creates gaps in analysis

- Data formats don’t match across departments

- They struggle to enforce rules across systems
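The problems in the list above can be caught with basic automated checks. The sketch below is a minimal, stdlib-only example under stated assumptions: the merged records, the field names (`customer_id`, `signup_date`), and the agreed ISO date format are hypothetical, not a prescribed schema.

```python
from collections import Counter
from datetime import datetime

# Hypothetical records merged from two departments; field names are illustrative.
RECORDS = [
    {"customer_id": "C1", "signup_date": "2024-03-01", "source": "sales"},
    {"customer_id": "C1", "signup_date": "2024-03-01", "source": "support"},
    {"customer_id": "C2", "signup_date": None, "source": "sales"},
    {"customer_id": "C3", "signup_date": "03/15/2024", "source": "support"},
]

def find_duplicates(records, key):
    """Return key values that appear more than once (unresolved duplicates)."""
    counts = Counter(r[key] for r in records)
    return sorted(k for k, n in counts.items() if n > 1)

def find_missing(records, field):
    """Return indices of records where a required field is empty."""
    return [i for i, r in enumerate(records) if not r.get(field)]

def find_bad_dates(records, field, fmt="%Y-%m-%d"):
    """Return indices of non-empty values that don't match the agreed format."""
    bad = []
    for i, r in enumerate(records):
        value = r.get(field)
        if not value:
            continue  # missing values are reported by find_missing instead
        try:
            datetime.strptime(value, fmt)
        except ValueError:
            bad.append(i)
    return bad

report = {
    "duplicate_ids": find_duplicates(RECORDS, "customer_id"),
    "missing_signup_date": find_missing(RECORDS, "signup_date"),
    "nonstandard_dates": find_bad_dates(RECORDS, "signup_date"),
}
print(report)
```

Running the same checks in every department’s pipeline is one simple way to enforce shared rules across systems.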

Disconnected data pipelines cause AI models that worked in pilot tests to fail on production data. McKinsey reports that up to 90% of ML development failures stem not from bad models but from poor production practices and integration issues. Models that work perfectly in controlled settings break down when they meet real-world data.

Weak infrastructure and missing MLOps pipelines

The infrastructure supporting AI projects often can’t handle production environments. Only 38% of infrastructure and operations leaders think their current setup can meet AI’s demands. This technical gap becomes obvious when moving from small tests to full implementation. Companies often need to rework code, switch frameworks, and do major engineering work.

ML models work differently from regular software. They need constant monitoring and adjustments as conditions change. Without strong MLOps practices, models get worse over time and lose accuracy. MLOps provides essential tools for:

- Automated model training pipelines

- Version control for models and data

- Continuous integration and deployment

- Performance and drift monitoring

- Rollback and retraining mechanisms
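To make versioning and rollback concrete, here is a minimal in-memory sketch. Production tools such as MLflow’s model registry provide this functionality. The `ModelRegistry` class below is hypothetical and does not reflect any tool’s API. The artifact paths and dataset identifiers are also illustrative.

```python
from dataclasses import dataclass, field
from typing import List, Optional

@dataclass
class ModelVersion:
    version: int
    artifact_uri: str   # where the trained model is stored (hypothetical path)
    data_version: str   # identifier of the training dataset snapshot
    metrics: dict

@dataclass
class ModelRegistry:
    """Minimal registry: versioning, promotion to production, and rollback."""
    versions: List[ModelVersion] = field(default_factory=list)
    production_history: List[int] = field(default_factory=list)  # promoted versions, oldest first

    def register(self, artifact_uri: str, data_version: str, metrics: dict) -> ModelVersion:
        mv = ModelVersion(len(self.versions) + 1, artifact_uri, data_version, metrics)
        self.versions.append(mv)
        return mv

    def promote(self, version: int) -> None:
        if not 1 <= version <= len(self.versions):
            raise ValueError(f"unknown version {version}")
        self.production_history.append(version)

    def production(self) -> Optional[ModelVersion]:
        if not self.production_history:
            return None
        return self.versions[self.production_history[-1] - 1]

    def rollback(self) -> Optional[ModelVersion]:
        """Revert to the previously promoted version."""
        if len(self.production_history) < 2:
            raise RuntimeError("no earlier production version to roll back to")
        self.production_history.pop()
        return self.production()

registry = ModelRegistry()
registry.register("models/churn/v1", data_version="ds-2024-01", metrics={"auc": 0.81})
registry.register("models/churn/v2", data_version="ds-2024-02", metrics={"auc": 0.84})
registry.promote(1)
registry.promote(2)
print(registry.production().version)  # 2
registry.rollback()                    # e.g. after drift monitoring flags v2
print(registry.production().version)  # 1
```

Recording `data_version` alongside each model ties model versions back to the data that produced them. That link is what makes retraining and rollback decisions auditable.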

Companies without these capabilities can’t sustain their initial pilot success. The lack of feature stores (centralized repositories for managing model features) also makes scaling harder, because teams can’t ensure that features are computed consistently across training and production environments.
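The core idea of a feature store is to define each feature once and reuse that definition everywhere. The sketch below illustrates that principle only: it is not a full feature store. The feature names, the `feature` decorator, and the row layout are hypothetical. The same `compute_features` function serves both the offline training set and an online request, so the two can’t silently diverge.

```python
from datetime import date
from typing import Callable, Dict

# Hypothetical feature registry shared by training and serving code.
FEATURES: Dict[str, Callable[[dict], float]] = {}

def feature(name: str):
    """Register a feature function under a stable name."""
    def register(fn: Callable[[dict], float]) -> Callable[[dict], float]:
        FEATURES[name] = fn
        return fn
    return register

@feature("days_since_last_order")
def days_since_last_order(row: dict) -> float:
    return float((row["as_of"] - row["last_order"]).days)

@feature("avg_order_value")
def avg_order_value(row: dict) -> float:
    return row["total_spend"] / row["order_count"] if row["order_count"] else 0.0

def compute_features(row: dict) -> Dict[str, float]:
    """Single code path for batch training data and live requests."""
    return {name: fn(row) for name, fn in FEATURES.items()}

# Offline: build training rows from historical data.
history = [{"as_of": date(2024, 6, 1), "last_order": date(2024, 5, 22),
            "total_spend": 300.0, "order_count": 4}]
training_rows = [compute_features(r) for r in history]

# Online: a live request goes through the same definitions.
live = {"as_of": date(2024, 6, 1), "last_order": date(2024, 5, 22),
        "total_spend": 300.0, "order_count": 4}
assert compute_features(live) == training_rows[0]
print(training_rows[0])
```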

Companies need to tackle these three core challenges through a strategic approach that combines aligned business goals, better data quality, and strong technical infrastructure. Without this foundation, the gap between AI’s potential and actual value will keep growing.

Enterprise AI Implementation Challenges Identified in 500+ Companies

Our data analysis of 500+ companies shows that successful enterprise AI implementation faces more than technical obstacles. Technical, organizational, and leadership challenges combine into a complex environment that organizations must learn to navigate.

Top 5 technical blockers: integration, drift, latency, etc.

Technical hurdles in enterprise AI implementation begin with integration challenges. Companies find it hard to integrate AI with their existing systems – over 90% report this problem. The challenge grows bigger when companies try to connect AI models with complex, aging IT systems that weren’t built for modern AI applications.

Model drift is another critical barrier. Machine learning models lose their edge as they age because data patterns and relationships between variables change. Model accuracy can drop within days after deployment. Many organizations lack the right tools to spot and fix this issue.
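One common way to detect input drift is the Population Stability Index (PSI). PSI compares the distribution of a feature at training time with its distribution in production. The sketch below is a minimal version that relies on several assumptions: equal-width bins over the baseline range, 10 bins, and a small epsilon to avoid `log(0)`. The frequently quoted thresholds (below 0.1 stable, above 0.25 significant) are an industry rule of thumb, not a standard.

```python
import bisect
import math
from typing import List, Sequence

def psi(expected: Sequence[float], actual: Sequence[float], bins: int = 10, eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a production sample.

    Bins are equal-width over the baseline range; production values outside
    that range are clipped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline
    edges = [lo + i * width for i in range(bins + 1)]

    def proportions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            idx = bisect.bisect_right(edges, v) - 1
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), eps) for c in counts]  # eps avoids log(0)

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

baseline = [i / 100 for i in range(100)]       # training-time values, roughly uniform on [0, 1)
shifted = [0.5 + i / 200 for i in range(100)]  # production values concentrated in [0.5, 1)
print(round(psi(baseline, baseline), 4))  # 0.0
print(psi(baseline, shifted) > 0.25)      # True
```

In practice, a check like this would run on a schedule for each important feature, with alerts feeding the retraining process.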

Latency is the third major roadblock, especially for inference workloads. Every millisecond of delay across GPU, storage, and network hops erodes both performance and ROI. Real-time AI applications face these challenges:

- The distance data must travel adds delay

- Limited processing power throttles network throughput

- Overloaded connections drop sessions

- Performance degrades for every user when capacity is strained
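Averages hide the slow requests that real-time users actually notice, so latency is usually tracked as tail percentiles (p95, p99). The sketch below assumes the nearest-rank percentile method. `fake_inference` is a hypothetical stand-in for a call to a model endpoint.

```python
import math
import time
from typing import Callable, List

def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile, with p in (0, 100]."""
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]

def measure_latency_ms(call: Callable[[], object], runs: int = 200) -> dict:
    """Time repeated calls and summarize median and tail latency in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000)
    return {f"p{p}": percentile(samples, p) for p in (50, 95, 99)}

def fake_inference():
    # Hypothetical placeholder workload; replace with a real model client call.
    return sum(i * i for i in range(10_000))

print(measure_latency_ms(fake_inference))
```

Running the same measurement under increasing concurrent load shows where capacity strain begins to degrade performance for every user.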

Data quality is the fourth major technical challenge. IBM research shows that 72% of CEOs believe proprietary data holds the key to AI value. Yet many companies still work with incomplete, outdated, or isolated datasets that undermine even strong models.

Infrastructure limits block progress, too. Only 38% of infrastructure and operations leaders think their systems can handle AI’s demands. This shortfall becomes clear when scaling from small projects to company-wide implementation.

Organizational resistance and change management issues

Technical barriers aren’t the only hurdle – organizational resistance poses equal challenges. About 70% of change programs fail because employees push back and management support falls short. By 2023, only 43% of employees said their company handled change well, down from 60% in 2019.

Job loss fears drive much of this resistance. Workers worry AI will make their skills obsolete. People also distrust AI technologies because of algorithm bias concerns and AI’s “black box” decision-making.

Poor communication makes things worse. While 74% of leaders say they involve employees in change, only 42% of employees feel included. This gap leaves AI projects struggling regardless of their technical merit.

Leadership gaps and unclear success metrics

Leadership issues might be the biggest challenge in enterprise AI implementation. Half of AI decision-makers say they can’t estimate or show AI’s value. Projects fall into “AI hype traps” like using too many resources or growing too big without clear metrics.

BCG reports that 74% of companies fail to get real value from AI even after two years. MIT’s research shows 95% of AI pilots never grow beyond experiments. This pattern suggests that many leaders don’t know how to define success.

Directors with AI expertise made up just 2% of boards in 2025, which means many of the people making big investment decisions don’t understand what AI can and can’t do. Meanwhile, 51% of managers and employees say leaders fail to set clear success metrics for change.

McKinsey’s research confirms that measuring success beyond technical metrics poses the real challenge. Their report puts it well: “Our ability to cross that chasm from inward-focused technical metrics into more business value metrics is what’s going to make us very successful”.

Strategic Blueprint for Moving from Pilot to Production

Companies need a well-laid-out approach to move their AI projects from pilot to production systems. Organizations that follow a systematic enterprise AI implementation strategy see an average ROI of 28%, with returns reaching up to 149%. Teams achieve this success through faster deployment cycles, better governance, and smooth collaboration.
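The source doesn’t say how these ROI figures were measured. Assuming the conventional definition (net benefit divided by cost), a 28% ROI looks like this for a hypothetical project costing \$1M:

```latex
\text{ROI} = \frac{\text{benefit} - \text{cost}}{\text{cost}},
\qquad
\frac{\$1.28\,\text{M} - \$1.00\,\text{M}}{\$1.00\,\text{M}} = 0.28 = 28\%
```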

Start with business KPIs, not just model accuracy

Clear business objectives, not technical metrics, form the foundation of successful enterprise AI implementation. Organizations that develop forward-looking KPIs with AI see better strategic results. Teams should focus on these priorities instead of just model accuracy:

- Revenue growth and cost reduction metrics

- Customer experience improvements

- Process efficiency gains

- Working capital optimization

- Risk reduction measurements

Leaders call these metrics an “enterprise GPS” that shows teams their current position, their destination, and the path forward. Operational measures then quantify the business effect of individual use cases. For an AI assistant in customer service, for example, these include call containment rate, average handle time, and customer satisfaction scores.
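Here is a minimal sketch of how such business-facing KPIs might be computed from call records. It assumes several definitions: the `Call` fields are hypothetical, "containment" means the AI resolved the call without a human agent, and satisfaction is scored on a 1 to 5 scale.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List

@dataclass
class Call:
    resolved_by_ai: bool   # True if the AI assistant resolved the call without a human agent
    handle_seconds: float  # total handling time for the call
    csat: int              # post-call satisfaction score, assumed 1-5

def contact_center_kpis(calls: List[Call]) -> dict:
    """Business outcomes for an AI assistant, rather than model accuracy."""
    return {
        "containment_rate": sum(c.resolved_by_ai for c in calls) / len(calls),
        "avg_handle_time_s": mean(c.handle_seconds for c in calls),
        "avg_csat": mean(c.csat for c in calls),
    }

calls = [Call(True, 120.0, 5), Call(False, 480.0, 3), Call(True, 90.0, 4), Call(False, 510.0, 4)]
print(contact_center_kpis(calls))
```

Comparing these values against a pre-deployment baseline period is what connects a model to a business result.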

AI projects that lack business focus tend to fail. You should identify specific challenges to solve, get key stakeholders to support these goals, and make people accountable for results.

Build scalable data pipelines and MLOps early

Invest in MLOps infrastructure as part of your first production deployment. Industry benchmarks show that companies with formal MLOps and data governance cut their model time-to-production by 40%.

Simple practices like version control for code and datasets, reproducible environments, and basic continuous integration pipelines work well to start. More advanced features like model registries, automated retraining pipelines, and detailed monitoring systems can follow.
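Dataset version control can start very simply, by recording a content hash of every data file next to the code version used for a run. Dedicated tools such as DVC exist for this. The sketch below is a stdlib-only illustration, and its manifest format and file names are hypothetical.

```python
import hashlib
import json
import tempfile
from pathlib import Path

def fingerprint_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """SHA-256 of a file's bytes, read in chunks so large files don't fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def write_manifest(data_files, code_version: str, out: Path) -> dict:
    """Record exactly which data and code produced a training run."""
    manifest = {
        "code_version": code_version,  # e.g. a git commit hash
        "data": {str(p): fingerprint_file(p) for p in sorted(data_files)},
    }
    out.write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest

with tempfile.TemporaryDirectory() as tmp:
    data = Path(tmp) / "train.csv"
    data.write_text("id,label\n1,0\n2,1\n")
    m1 = write_manifest([data], "abc1234", Path(tmp) / "manifest.json")
    data.write_text("id,label\n1,0\n2,0\n")  # a silent label change
    m2 = write_manifest([data], "abc1234", Path(tmp) / "manifest.json")
    print(m1["data"] == m2["data"])  # False
```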

A good MLOps framework covers the complete machine learning lifecycle from data preparation to deployment and monitoring. This approach matters because studies show 47% of ML projects never leave testing, and 28% of the remaining ones still fail.

MLOps addresses two main issues: it ensures that high-quality models keep working as intended, and it lets teams detect changes such as data drift before they cause problems. It also improves collaboration by breaking down the barriers between operations and data teams that often slow enterprise AI implementation.

Design for modularity and reuse across departments

The future of AI leadership depends more on building reusable, machine-readable intelligence than creating individual models. One-off solutions lead to dead ends, while modular AI components open doors for company-wide use.

Modular AI solutions adapt to new challenges while meeting strict standards. For example, separating core AI logic from application-specific code lets teams explain and adapt each component independently. This method helps organizations:

- Deploy AI across multiple business areas without recreating core functionality

- Maintain consistent governance across implementations

- Scale solutions more efficiently with reduced development costs

- Adapt to changing business needs with minimal rework
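A minimal sketch of that separation: one core capability sits behind a small interface, and thin department-specific adapters reuse it. The `TextClassifier` protocol, the keyword-based stand-in model, and both adapters are hypothetical. A real deployment would wrap a trained model behind the same interface.

```python
from typing import Dict, List, Protocol

class TextClassifier(Protocol):
    """Core AI capability shared across departments (hypothetical interface)."""
    def predict(self, text: str) -> str: ...

class KeywordClassifier:
    """Stand-in core model; swap in a trained model without touching the adapters."""
    def __init__(self, rules: Dict[str, List[str]], default: str = "other"):
        self.rules = rules
        self.default = default

    def predict(self, text: str) -> str:
        lowered = text.lower()
        for label, keywords in self.rules.items():
            if any(k in lowered for k in keywords):
                return label
        return self.default

# Department-specific adapters add only their own logic on top of the shared core.
def route_support_ticket(model: TextClassifier, ticket: str) -> str:
    queues = {"billing": "finance-queue", "outage": "oncall-queue"}
    return queues.get(model.predict(ticket), "general-queue")

def tag_customer_feedback(model: TextClassifier, feedback: str) -> dict:
    return {"feedback": feedback, "topic": model.predict(feedback)}

core = KeywordClassifier({"billing": ["invoice", "refund"], "outage": ["down", "outage"]})
print(route_support_ticket(core, "Our site is down since 9am"))
print(tag_customer_feedback(core, "Still waiting on my refund"))
```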

Design your pipelines with reuse in mind to achieve this modularity. One expert suggests, “Keep it nice and small; build frameworks that can be run safely but united for efficiency”. This strategy helps future-proof your AI infrastructure through standalone implementation layers that run in parallel or step by step.

The true measure of AI maturity isn’t the number of experiments but how well they scale. The question becomes “Can it work again, with half the effort?” rather than “Did it work once?”

Building a Cross-Functional AI Taskforce

The success of enterprise AI implementation depends on bringing together the right people. Companies with strategically structured cross-functional teams see their implementation success rates rise by over 60%.

Roles needed: ML engineers, domain experts, product owners

AI taskforces need specific roles that work together smoothly. Machine learning engineers scale AI models in production environments. They build resilient recommendation systems and inventory algorithms. Data scientists look for patterns and build predictive models to forecast, segment customers, and optimize prices. Data engineers design vital data infrastructure and pipelines that connect systems, though teams often overlook their importance at first.

Domain experts bring essential subject matter knowledge to the table. SymphonyAI found that products built with input from business leaders who have solved real-world challenges perform better than those developed without domain expertise. These experts spot potential biases, know which questions come up often, and help data scientists structure models correctly.

Product owners bridge the gap between business and technical teams. They set the scope, convert merchandising needs into AI requirements, and pinpoint areas where AI adds the most value. They also keep track of AI-related tasks and work together with data and development teams. This role becomes more vital as 88% of companies plan to boost their organizational AI training.

Creating shared ownership between IT and business units

Strong business-IT relationships move beyond the “order-taker” model, with both groups sharing responsibility for outcomes. Teams should focus on customer and business results instead of separate technical goals. For example, one insurance company set the goal “Customers love our mobile experience.” Its key results included “20% mobile customer NPS improvement” (a business metric) and “30% Flow Time reduction for features” (a technology metric).

Companies should replace temporary project teams with stable, cross-functional product teams that combine business and technology roles. The funding model needs to change from project-based to product-based allocation. Teams review and adjust based on outcomes every quarter. One industry expert points out, “Value doesn’t emerge from the model sitting on a server somewhere. It emerges when AI capabilities meet real business context”.

Upskilling internal teams for AI literacy

Companies must boost AI literacy across their workforce to stay competitive. IBM research recommends that executives build AI capabilities throughout the organization and help employees develop new skills as AI takes over tasks that people used to handle. Priority areas include computer vision, generative AI, machine learning, natural language processing, and robotic process automation.

Organizations that see upskilling as just another training program miss the point – it’s a change management effort at its core. Training alone rarely creates lasting behavior change. Successful companies weave upskilling into daily work and connect it to clear career paths.

Leaders should demonstrate adoption because employees watch how leadership uses and talks about AI. Companies need to add AI assistants into workflows, show supervisors how to adopt these tools, update performance metrics to reward experimentation, and build peer-led support communities.

These strategic approaches to team building help enterprises overcome people-related challenges that often stop AI initiatives. They build a foundation for lasting implementation success.

Governance, Ethics, and Compliance in Enterprise AI

The future success of enterprise AI depends on reliable governance structures. Companies that deploy sophisticated algorithms need to balance state-of-the-art solutions with responsible practices.

Implementing explainable AI (XAI) for transparency

XAI builds trust in enterprise AI implementation by helping human users understand machine learning outputs, which supports model auditability and productive AI use. It also reduces the compliance, legal, and reputational risks that come with “black box” systems. Teams often hesitate to adopt traditional AI models precisely because these models act as opaque decision-makers.

XAI implementation needs three techniques:

- Prediction accuracy that determines model reliability

- Traceability that limits decision-making scope

- Decision understanding that addresses human interpretation needs
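For the simplest case, a linear scoring model, traceability and decision understanding can be exact. Each feature’s contribution relative to a baseline is its weight times its deviation from that baseline. These contributions add up to the difference in score. The credit-risk weights, bias, and baseline below are hypothetical. Nonlinear models need dedicated attribution methods such as SHAP or LIME.

```python
from typing import Dict

# Hypothetical linear risk model: score = BIAS + sum(weight * feature value).
WEIGHTS = {"debt_to_income": 2.0, "late_payments": 0.5, "years_employed": -0.1}
BIAS = -1.0
BASELINE = {"debt_to_income": 0.3, "late_payments": 1.0, "years_employed": 5.0}  # e.g. portfolio averages

def score(x: Dict[str, float]) -> float:
    return BIAS + sum(WEIGHTS[k] * x[k] for k in WEIGHTS)

def explain(x: Dict[str, float]) -> Dict[str, float]:
    """Per-feature contributions relative to the baseline applicant.

    For a linear model these sum exactly to score(x) - score(BASELINE),
    so every decision can be traced back to its inputs.
    """
    return {k: WEIGHTS[k] * (x[k] - BASELINE[k]) for k in WEIGHTS}

applicant = {"debt_to_income": 0.6, "late_payments": 3.0, "years_employed": 2.0}
contributions = explain(applicant)
for name, value in sorted(contributions.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name:>15}: {value:+.2f}")
```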

This transparency supports regulatory compliance, especially in sectors where laws like the EU AI Act require explainability.

Data privacy and regulatory compliance frameworks

Regulatory compliance has become a top priority in enterprise AI strategy. The EU AI Act bans certain AI uses and enforces strict governance rules for others, and high-risk AI systems face tough data governance requirements. The US lacks a nationwide AI law, but some states have enacted their own rules. One example is the California Consumer Privacy Act, which governs how companies handle the personal data that AI systems often rely on.

Companies need privacy practices that include data minimization, retention schedules, and consent mechanisms. Security best practices like encryption, anonymization, and access controls protect against data leaks. These safeguards help companies deal with one of their biggest challenges: staying compliant with changing regulations.
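Two of these practices, data minimization and replacing direct identifiers, can be sketched in a few lines. The allowed fields and the key below are hypothetical. The keyed hash (HMAC-SHA256) is a pseudonymization technique, not anonymization. Whoever holds the key can still re-link records, and pseudonymized data may still count as personal data under privacy laws.

```python
import hashlib
import hmac
from typing import Dict, Iterable

# Fields the (hypothetical) churn model actually needs; everything else is dropped.
ALLOWED_FIELDS = {"customer_id", "plan", "monthly_spend", "tenure_months"}

def minimize(record: Dict[str, object], allowed: Iterable[str] = ALLOWED_FIELDS) -> Dict[str, object]:
    """Data minimization: keep only fields with a documented purpose."""
    allowed = set(allowed)
    return {k: v for k, v in record.items() if k in allowed}

def pseudonymize(value: str, key: bytes) -> str:
    """Keyed hash: IDs stay joinable across tables but can't be reversed without the key."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()

def prepare_for_training(record: Dict[str, object], key: bytes) -> Dict[str, object]:
    slim = minimize(record)
    slim["customer_id"] = pseudonymize(str(slim["customer_id"]), key)
    return slim

KEY = b"demo-key"  # hypothetical; load from a secrets manager, never hard-code
raw = {"customer_id": "C-1001", "email": "ana@example.com", "plan": "pro",
       "monthly_spend": 49.0, "tenure_months": 18, "home_address": "1 Main St"}
clean = prepare_for_training(raw, KEY)
print(sorted(clean))
```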

Establishing AI ethics boards and audit trails

AI ethics boards safeguard responsible AI deployment. These advisory groups interpret ethical principles and apply them to specific projects in line with company values. Yet only 28% of companies using AI have central systems to track model changes, versions, and decision logs.

Detailed audit trails capture the complete AI lifecycle. This includes data lineage, model versions, testing logs, approval workflows, and deployment feedback. These trails tell the story of AI systems from start to present and connect technical details to governance processes. A study of 500+ companies shows that those with ethics boards handle implementation challenges better and adapt faster to regulations.
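A common way to make such a trail tamper-evident is to chain entries by hash, so that each record stores the hash of the one before it. The sketch below makes several assumptions: the `AuditTrail` class and event names are hypothetical, and entries live in memory. Hash chaining detects edits but doesn’t prevent them, so real systems would also write entries to append-only storage.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List

class AuditTrail:
    """Append-only log; each entry stores the previous entry's hash,
    so editing any earlier entry breaks verification."""

    def __init__(self) -> None:
        self.entries: List[dict] = []

    def record(self, event: str, **details) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": event,
            "details": details,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        prev = "0" * 64
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True

trail = AuditTrail()
trail.record("data_snapshot", dataset="claims-2024-06", rows=125000)  # data lineage
trail.record("model_trained", model="fraud-v3", data="claims-2024-06")
trail.record("approved", model="fraud-v3", approver="risk-committee")
trail.record("deployed", model="fraud-v3", environment="production")
print(trail.verify())  # True

trail.entries[1]["details"]["data"] = "claims-2024-05"  # tamper with history
print(trail.verify())  # False
```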

Case Studies: How Leading Enterprises Escaped Pilot Purgatory

Real-world success stories offer valuable insight into making enterprise AI work. These three examples show how companies tackled common challenges and achieved meaningful results.

JPMorgan’s COIN: Automating legal document review

JPMorgan’s Contract Intelligence (COIN) platform transformed a labor-intensive process. The company’s lawyers previously spent 360,000 hours each year reviewing commercial loan agreements; after deployment, COIN completes the same review in seconds. The platform uses machine learning algorithms to identify patterns in agreements and automatically classify clauses into roughly 150 different attributes. The implementation saved time and cut compliance-related errors by approximately 80%.

HCA’s SPOT: Reducing sepsis mortality with AI

HCA Healthcare created Sepsis Prediction and Optimization of Therapy (SPOT) to tackle sepsis, which claims 270,000 American lives annually. The algorithm-driven system tracks vital signs, lab results, and nursing reports, and detects sepsis about 18 hours earlier than the best clinicians. SPOT is estimated to have helped save 8,000 lives over five years. After deployment, mortality from severe sepsis that was not present on admission dropped 9.9%.

Ford’s predictive maintenance: Lessons from failure and recovery

Ford’s predictive maintenance implementation shows both challenges and victories. Their AI model predicted 22% of certain failures 10 days before they happened. This single application saved 122,000 hours of vehicle downtime, which equals about $7 million in potential savings. The company’s “Miniterms 4.0” system generated more than $1 million in savings during its first year by predicting manufacturing equipment slowdowns.

Conclusion

The reality of enterprise AI implementation is troubling: 88% of initiatives fail to scale beyond the pilot stage. Data from over 500 companies reveals a clear pattern: successful AI deployment needs more than technical excellence.

Business alignment is the foundation of AI implementations that work. Companies should define clear, measurable business KPIs before they begin any AI project. Without this foundation, even technically brilliant solutions will struggle to deliver tangible value.

Data quality remains a persistent challenge, with 81% of AI professionals reporting systemic problems. Organizations should build reliable data pipelines and MLOps capabilities early instead of treating them as afterthoughts.

Teams need cross-functional collaboration. ML engineers, domain experts, and product owners working together consistently outperform siloed approaches. This creates shared ownership between technical and business teams and breaks down traditional barriers that typically halt AI progress.

Success stories from JPMorgan, HCA Healthcare, and Ford show how organizations can overcome implementation hurdles by following these principles. Their experiences highlight AI’s tremendous potential when deployed strategically and responsibly.

The path from pilot to production remains challenging. Companies that establish proper governance frameworks, invest in explainable AI, and maintain regulatory compliance position themselves to achieve lasting success.

Your organization can escape “pilot purgatory” by focusing on proven strategies: clear business objectives, reliable infrastructure, collaboration, and responsible governance practices. The journey might seem daunting now, but these practical approaches can turn your AI initiatives from experimental projects into business assets that deliver measurable enterprise value.

Key Takeaways

Analysis of 500+ companies reveals critical patterns for transforming failed AI pilots into successful enterprise implementations that deliver measurable business value.

- 88% of AI pilots fail to scale: Most organizations get stuck in the proof-of-concept phase due to a lack of business alignment, data quality issues, and infrastructure gaps rather than technical model problems.

- Start with business KPIs, not model accuracy: Successful implementations prioritize revenue growth, cost reduction, and customer experience metrics over technical performance to ensure strategic alignment.

- Build MLOps infrastructure early: Organizations with formalized MLOps and data governance reduce model time-to-production by 40% and avoid the common trap of non-scalable solutions.

- Cross-functional teams increase success rates by over 60%: Combining ML engineers, domain experts, and product owners creates shared ownership between IT and business units, breaking down silos that typically halt progress.

- Data quality remains the biggest blocker: 81% of AI professionals acknowledge significant data quality issues, yet 85% report leadership isn’t addressing these problems that undermine even sophisticated models.

The companies that escape “pilot purgatory” follow a systematic approach: they align AI initiatives with clear business outcomes, invest in scalable infrastructure from day one, and foster collaboration between technical and business teams while maintaining robust governance frameworks.

FAQs

Why do most AI pilots fail to scale in enterprises?

Most AI pilots fail to scale due to a lack of business alignment, data quality issues, and infrastructure gaps. Organizations often struggle to connect AI initiatives with specific business outcomes, face challenges with siloed or inconsistent data across departments, and lack the necessary MLOps pipelines for production deployment.

What are the key components of a successful enterprise AI implementation strategy?

A successful enterprise AI implementation strategy includes starting with clear business KPIs rather than just model accuracy, building scalable data pipelines and MLOps infrastructure early, and designing for modularity and reuse across departments. It also involves creating cross-functional teams and establishing proper governance frameworks.

How can organizations overcome data quality challenges in AI implementation?

To overcome data quality challenges, organizations should prioritize building robust data pipelines, implement data governance practices, and invest in data cleaning and preparation tools. It’s also crucial to break down data silos across departments and ensure consistent data formats and quality standards throughout the organization.

What roles are essential in a cross-functional AI taskforce?

A cross-functional AI taskforce typically includes ML engineers, data scientists, data engineers, domain experts, and product owners. This diverse team composition ensures a balance of technical expertise, business knowledge, and strategic oversight necessary for successful AI implementation.

How can enterprises ensure ethical and compliant AI implementation?

To ensure ethical and compliant AI implementation, enterprises should focus on implementing explainable AI (XAI) for transparency, adhering to data privacy and regulatory compliance frameworks, and establishing AI ethics boards. Creating comprehensive audit trails and fostering a culture of responsible AI use are also crucial steps.