Key Takeaways

Before you read, here’s what you’ll learn:

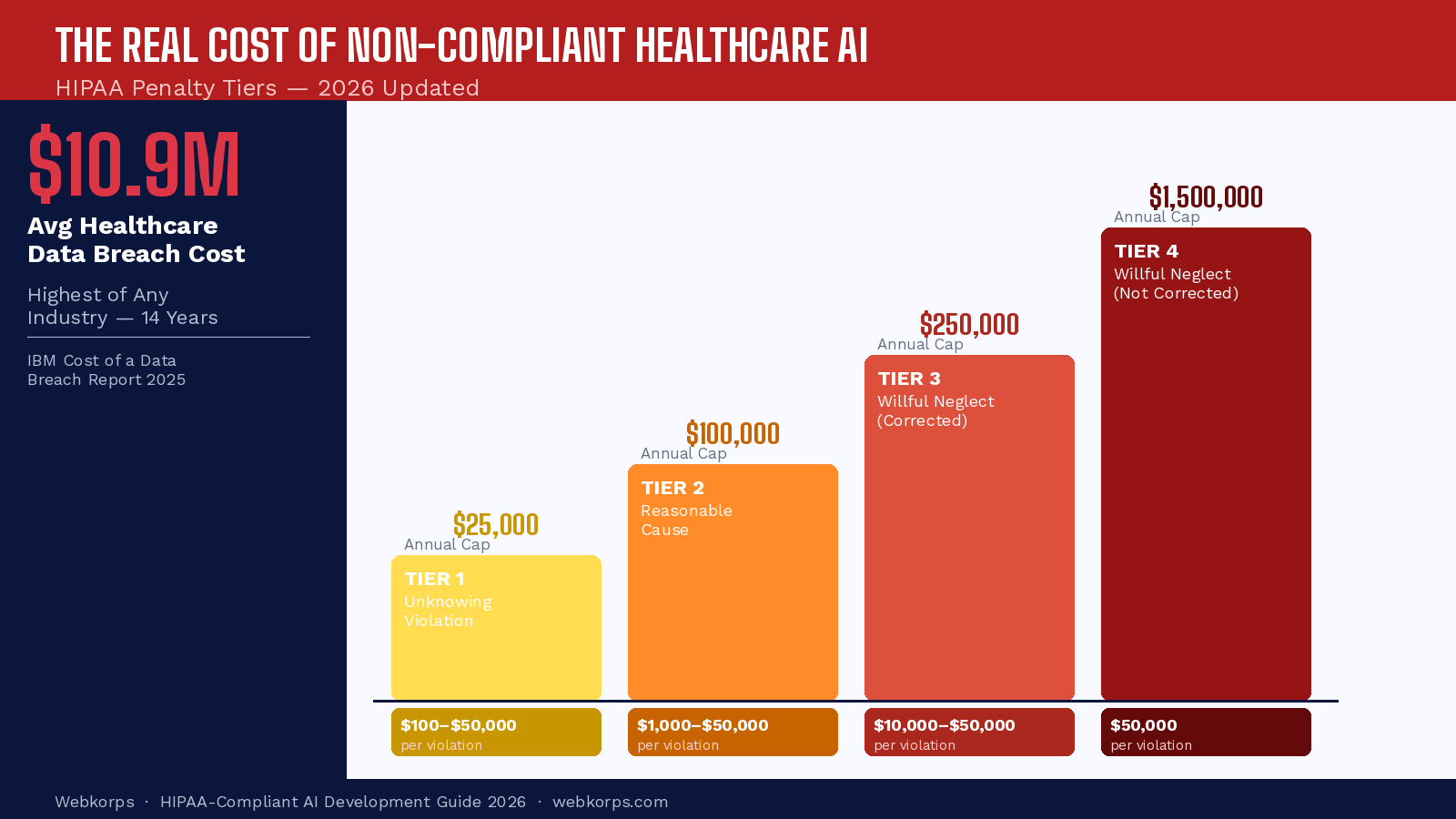

- HIPAA violations related to AI and data breaches cost healthcare organizations an average of $10.9 million per incident in 2025, the highest of any industry for 14 consecutive years.

- Only 34% of healthcare AI software vendors pass a comprehensive HIPAA Business Associate Agreement (BAA) audit on the first attempt.

- HIPAA-compliant AI development requires 3 layers of protection: technical safeguards, administrative safeguards, and physical safeguards, applied specifically to AI pipelines, not just databases.

- De-identification of PHI training data is the #1 compliance gap in healthcare AI projects; most teams discover this only after model training is complete.

- Organizations that embed HIPAA compliance from Day 1 of development spend 60% less on remediation than those who retrofit compliance after launch.

The AI Compliance Crisis No One Talks About

In 2024, a mid-sized regional hospital network received a $4.75 million HIPAA penalty, not because of a ransomware attack or an insider breach. The fine came because their AI diagnostics vendor had been processing patient data without a signed Business Associate Agreement (BAA). The vendor’s platform was hosted on a HIPAA-compliant infrastructure. The AI model was cutting-edge. The results were clinically promising. But the absence of a single signed document cost nearly $5 million and triggered an 18-month corrective action plan.

This scenario is becoming increasingly common as healthcare organizations rush to adopt artificial intelligence. The AI in healthcare market is projected to reach $188 billion by 2030, growing at a CAGR of 37%. Yet the compliance frameworks governing how that AI must handle patient data have struggled to keep pace.

The core problem is a dangerous misconception: many development teams believe that using HIPAA-compliant cloud storage or services, such as an AWS S3 bucket with encryption enabled or the Google Cloud Healthcare API, is sufficient for their AI system to be HIPAA-compliant. It is not. HIPAA compliance in the context of AI software development is a far deeper, multi-layered obligation that spans data pipelines, model training processes, inference environments, output handling, vendor agreements, and organizational governance.

This guide is written for CTOs, healthcare IT directors, software architects, and development companies building AI products for healthcare clients. By the end, you will have a precise understanding of what HIPAA demands from an AI system, where most healthcare AI projects fail compliance, how to architect a truly compliant AI pipeline, and a step-by-step checklist to validate your compliance posture before launch.

What HIPAA Actually Means for AI Systems

Most developers learn HIPAA as a data storage problem: encrypt the database, restrict access, and sign some agreements. That framing was adequate for traditional healthcare software. AI changes the rules entirely, and most teams don’t realize how much until they’re already in trouble.

The 3 HIPAA Rules That Directly Govern AI Development

- Privacy Rule: Defines what constitutes Protected Health Information (PHI) and establishes when it can be used, disclosed, or accessed. For AI systems, this becomes complex because the Privacy Rule’s definition of PHI is broader than most engineers assume. Any individually identifiable health information, including data used to train an AI model, falls under this rule.

- Security Rule: Specifies technical, administrative, and physical safeguards for electronic PHI (ePHI). Critically, these safeguards must apply not just to where data is stored, but to every system that creates, receives, maintains, or transmits ePHI, including your AI model’s inference API, training pipeline, and output storage.

- Breach Notification Rule: Requires covered entities and business associates to notify affected individuals, the Department of Health and Human Services (HHS), and in some cases the media without unreasonable delay, and no later than 60 days after discovering a breach of unsecured PHI. When an AI model leaks or improperly discloses PHI through a prompt injection attack, model inversion, or misconfigured API, this rule is triggered.

What Counts as PHI in AI? The Part Most Teams Get Wrong

HIPAA's Safe Harbor standard lists 18 specific identifiers that make health information individually identifiable. What many AI developers miss is that PHI does not require a patient name to be present. The combination of seemingly anonymous data points can constitute PHI.

Consider: a ZIP code, date of birth, and a diagnosis code together create a unique enough combination to identify an individual in a small geographic area. If your AI model was trained on or processes this combination, you are handling PHI, regardless of whether the patient’s name appears anywhere in the data.

| PHI Category | Example in AI Context | Risk Level |
| --- | --- | --- |
| Names | Patient name in training data or chatbot context | 🔴 Critical |
| Geographic data (ZIP code) | ZIP codes in predictive models | 🔴 Critical |
| Dates (DOB, admission) | Date features in ML training datasets | 🟠 High |
| Phone/Fax numbers | Contact data in scheduling AI systems | 🟠 High |
| Email addresses | Patient emails in AI communication tools | 🟠 High |
| Medical record numbers | Record IDs used as training data keys | 🔴 Critical |
| Health plan numbers | Insurance IDs in RCM AI tools | 🟠 High |
| IP addresses | Patient portal access logs used in AI | 🟡 Medium |
| Device identifiers | Wearable health device data in AI models | 🟠 High |
| Biometric data | Voice patterns in ambient documentation AI | 🔴 Critical |
| Photographs | Medical images in diagnostic AI | 🔴 Critical |
| Diagnosis/treatment data | Clinical notes in LLM fine-tuning | 🔴 Critical |
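The ZIP code, date of birth, and diagnosis code example can be checked mechanically. The sketch below is a minimal illustration, not a de-identification tool: it counts how many rows share each quasi-identifier combination and reports the rows that are unique. The sample `records`, the `QUASI_IDENTIFIERS` tuple, and both function names are hypothetical and exist only for this example.

```python
from collections import Counter

# Hypothetical records: no names, only quasi-identifiers plus a diagnosis code.
records = [
    {"zip": "59001", "dob": "1948-03-12", "dx": "E11.9"},
    {"zip": "59001", "dob": "1948-03-12", "dx": "E11.9"},
    {"zip": "59001", "dob": "1951-07-30", "dx": "I10"},
    {"zip": "10001", "dob": "1990-01-05", "dx": "J45.909"},
]

QUASI_IDENTIFIERS = ("zip", "dob", "dx")


def _group_counts(rows, keys):
    """Count how many rows share each quasi-identifier combination."""
    return Counter(tuple(row[k] for k in keys) for row in rows)


def smallest_group_size(rows, keys=QUASI_IDENTIFIERS):
    """Return the size of the rarest combination (the dataset's k)."""
    return min(_group_counts(rows, keys).values())


def unique_rows(rows, keys=QUASI_IDENTIFIERS):
    """Return rows whose quasi-identifier combination appears exactly once."""
    counts = _group_counts(rows, keys)
    return [row for row in rows if counts[tuple(row[k] for k in keys)] == 1]


k = smallest_group_size(records)
singletons = unique_rows(records)
print(f"k = {k}; {len(singletons)} of {len(records)} rows are unique")
```

In this toy dataset two of the four rows are unique on the three fields, so they could point to a single person even though no name is present. A real assessment would run against the full dataset and feed into the Safe Harbor or Expert Determination process.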

Who Is a Business Associate in the AI World?

A Business Associate is any individual or entity that creates, receives, maintains, or transmits PHI on behalf of a covered entity. In the context of AI software development, this definition has broad implications.

- Your AI model vendor: if their platform processes patient data, they are a Business Associate and must sign a BAA.

- Cloud AI API providers: sending patient data to OpenAI’s consumer API, Google’s standard Gemini API, or Anthropic’s standard API without an enterprise BAA is a HIPAA violation.

- Your ML infrastructure provider: the company hosting your model training environment, serving your inference API, and storing your model artifacts.

- Your data labeling partner: if human annotators review PHI to label training data, they are Business Associates.

- Your monitoring and observability tool: if your AI performance monitoring tool logs model inputs that contain PHI, it must have a BAA.

Critical Misconception

- The cloud is HIPAA-compliant, but that does NOT mean your AI application is HIPAA-compliant.

- AWS, Azure, and Google Cloud will sign BAAs, but a BAA only covers the infrastructure they manage.

- Your AI code, data pipelines, model training process, output handling, and all third-party AI tools you integrate are YOUR responsibility.

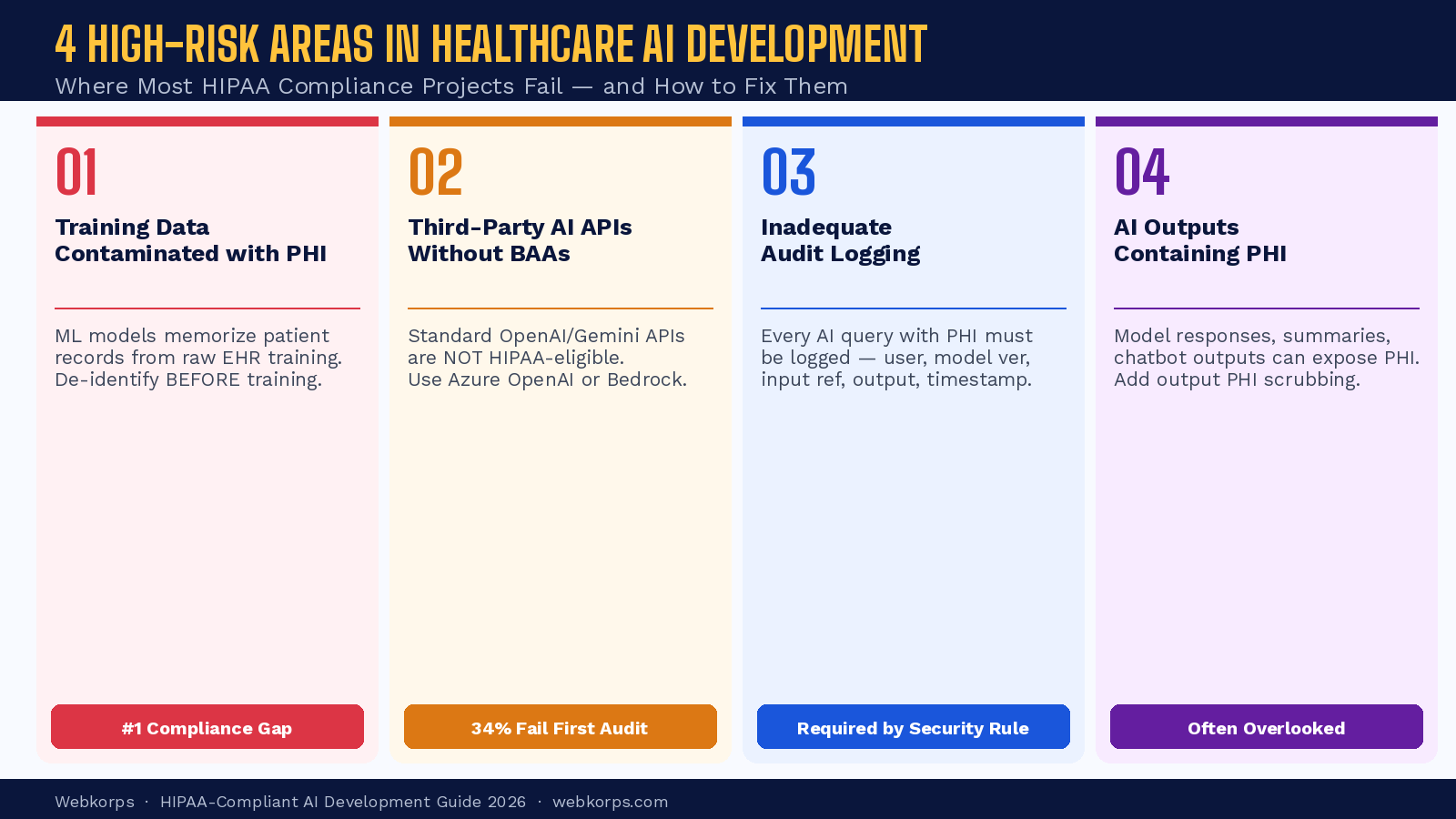

The 4 High-Risk Areas Where Healthcare AI Projects Fail HIPAA Compliance

Based on analysis of OCR enforcement actions and industry compliance audits, four specific failure points account for the majority of HIPAA violations in healthcare AI projects. Understanding these is the first step to avoiding them.

Risk Area 1: Training Data Contaminated with PHI

The most common and costly compliance failure in healthcare AI development is building machine learning models on raw, un-de-identified patient data extracted directly from EHR systems or data warehouses.

The problem is compounded by a technical reality that most non-ML engineers don’t appreciate: machine learning models can memorize training data. This phenomenon, known as training data memorization, means that a model fine-tuned on real patient records can reproduce portions of those records when prompted in specific ways. This is not theoretical. Researchers have demonstrated that large language models trained on clinical data can be induced to reproduce patient-specific information through carefully crafted prompts, a technique known as a training data extraction attack and closely related to model inversion.

The Two HIPAA-Approved De-identification Methods:

- Safe Harbor Method: Remove all 18 PHI identifiers from the dataset. The organization must also have no actual knowledge that the remaining information could be used to identify an individual.

- Expert Determination Method: A qualified statistical or scientific expert certifies, using generally accepted statistical and scientific principles, that the risk of identifying an individual is very small. This method allows more nuanced de-identification and often retains more statistical utility in the data.

Recommended Tools for PHI Detection:

- Amazon Comprehend Medical: detects PHI entities in unstructured clinical text

- Microsoft Presidio: an open-source PII/PHI detection and anonymization framework

- Google Cloud Healthcare API: supports PHI de-identification for FHIR, DICOM, and HL7v2 data

- Philter: an open-source clinical NLP tool specifically designed for PHI filtering in clinical notes

Risk Area 2: Third-Party AI APIs Without BAAs

Healthcare software development teams frequently integrate large language model APIs, such as those from OpenAI, Google (Gemini), and Anthropic (Claude), into clinical workflows such as automated note summarization, patient communication drafting, or diagnostic support chatbots. When patient data is passed to these APIs without an appropriate BAA, a HIPAA violation occurs with every single API call.

| AI API Provider | HIPAA BAA Available | Product Covered | Notes |
| --- | --- | --- | --- |
| AWS Bedrock | ✅ Yes | Claude, Llama, Titan models on Bedrock | Enterprise agreement required |
| Azure OpenAI | ✅ Yes | GPT-4, GPT-4o on Azure | BAA is included in the Azure Enterprise Agreement |
| Google Vertex AI | ✅ Yes | Gemini models on Vertex AI | Separate from Google Workspace BAA |
| OpenAI API (Standard) | ❌ No | GPT-4, o1, o3 direct API | Consumer API; NOT HIPAA eligible |
| Google Gemini API (Standard) | ❌ No | Gemini Pro/Flash direct API | Consumer API; NOT HIPAA eligible |
| Anthropic API (Standard) | ❌ No | Claude direct API | Consumer API; NOT HIPAA eligible |

The practical implication: you can use the same AI models (GPT-4, Claude, Gemini) in HIPAA-compliant healthcare applications, but you must access them through the cloud provider’s managed service (Azure OpenAI, AWS Bedrock, Google Vertex AI) with an active BAA, not through the direct consumer API.
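One way to make this rule hard to bypass is to enforce it in code rather than in a policy document. The following is a minimal sketch under stated assumptions: the endpoint names, the `ENDPOINTS` registry, and the `baa_signed` flag are all hypothetical, and the flag is expected to be maintained by whoever owns vendor agreements, not inferred by the application.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    name: str
    baa_signed: bool  # maintained by the compliance owner, not inferred by code


# Hypothetical registry; names and flags are illustrative only.
ENDPOINTS = {
    "managed-cloud-llm": Endpoint("managed-cloud-llm", baa_signed=True),
    "direct-consumer-api": Endpoint("direct-consumer-api", baa_signed=False),
}


class BAARequiredError(RuntimeError):
    """Raised when PHI would be sent to an endpoint with no BAA on file."""


def select_endpoint(name: str, contains_phi: bool) -> Endpoint:
    """Return the endpoint, refusing PHI traffic to endpoints without a BAA."""
    endpoint = ENDPOINTS[name]
    if contains_phi and not endpoint.baa_signed:
        raise BAARequiredError(f"{name} has no BAA on file; refusing to send PHI")
    return endpoint


# PHI is allowed only where a BAA is recorded.
print(select_endpoint("managed-cloud-llm", contains_phi=True).name)
try:
    select_endpoint("direct-consumer-api", contains_phi=True)
except BAARequiredError as err:
    print(f"blocked: {err}")
```

Failing closed at the routing layer means a developer cannot reach a non-covered API with patient data simply by changing a URL.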

Risk Area 3: Inadequate Audit Logging for AI Actions

The HIPAA Security Rule requires covered entities and business associates to implement hardware, software, and/or procedural mechanisms that record and examine activity in information systems that contain or use ePHI. In traditional software, this means logging who accessed which patient record and when. In AI systems, the logging requirements are significantly more complex.

Every AI model query that involves PHI must be logged. This includes the identity of the user or system that initiated the query, a reference to what data was sent to the model, the model version that processed the request, the timestamp and duration of processing, and the nature of the output generated.

What AI-Specific Audit Logs Must Capture:

- User or service account ID that initiated the AI request

- Timestamp of request initiation and completion

- Reference ID for the patient data included in the request (not the data itself in the log)

- AI model name and version number that processed the request

- Type of AI action performed (summarization, coding, classification, generation)

- Access control decision: which role authorized this query

- Output disposition: where the AI response was stored or transmitted
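The fields above map naturally onto a structured log record. The sketch below is one possible shape, assuming JSON lines written to an append-only sink; the `AIAuditEvent` class, its field names, and the sample values are hypothetical and are not a format prescribed by HIPAA.

```python
import json
import uuid
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


def utc_now() -> str:
    """Current time as an ISO 8601 string in UTC."""
    return datetime.now(timezone.utc).isoformat()


@dataclass(frozen=True)
class AIAuditEvent:
    actor_id: str            # user or service account that initiated the request
    patient_ref: str         # opaque reference ID, never the PHI itself
    model_name: str
    model_version: str
    action: str              # e.g. "summarization", "coding", "classification"
    authorizing_role: str    # role that passed the access control check
    output_disposition: str  # where the response was stored or transmitted
    started_at: str
    completed_at: str
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))


def to_log_line(event: AIAuditEvent) -> str:
    """Serialize the event as a single JSON line."""
    return json.dumps(asdict(event), sort_keys=True)


started = utc_now()
# ... the model call would happen here ...
event = AIAuditEvent(
    actor_id="svc-notes-summarizer",
    patient_ref="pt-ref-7f3a",
    model_name="clinical-summarizer",
    model_version="2.4.1",
    action="summarization",
    authorizing_role="clinician",
    output_disposition="ehr-note-draft",
    started_at=started,
    completed_at=utc_now(),
)
print(to_log_line(event))
```

Keeping the record immutable and logging only a reference ID keeps the audit log itself from becoming another PHI store.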

Risk Area 4: AI Model Outputs Containing PHI

Healthcare AI systems generate text, structured data, and recommendations based on patient information. The outputs of these systems can themselves constitute PHI, and they require the same protection as the input data. This is a compliance area that many organizations overlook until an incident occurs.

A clinical documentation AI that generates patient visit summaries is producing PHI. A diagnostic support system whose output includes a patient’s name and condition is producing PHI. An AI scheduling system that generates appointment confirmations referencing a patient’s upcoming procedure is producing PHI. All of these outputs must be encrypted, access-controlled, logged, and subject to the same retention and disposal policies as other PHI.

An additional and increasingly relevant risk is AI output that inadvertently reproduces PHI from context. Large language models used in clinical chatbots can repeat patient-specific information from their conversation history in responses to subsequent queries, particularly in multi-turn conversations. Building PHI scrubbing and output filtering as a post-processing layer before any AI response reaches the end user is not optional; it is a security requirement.
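A post-processing filter of this kind can be sketched with a few regular expressions. This is a deliberately simple illustration: the patterns cover only US-style SSNs, phone numbers, email addresses, and a hypothetical `MRN: 12345678` format, and they will miss names and free-text identifiers. A production filter would more plausibly wrap a dedicated PHI detection tool.

```python
import re

# Illustrative patterns only; the MRN format is an assumption for this example.
PATTERNS = [
    ("[SSN]", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("[MRN]", re.compile(r"\bMRN[:#]?\s*\d{6,10}\b", re.IGNORECASE)),
    ("[EMAIL]", re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")),
    ("[PHONE]", re.compile(r"(?<!\d)(?:\(\d{3}\)\s?|\d{3}[-. ])\d{3}[-. ]\d{4}(?!\d)")),
]


def scrub(text: str) -> str:
    """Replace each matched identifier with a bracketed placeholder."""
    for placeholder, pattern in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


model_output = "Call 555-123-4567 or email jane.doe@example.com about MRN: 00123456."
print(scrub(model_output))
# Call [PHONE] or email [EMAIL] about [MRN].
```

Running the filter on every response, regardless of whether the prompt was expected to contain PHI, avoids relying on upstream components to classify requests correctly.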

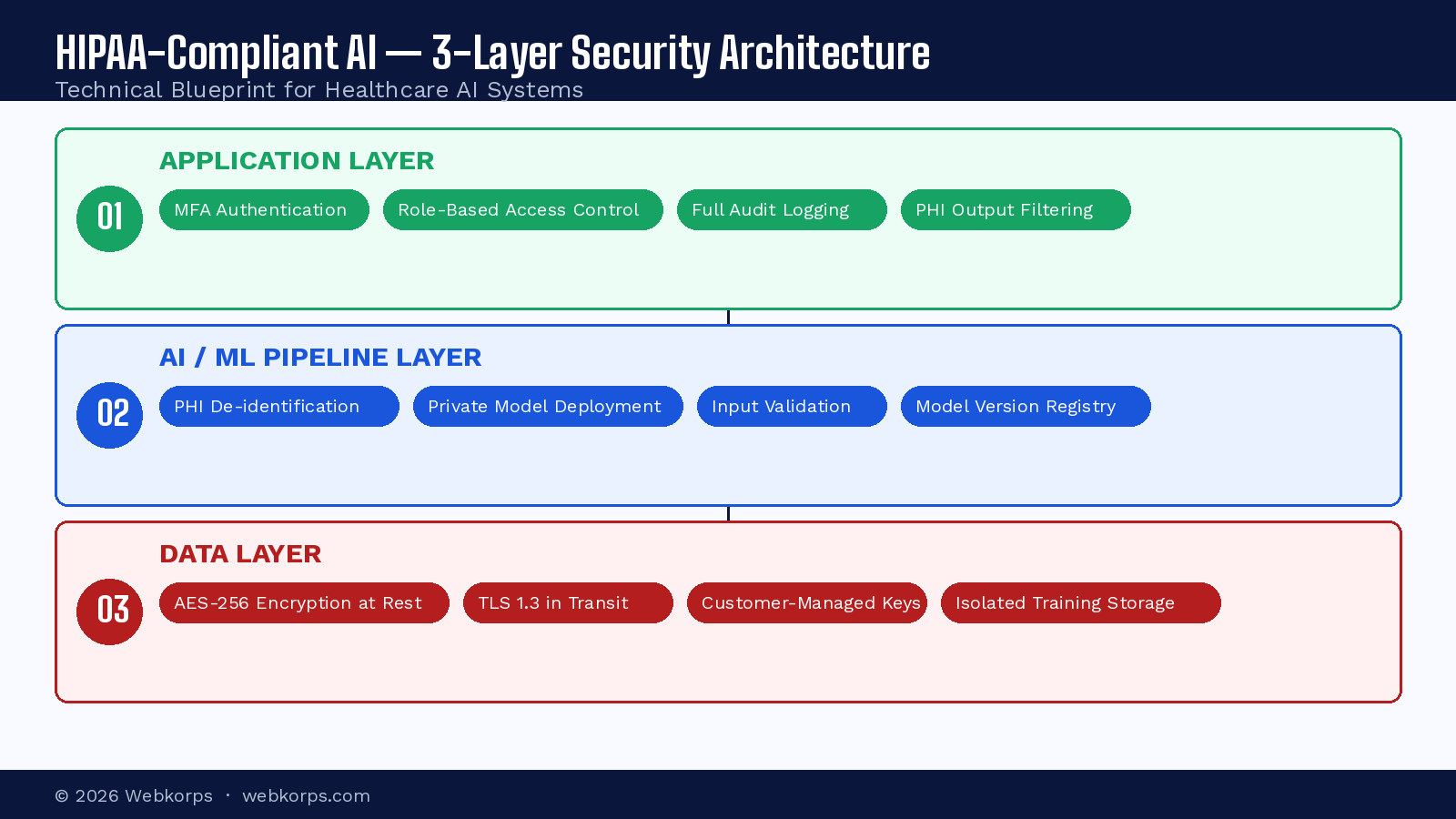

Technical Architecture for HIPAA-Compliant AI Software

Building a HIPAA-compliant AI system requires deliberate architectural decisions at every layer of the stack. The following framework, based on a 3-layer security model, provides a blueprint for development teams building AI software for healthcare environments.

Layer 1: Data Layer – Protect PHI at the Source

- AES-256 encryption at rest for all databases, storage buckets, and file systems containing PHI

- TLS 1.2 or higher for all data in transit, no exceptions

- Customer-managed encryption keys (CMK) via AWS KMS or Azure Key Vault

- Field-level encryption for the most sensitive PHI fields (SSN, diagnosis codes)

- Data access controls: Role-Based Access Control (RBAC) with minimum necessary access

- De-identified training datasets are stored in separate, isolated storage from production PHI

Layer 2: AI/ML Pipeline Layer – Secure the Intelligence

- PHI de-identification is applied before any data enters the model training pipeline

- Private model deployment – no shared multi-tenant inference environments for PHI processing

- Model artifact encryption – model weights trained on PHI should be encrypted at rest

- Model versioning and registry (MLflow, DVC) for an audit trail of which model version processed which data

- Input validation and PHI detection before model inference

- Output filtering: PHI scrubbing on all model-generated content before delivery

Layer 3: Application Layer – Control Access and Visibility

- Multi-factor authentication (MFA) is required for all users with access to PHI-processing AI features

- Session management: automatic logout after inactivity, concurrent session controls

- Comprehensive audit logging of every AI query involving PHI (see Risk Area 3 above)

- API gateway with authentication and rate limiting for all AI service endpoints

- Role-based UI controls – clinical users see different AI features than billing staff

- Secure output storage and transmission for all AI-generated PHI
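The role-based controls in Layer 3 can be backed by a field-level filter so that each role receives only the minimum necessary data. The sketch below assumes a simple allowlist per role; the role names, field names, and the `ROLE_FIELDS` mapping are hypothetical.

```python
# Hypothetical role-to-field allowlist; unknown roles get no fields at all.
ROLE_FIELDS = {
    "clinician": {"patient_ref", "diagnosis", "medications", "visit_notes"},
    "billing": {"patient_ref", "procedure_codes", "insurance_id"},
}


def minimum_necessary(record: dict, role: str) -> dict:
    """Return only the fields the given role is allowed to see."""
    allowed = ROLE_FIELDS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}


record = {
    "patient_ref": "pt-ref-7f3a",
    "diagnosis": "E11.9",
    "medications": ["metformin"],
    "visit_notes": "Follow-up in 3 months.",
    "procedure_codes": ["99213"],
    "insurance_id": "INS-0042",
}

print(sorted(minimum_necessary(record, "billing")))
print(minimum_necessary(record, "intern"))
```

Applying the filter before data reaches the prompt, not just before it reaches the screen, keeps fields a role should never see out of the model's context as well.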

Encryption Requirements in Detail

| Encryption Requirement | Standard | Implementation Example |
| --- | --- | --- |
| Data at rest: databases | AES-256 | AWS RDS encryption, Azure SQL TDE |
| Data at rest: file storage | AES-256 | S3 SSE-KMS, Azure Blob Storage encryption |
| Data in transit | TLS 1.2 minimum (TLS 1.3 recommended) | ACM certificates, Azure API Management |
| Encryption key management | Hardware Security Module (HSM) | AWS KMS, Azure Key Vault, Google Cloud KMS |
| AI model artifacts (if PHI-trained) | AES-256 | Encrypted S3 bucket with CMK |
| Backup data | AES-256 (same as primary) | Encrypted automated backups |
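On the client side, the TLS 1.2 minimum from the table can be enforced with Python's standard `ssl` module. This is a small sketch of one service calling another; the function name `make_client_context` is hypothetical, and server-side enforcement at the gateway or load balancer is still needed.

```python
import ssl


def make_client_context() -> ssl.SSLContext:
    """Build a client TLS context that refuses anything older than TLS 1.2."""
    # create_default_context() enables certificate and hostname verification.
    context = ssl.create_default_context()
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context


context = make_client_context()
print(context.minimum_version.name)
```

The resulting context can be passed to standard library clients that accept one, for example `urllib.request.urlopen(url, context=context)`.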

De-Identifying PHI for AI Training – Step by Step

- Extract the raw dataset from your EHR, data warehouse, or clinical system using a secure, access-controlled extraction process. Document every data source and field included.

- Run automated PHI detection across the full dataset using AWS Comprehend Medical, Microsoft Presidio, or equivalent tools. Flag all identified PHI instances for review.

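- Apply the Safe Harbor method: remove or transform all 18 HIPAA-identified categories. For dates, generalize to year only, and aggregate ages over 89 into a single 90-or-older category. For ZIP codes, keep only the first 3 digits, and only when the area formed by all ZIP codes sharing those 3 digits contains more than 20,000 people; otherwise replace them with 000.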

- Conduct a human expert review of flagged edge cases and a random sample of the de-identified output to catch automated detection failures – particularly in unstructured clinical text.

- Store the de-identified training dataset in an isolated storage environment with separate access controls from production PHI storage. Treat it as sensitive even after de-identification.

- Document the entire de-identification process – methods used, tools applied, expert qualifications, review procedures, and residual risk assessment. This documentation is required for HIPAA audit defense.

- Implement ongoing monitoring: as new training data is added, it must pass through the same de-identification pipeline before use. This is not a one-time process.
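The date, age, and ZIP code transformations in the Safe Harbor step can be sketched as small pure functions. This is an illustration of the generalization rules only, not a complete de-identification pipeline. The function names are hypothetical, and `zip3_population` is an assumed lookup that you would need to populate from census data; the figures below are made up.

```python
from datetime import date


def generalize_date(value: date) -> str:
    """Keep only the year of a date."""
    return str(value.year)


def generalize_age(age: int) -> str:
    """Aggregate ages over 89 into a single 90-or-older category."""
    return "90+" if age >= 90 else str(age)


def generalize_zip(zip_code: str, zip3_population: dict[str, int]) -> str:
    """Keep the 3-digit prefix only if its area has more than 20,000 people."""
    prefix = zip_code[:3]
    if zip3_population.get(prefix, 0) > 20_000:
        return prefix
    return "000"


# Made-up population figures, for illustration only.
zip3_population = {"100": 1_500_000, "590": 12_000}

print(generalize_date(date(1948, 3, 12)))
print(generalize_age(93))
print(generalize_zip("10001", zip3_population))
print(generalize_zip("59001", zip3_population))
```

Unknown prefixes fall back to `000`, so a missing population entry results in less detail being retained, not more.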

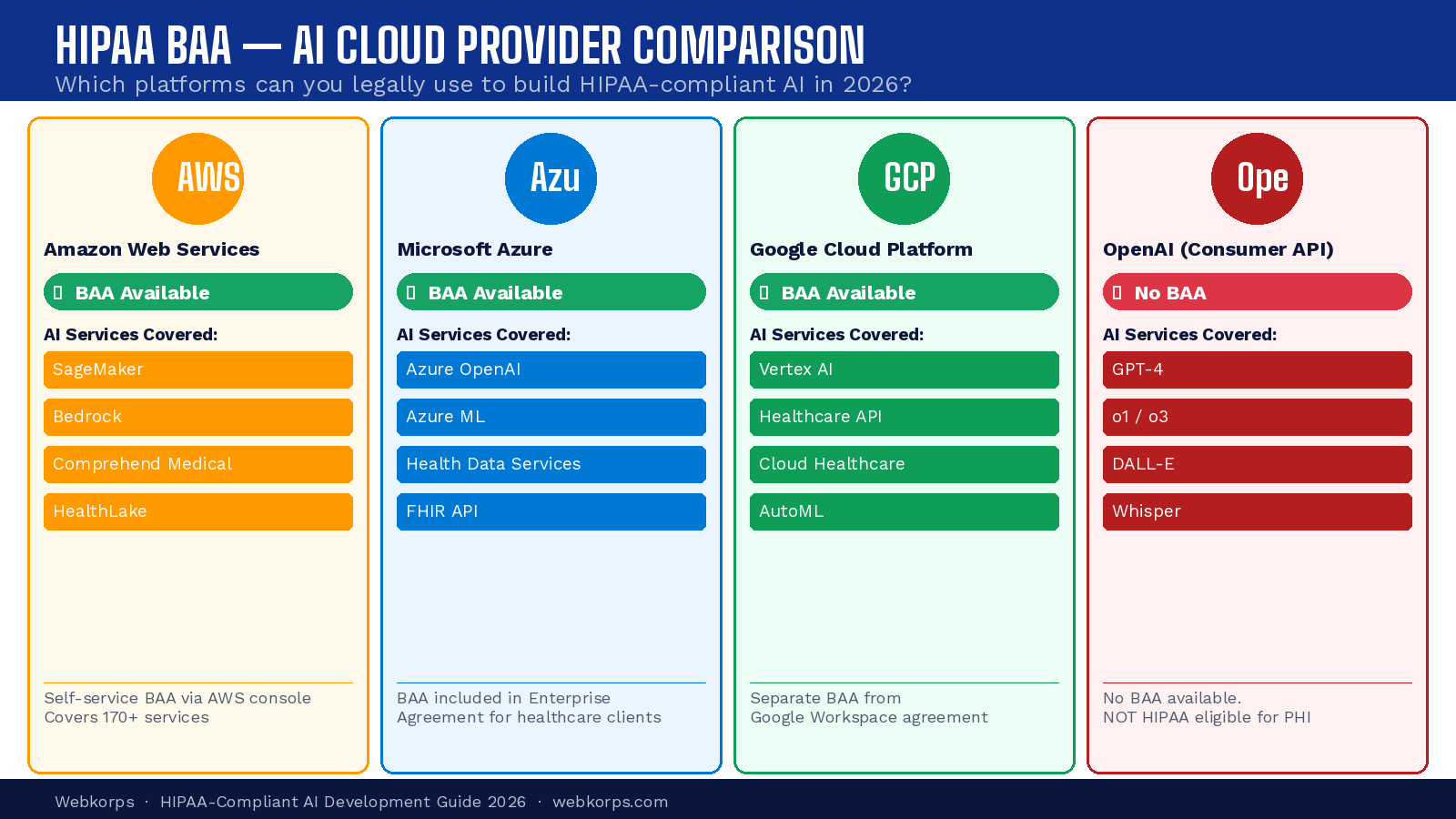

HIPAA-Compliant Cloud Infrastructure Comparison

| Cloud Provider | HIPAA BAA Available | Key AI Services (BAA Covered) | HIPAA Docs |
| --- | --- | --- | --- |
| Amazon Web Services (AWS) | ✅ Yes | SageMaker, Bedrock, Comprehend Medical, HealthLake | AWS BAA & HIPAA Whitepaper |
| Microsoft Azure | ✅ Yes | Azure OpenAI, Azure ML, Azure Health Data Services | Azure HIPAA Blueprint |
| Google Cloud Platform | ✅ Yes | Vertex AI, Healthcare API, Cloud Healthcare Data Store | Google Cloud BAA |
| OpenAI (Consumer) | ❌ No | N/A (consumer API not HIPAA eligible) | Not applicable |
| Snowflake | ✅ Yes | Data warehouse for healthcare analytics | Snowflake BAA |

AI Model Governance and Version Control

A requirement that is frequently overlooked in healthcare AI development is model governance, the documentation and tracking of which model version made which clinical decision. From a HIPAA perspective, this is part of the audit trail requirement. If a model generates an incorrect output that leads to a care decision, you must be able to identify exactly which model version was responsible, what data it processed, and what it produced.

- Use a model registry (MLflow, DVC, AWS SageMaker Model Registry) to version and track every model deployed in production.

- Maintain model cards for each deployed version: training data sources, de-identification approach, performance metrics, known limitations, and access control settings.

- Implement a model change management process: any update to a model that processes PHI should trigger a re-assessment of compliance risk before deployment.

- Retain model artifacts and associated documentation for a minimum of 6 years to align with HIPAA’s documentation retention requirements.
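A governance record for each deployed version can be as simple as a typed structure plus a retention calculation. The sketch below is one possible shape, assuming the 6-year period is counted from the date the version was last in effect; the `ModelRecord` fields and sample values are hypothetical, and a model registry would normally hold this metadata.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ModelRecord:
    name: str
    version: str
    training_data_sources: tuple[str, ...]
    deidentification_method: str  # e.g. "Safe Harbor" or "Expert Determination"
    known_limitations: str
    last_in_effect: date          # last day this version served production traffic


def retain_until(last_in_effect: date, years: int = 6) -> date:
    """Earliest date the record may be disposed of under a fixed retention period."""
    try:
        return last_in_effect.replace(year=last_in_effect.year + years)
    except ValueError:
        # Feb 29 has no counterpart in a non-leap target year; use Feb 28.
        return last_in_effect.replace(year=last_in_effect.year + years, day=28)


record = ModelRecord(
    name="clinical-summarizer",
    version="2.4.1",
    training_data_sources=("ehr-export-2024-q4-deidentified",),
    deidentification_method="Safe Harbor",
    known_limitations="Not validated on pediatric notes.",
    last_in_effect=date(2025, 3, 1),
)
print(retain_until(record.last_in_effect))
```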

Administrative & Organizational Safeguards – HIPAA Compliance Is Not Just a Tech Problem

Technical architecture addresses only one dimension of HIPAA compliance. The Security Rule explicitly requires administrative safeguards – policies, procedures, and organizational controls that govern how PHI is managed across your entire organization. For AI projects, these administrative requirements have specific implications that extend well beyond the clinical or compliance team.

HIPAA Risk Assessment for AI Projects

Before deploying any AI system that touches PHI, HIPAA mandates a formal Security Risk Analysis — a thorough assessment of the potential risks and vulnerabilities to the confidentiality, integrity, and availability of ePHI. This is not optional, and the absence of a documented risk assessment is itself a compliance violation.

Your AI-specific risk assessment must address:

- What PHI the AI system accesses, processes, or generates

- What threats exist to that PHI in the AI context (model attacks, prompt injection, API misuse)

- What vulnerabilities exist in the AI pipeline and supporting infrastructure

- The likelihood and impact of each identified threat exploiting each vulnerability

- Current security measures and their effectiveness

- A risk mitigation plan for each identified risk

The risk assessment must be repeated whenever a significant change occurs – a new AI model is deployed, a new vendor is integrated, or the system’s data access is expanded.
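The likelihood and impact items above lend themselves to a simple risk register. The sketch below assumes a 1 to 5 scale for each and a score equal to their product; HIPAA does not prescribe a scoring method, so the scale, the `Risk` class, and the sample entries are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    threat: str
    vulnerability: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)
    mitigation: str

    def __post_init__(self):
        for name in ("likelihood", "impact"):
            if not 1 <= getattr(self, name) <= 5:
                raise ValueError(f"{name} must be between 1 and 5")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def prioritize(risks):
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda risk: risk.score, reverse=True)


register = [
    Risk("API misuse", "Overly broad service account", 3, 3, "Tighten RBAC"),
    Risk("Prompt injection", "No input validation on chat endpoint", 4, 5,
         "Add input validation and output filtering"),
    Risk("Model inversion", "Model fine-tuned on raw notes", 2, 5,
         "Retrain on de-identified data"),
]

for risk in prioritize(register):
    print(risk.score, risk.threat, "->", risk.mitigation)
```

Storing the register as data makes it straightforward to re-score and re-sort whenever a new model, vendor, or data source triggers a reassessment.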

Business Associate Agreement (BAA) Checklist for AI Vendors

When evaluating AI software vendors, cloud providers, or any third party that will process PHI on your behalf, the following 10-point BAA checklist should be applied before signing any contract.

- The vendor explicitly offers and will sign a HIPAA Business Associate Agreement.

- The BAA explicitly covers AI model training – the vendor cannot use your PHI to train their general-purpose models.

- The BAA specifies data retention and deletion timelines – how long does the vendor retain your data, and how is it deleted?

- The BAA includes a subcontractor disclosure requirement – the vendor must identify and ensure compliance of any subprocessors handling your PHI.

- The BAA defines breach notification obligations – the vendor must notify you within a specified timeframe (for breaches affecting 500 or more individuals, HIPAA requires you to notify HHS no later than 60 days after discovery).

- The BAA addresses data portability – you can export your data in a standard format if you terminate the relationship.

- The BAA prohibits the vendor from combining your PHI with data from other covered entities.

- The vendor agrees to HIPAA Security Rule safeguards for all systems handling your PHI, including their AI training infrastructure.

- The BAA covers the specific AI services you intend to use – a general cloud BAA may not cover AI-specific services (e.g., Azure’s BAA covers Azure OpenAI separately from Azure Blob Storage).

- The vendor can provide evidence of HIPAA compliance – SOC 2 Type II reports, third-party security audits, or equivalent documentation.

HIPAA Training for AI Development Teams

One of the most consistently overlooked administrative requirements in healthcare AI projects is HIPAA training for the technical team. The Security Rule requires that all members of the workforce receive training on HIPAA policies and procedures to the extent necessary and appropriate for them to carry out their functions. For developers, data scientists, and ML engineers working with PHI, this obligation is substantial.

Training must cover AI-specific HIPAA risks, including:

- Proper handling of PHI in development environments – not using real patient data in local development or testing

- Secure management of API credentials and access keys for PHI-processing systems

- Recognition and reporting of security incidents involving AI systems

- Prompt injection risks and why AI input validation matters for PHI security

- Proper procedures for PHI encountered during model development or debugging

Training records must be documented and retained for a minimum of 6 years. This applies to every team member who has access to PHI or PHI-processing systems – including contractors and offshore development partners.

Incident Response Plan for AI Systems

HIPAA requires covered entities and business associates to have documented procedures to respond to suspected or known security incidents. For AI systems, the incident response plan must address scenarios that are unique to AI-powered software.

| AI-Specific Incident Type | Example Scenario | Immediate Response Action |
| --- | --- | --- |
| Model inversion attack | Attacker extracts training data from the model | Isolate model, assess PHI exposure, initiate breach assessment |
| Prompt injection | Malicious input causes the model to output PHI | Log and quarantine session, assess output exposure, patch input validation |
| API misconfiguration | PHI-processing endpoint exposed without auth | Disable endpoint immediately, assess access logs, notify CISO |
| Vendor breach | AI vendor reports PHI data breach | Obtain the vendor’s breach report, assess your exposure, and prepare HHS notification |
| Training data leakage | De-identified dataset found to contain PHI | Pause model training, quarantine dataset, initiate de-identification review |
| Unauthorized model access | Internal user accesses the AI system outside their role | Revoke access, review audit logs, and assess PHI accessed |

The Real Cost of Non-Compliant Healthcare AI – HIPAA Penalties in 2026

The financial consequences of HIPAA violations have escalated dramatically over the past decade, and regulators have demonstrated a clear willingness to pursue enforcement actions against technology vendors and software developers – not just covered entities like hospitals and insurers.

HIPAA Civil Penalty Structure

| Violation Category | Per Violation (base amount, before annual inflation adjustment) | Annual Cap (base amount) | Typical Trigger |
| --- | --- | --- | --- |
| Unknowing (no knowledge) | $100 – $50,000 | $25,000 | Technical misconfiguration without awareness |
| Reasonable Cause (knew or should have known) | $1,000 – $50,000 | $100,000 | Inadequate risk assessment or vendor oversight |
| Willful Neglect – Corrected within 30 days | $10,000 – $50,000 | $250,000 | Known violation, corrected after discovery |
| Willful Neglect – Not Corrected | $50,000 | $1.5 million | Persistent violation without remediation |

Recent AI-Related HIPAA Enforcement Patterns

A review of recent OCR enforcement actions reveals three consistent patterns in AI-related HIPAA violations:

- Vendor Management Failures: The majority of large penalty cases involve a covered entity using a technology vendor – often an AI or software vendor – without an adequate BAA. The covered entity is penalized for its vendor’s non-compliant handling of PHI.

- Risk Assessment Gaps: Organizations that deploy new technology – including AI systems – without conducting a HIPAA Security Risk Analysis are consistently penalized, even when no actual breach of PHI occurred. The absence of the risk assessment itself is a violation.

- Training Deficiencies: Findings that workforce members – including developers and IT staff – did not receive HIPAA training appear in a significant proportion of enforcement actions, often compounding penalties in multi-issue cases.

Beyond civil penalties, HIPAA violations can trigger criminal charges for knowing violations, exclusion from Medicare and Medicaid programs, reputational damage that affects patient trust, and class action litigation from affected patients. For healthcare AI vendors, a HIPAA enforcement action can be existential.

HIPAA-Compliant AI Software Development Checklist – 2026

Use this four-phase checklist to validate your compliance posture at each stage of your AI software development lifecycle. This checklist is designed for teams building AI systems that process, transmit, or generate PHI.

Phase 1: Before Development Starts

- Conduct a formal HIPAA Security Risk Analysis – document all PHI the AI system will access, all threats, all vulnerabilities, and your mitigation plan.

- Identify and sign BAAs with every vendor whose services will touch PHI – cloud providers, AI API providers, data labeling partners, and monitoring tools.

- Define and document the minimum necessary PHI required for the AI system to function – don’t build with broader access than needed.

- Designate a HIPAA Security Officer for the project – a named individual responsible for compliance decisions and documentation.

- Establish a separate, isolated development and testing environment with synthetic or properly de-identified data – no real PHI in dev/test environments.

- Train all project team members (developers, data scientists, ML engineers, QA, DevOps) on HIPAA requirements specific to their roles.

Phase 2: During Development

- De-identify all training data using Safe Harbor or Expert Determination method before any ML model training begins – document the process.

- Implement AES-256 encryption at rest for all storage systems containing PHI or PHI-derived training data.

- Implement TLS 1.2 or higher for all data in transit – enforce at the API gateway and service mesh level.

- Configure Role-Based Access Control (RBAC) for all AI services – minimum necessary access for all service accounts and user roles.

- Build comprehensive audit logging into every application component that accesses or generates PHI.

- Implement input validation and PHI detection on all data entering the AI model inference pipeline.

- Implement output filtering and PHI scrubbing on all AI-generated content before it reaches end users.

- Configure customer-managed encryption keys (CMK) via AWS KMS, Azure Key Vault, or Google Cloud KMS.

- Set up model versioning and a model registry – every model deployed to process PHI must be versioned and documented.

Phase 3: Before Launch

- Conduct a penetration test with healthcare-specific attack scenarios – prompt injection, model inversion attempts, API authentication bypass.

- Complete and document the Security Risk Analysis, including review of technical controls implemented during development.

- Review and verify all BAAs are in place, current, and cover the specific AI services being used in production.

- Test audit logs for completeness – verify that every PHI access scenario generates the required log entries.

- Conduct a tabletop exercise for the AI-specific incident response plan – ensure the team can execute breach response procedures.

- Perform a final review of all third-party integrations for BAA coverage and HIPAA compliance documentation.

Phase 4: Post-Launch & Ongoing Compliance

- Monitor AI system access logs continuously – implement automated alerting for anomalous access patterns.

- Re-conduct the Security Risk Analysis whenever a significant change occurs: new model deployment, new data source, new vendor integration, or infrastructure change.

- Maintain all HIPAA compliance documentation – policies, training records, risk assessments, BAAs, audit reports – for a minimum of 6 years.

- Conduct annual HIPAA training refreshers for all team members with PHI access.

- Review and update the AI model governance documentation – model cards, version history, training data provenance – with every model update.

- Monitor HHS guidance and OCR enforcement updates for new AI-specific compliance requirements – the regulatory landscape continues to evolve.
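Automated alerting on access logs can start with a very simple rule. The sketch below flags any account that touched more distinct patients than a configured limit within the batch of events it is given. The event shape (`actor_id`, `patient_ref`), the function name, and the threshold are assumptions for illustration, and real monitoring would typically use per-role baselines and time windows.

```python
from collections import defaultdict


def flag_broad_access(events, max_patients):
    """Return actors who accessed more than max_patients distinct patients."""
    patients_by_actor = defaultdict(set)
    for event in events:
        patients_by_actor[event["actor_id"]].add(event["patient_ref"])
    return sorted(
        actor
        for actor, patients in patients_by_actor.items()
        if len(patients) > max_patients
    )


# Hypothetical audit events for one review window.
events = [
    {"actor_id": "dr-lee", "patient_ref": "pt-1"},
    {"actor_id": "dr-lee", "patient_ref": "pt-1"},
    {"actor_id": "svc-export", "patient_ref": "pt-1"},
    {"actor_id": "svc-export", "patient_ref": "pt-2"},
    {"actor_id": "svc-export", "patient_ref": "pt-3"},
]

print(flag_broad_access(events, max_patients=2))
```

Counting distinct patients instead of raw requests avoids flagging a clinician who opens the same chart repeatedly during a visit.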

Why Healthcare Companies Choose Webkorps for HIPAA-Compliant AI Development

Building AI software that is genuinely HIPAA-compliant – not just superficially so – requires deep expertise that sits at the intersection of healthcare regulatory knowledge, AI/ML engineering, and enterprise security architecture. Most development teams have one or two of these capabilities. Building HIPAA-compliant healthcare AI requires all three.

Webkorps has been delivering custom AI and software solutions for healthcare clients for over 8 years, across 30+ countries, and with 350+ satisfied clients. Our healthcare AI development practice is built on a compliance-first engineering model, which means HIPAA safeguards are designed into the architecture from day one – not layered on as an afterthought after the system is built.

What Webkorps handles that most development shops don’t:

- BAA management and vendor assessment – we handle BAA negotiation and compliance verification for every third-party AI service in your stack.

- PHI pipeline design – we architect data flows that enforce de-identification, minimum necessary access, and audit logging at the infrastructure level, not just the application level.

- AI-specific security architecture – our engineers implement model input validation, output filtering, and PHI scrubbing as standard components of every healthcare AI system we build.

- Compliance documentation – we deliver full HIPAA risk assessment documentation, model governance records, and audit trail configuration as part of every project.

- Ongoing compliance support – HIPAA compliance for AI is not a one-time event. We provide post-launch monitoring, risk assessment updates, and model governance support as your system evolves.

Ready to Build HIPAA-Compliant AI for Healthcare?

Webkorps specializes in custom HIPAA-compliant AI software development for healthcare organizations. To schedule a free compliance consultation with our healthcare technology experts, visit webkorps.com/contact or email us at contact@webkorps.com.

Conclusion

HIPAA compliance for AI software is not a checklist item that can be delegated to a legal team or resolved by selecting a HIPAA-compliant cloud region. It is a technical and organizational discipline that must be embedded into every layer of how your AI system is designed, built, deployed, and maintained.

The organizations that get this right share a common characteristic: they treat compliance as an engineering requirement from the very first architecture decision, not as an audit exercise before launch. They de-identify training data before model training begins. They sign BAAs before the first API call. They build audit logging into the infrastructure, not just the application. They train developers on HIPAA, not just clinical staff. And they plan for breach response before they have anything to breach.

The cost of getting it wrong – $10.9 million on average per healthcare data breach, plus regulatory penalties, reputational damage, and the human cost of compromised patient trust – is simply too high in a domain where data is as sensitive as it gets.

Build it right from the beginning. The patients whose data powers your AI deserve nothing less.

Frequently Asked Questions

Is ChatGPT or GPT-4 HIPAA compliant?

The consumer versions of ChatGPT are not HIPAA-compliant for healthcare applications involving PHI, because OpenAI does not offer a Business Associate Agreement (BAA) for its consumer-facing products. The OpenAI API is not covered by a BAA by default either; OpenAI reviews BAA requests for API use case by case, and no PHI should be sent until one is signed. GPT-4 and other OpenAI models can also be used in HIPAA-compliant applications through Microsoft Azure OpenAI Service, which is covered under Azure’s HIPAA BAA. The model is the same; the deployment environment and legal agreement make the difference.

Can I use AWS or Google Cloud for healthcare AI without a BAA?

No. Using AWS or Google Cloud to store, process, or transmit PHI without an active, signed Business Associate Agreement with the provider is a HIPAA violation, regardless of what encryption or security controls you have configured. Both AWS and Google Cloud offer BAAs – AWS through its self-service BAA process, and Google Cloud through its standard customer agreements. A BAA covers only the services the provider designates as eligible under it, so confirm that every service in your AI pipeline is on that list. The BAA must be signed before any PHI is placed in the cloud environment.

How long does it take to build a HIPAA-compliant AI system?

The timeline varies significantly based on complexity. A focused AI feature (e.g., an AI-powered clinical documentation assistant integrated into an existing EHR) typically takes 4–6 months when compliance is built in from the start. A comprehensive AI platform for healthcare (covering multiple clinical workflows, full data pipelines, and extensive compliance documentation) may take 9–18 months. The most common cause of timeline overruns is starting development without a compliance-first approach and then needing significant remediation mid-project.

What is the difference between HIPAA-compliant and HIPAA-certified?

There is no official HIPAA certification. HIPAA has no government-recognized certification program, so any vendor claiming to be ‘HIPAA certified’ is using a marketing term, not a regulatory designation. What matters for HIPAA compliance is whether the organization has implemented all required administrative, physical, and technical safeguards, has conducted a Security Risk Analysis, has signed appropriate BAAs, and has documented its compliance program. Third-party audits and certifications like SOC 2 Type II can provide evidence of security controls, but they do not constitute HIPAA certification.

Do I need to de-identify data even if it’s stored on a HIPAA-compliant server?

In practice, yes, for AI model training. Even if your storage is HIPAA-compliant (encrypted, access-controlled, covered by a BAA), using identifiable PHI to train an AI model creates additional risks that HIPAA’s existing framework was not designed to address – specifically, the risk of training data memorization and model inversion attacks. Data that has been de-identified under one of HIPAA’s two recognized methods (Safe Harbor or Expert Determination) is no longer PHI and is therefore outside HIPAA’s scope, which dramatically simplifies your compliance obligations for the training pipeline. HIPAA does not mandate de-identification in every case, but most experienced healthcare AI developers now treat it as a requirement for training.
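
To make the idea concrete, here is a minimal sketch of a scrubbing step that runs on free text before it reaches a training set. It is an illustration under stated assumptions, not a de-identification method: the patterns and the `scrub` function are hypothetical, they cover only a handful of identifier types, and regular expressions alone will miss names, addresses, and many other formats. A real pipeline would need a vetted de-identification process on top of anything like this.

```python
import re

# Illustrative patterns only. They cover a few identifier types and will
# miss names, addresses, and many real-world formats.
PATTERNS = [
    ("SSN", re.compile(r"\b\d{3}-\d{2}-\d{4}\b")),
    ("EMAIL", re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")),
    ("PHONE", re.compile(
        r"(?<!\d)(?:\(\d{3}\)\s*|\d{3}[-.\s])\d{3}[-.\s]\d{4}(?!\d)")),
    ("DATE", re.compile(r"\b\d{1,2}/\d{1,2}/\d{4}\b")),
    ("MRN", re.compile(r"\bMRN[:\s#]*\d+\b", re.IGNORECASE)),
]


def scrub(text: str) -> str:
    """Replace each matched identifier with a bracketed placeholder."""
    for label, pattern in PATTERNS:
        text = pattern.sub(f"[{label}]", text)
    return text


def has_residual_identifiers(text: str) -> bool:
    """Cheap gate to run on every record just before training starts."""
    return any(pattern.search(text) for _, pattern in PATTERNS)


note = ("Pt seen 03/14/2024, MRN: 448812, call 555-867-5309 "
        "or jane.doe@example.org, SSN 123-45-6789.")
clean_note = scrub(note)
```

Running the gate (`has_residual_identifiers`) as a hard failure at the start of the training job is one way to catch records that bypassed the scrubbing step, which is cheaper than discovering the gap after the model is trained.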

What happens if my AI vendor has a data breach?

If your AI vendor is a Business Associate and they experience a breach of your PHI, they are required to notify you without unreasonable delay and no later than 60 days after discovering the breach. Most BAAs specify a much shorter window so that you have time to meet your own deadlines. You, as the covered entity, remain responsible for notifying affected individuals and HHS, within 60 days of discovery for breaches affecting 500 or more individuals. This is why the BAA must clearly specify the vendor’s breach notification obligations, and why maintaining an AI-specific incident response plan – including the scenario of a vendor breach – is essential.
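
An incident response plan is easier to follow when the deadlines are computed rather than remembered. The sketch below shows one way to derive them. The function names are hypothetical, and the rules encoded are simplifying assumptions: a 60-day outer limit for each party, with HHS notice for breaches affecting fewer than 500 individuals due within 60 days after the end of the calendar year of discovery. Your BAA and counsel determine the dates that actually apply.

```python
from datetime import date, timedelta

OUTER_LIMIT_DAYS = 60  # assumed regulatory maximum for each party


def vendor_notice_due(vendor_discovered: date, baa_window_days: int) -> date:
    """Latest date the vendor may notify you: the BAA window, capped at 60 days."""
    days = min(baa_window_days, OUTER_LIMIT_DAYS)
    return vendor_discovered + timedelta(days=days)


def covered_entity_deadlines(discovered: date, affected: int) -> dict[str, date]:
    """Deadlines for notifying affected individuals and HHS."""
    individuals = discovered + timedelta(days=OUTER_LIMIT_DAYS)
    if affected >= 500:
        hhs = individuals
    else:
        # Smaller breaches: reported after the calendar year closes.
        hhs = date(discovered.year, 12, 31) + timedelta(days=OUTER_LIMIT_DAYS)
    return {"individuals": individuals, "hhs": hhs}
```

With a 10-day BAA window and a vendor discovery date of 1 March 2025, `vendor_notice_due` returns 11 March 2025. Negotiating a short window like this leaves most of the 60 days for your own investigation and notifications.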

How much does HIPAA-compliant AI software development cost?

The cost of building HIPAA-compliant AI software depends on scope, complexity, and the development partner’s approach. As a general framework, compliance infrastructure (encryption, logging, access controls, BAA management) typically adds 20–35% to baseline development costs when built correctly from the start. When retrofitted after development, compliance remediation typically costs 60–100% of the original development budget. A focused HIPAA-compliant healthcare AI feature from a qualified development partner might start at $80,000–$150,000. Enterprise healthcare AI platforms with comprehensive compliance frameworks range from $300,000 to $1 million or more. The appropriate response to cost concerns is to compare these numbers to the average cost of a healthcare data breach: $10.9 million.
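
The percentages above are easier to compare when applied to a concrete budget. The following sketch does that arithmetic. The $200,000 baseline is a hypothetical figure chosen only for illustration, and it assumes both percentage ranges apply to the same baseline development cost.

```python
# Percentage ranges quoted in the answer above.
BUILT_IN_PCT = (20, 35)    # compliance built in from the start
RETROFIT_PCT = (60, 100)   # compliance retrofitted after development


def overhead_range(baseline_usd: int, pct_range: tuple[int, int]) -> tuple[int, int]:
    """Return the (low, high) compliance cost in whole dollars."""
    low, high = pct_range
    return (baseline_usd * low // 100, baseline_usd * high // 100)


baseline = 200_000  # hypothetical baseline development budget
built_in = overhead_range(baseline, BUILT_IN_PCT)
retrofit = overhead_range(baseline, RETROFIT_PCT)

print(f"Built in from the start: ${built_in[0]:,} to ${built_in[1]:,}")
print(f"Retrofitted later:       ${retrofit[0]:,} to ${retrofit[1]:,}")
```

On this hypothetical budget, building compliance in costs $40,000 to $70,000, while retrofitting costs $120,000 to $200,000, roughly three times as much at either end of the range.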